Microsoft AutoGen: Using Open-Source Models

Published October 26, 2023 by alex


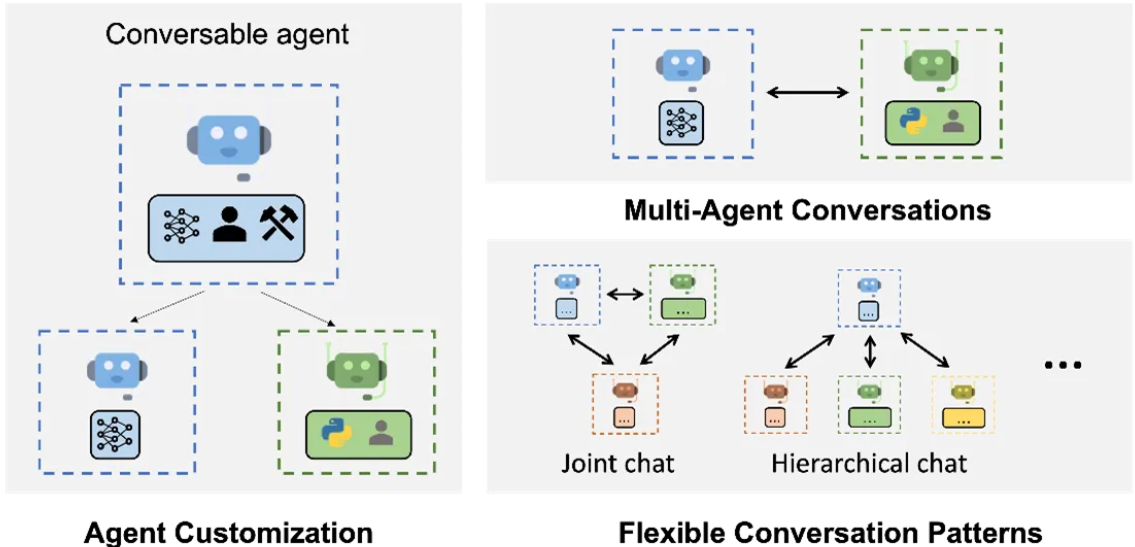

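One breakthrough making waves in AI is Microsoft's AutoGen. Autonomous agents are an eye-catching frontier of generative AI and have drawn a great deal of attention lately. Although they are far from solving every problem or going mainstream, they are widely recognized as a new direction in the foundation-model space. At its core, Microsoft's AutoGen is a framework that simplifies building task-solving conversational agents by letting agents converse with one another.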

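Imagine building complex multi-agent conversation systems with ease. AutoGen lets developers do exactly that in just two steps.

The first step is to define conversable agents with specific capabilities and roles.

The second step is to define their interaction behavior: specify how an agent should respond when it receives a message from another agent, which in turn determines the flow of the conversation.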
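To make the two steps concrete, here is a minimal, framework-free sketch of the pattern. It is not AutoGen's API: the `Agent` class, its `reply_fn` field, and the `run_chat` helper are hypothetical names chosen for illustration. Step one corresponds to creating agents with a name and a role; step two is the reply function each agent applies to an incoming message, which together decide how the conversation flows.

```python
# Hypothetical sketch of the two-step pattern; NOT AutoGen's classes or API.
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Agent:
    """Hypothetical minimal agent (not AutoGen's class)."""
    name: str
    role: str  # step 1: what this agent is for
    reply_fn: Callable[[str], Optional[str]]  # step 2: how it answers a message

    def receive(self, message: str) -> Optional[str]:
        # Returning None means the agent has nothing more to say.
        return self.reply_fn(message)


def run_chat(initiator: Agent, recipient: Agent, message: str,
             max_turns: int = 6) -> List[Tuple[str, str]]:
    """Let two agents take turns replying; return (speaker, text) pairs."""
    transcript = [(initiator.name, message)]
    speaker, other = recipient, initiator
    for _ in range(max_turns):
        reply = speaker.receive(message)
        if reply is None:
            break
        transcript.append((speaker.name, reply))
        message = reply
        speaker, other = other, speaker
    return transcript


# Step 1: two agents with different roles. Step 2: canned reply rules.
teacher = Agent("teacher", "asks questions",
                lambda msg: "What do you study?" if msg.startswith("I am") else None)
student = Agent("student", "answers questions",
                lambda msg: "I am a student." if msg == "Who are you?" else "I study English.")

transcript = run_chat(teacher, student, "Who are you?")
for speaker, text in transcript:
    print(f"{speaker}: {text}")
```

In a real AutoGen setup the reply functions are backed by an LLM rather than fixed rules, but the division of labor is the same: who the agents are, and how each one reacts to a message.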

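But how does AutoGen accomplish all this? By default it uses the OpenAI API and relies on a well-structured configuration list. Let's look at how it works under the hood: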

```python
# One entry per endpoint; keys follow the pyautogen 0.1.x format used in this article.
openai_config_list = [
    {  # OpenAI
        "model": "gpt-4",
        "api_key": "<your OpenAI API key here>",
    },
    {  # Azure OpenAI
        "model": "gpt-4",
        "api_key": "<your Azure OpenAI API key here>",
        "api_base": "<your Azure OpenAI API base here>",
        "api_type": "azure",
        "api_version": "2023-07-01-preview",
    },
    {  # Azure OpenAI
        "model": "gpt-3.5-turbo",
        "api_key": "<your Azure OpenAI API key here>",
        "api_base": "<your Azure OpenAI API base here>",
        "api_type": "azure",
        "api_version": "2023-07-01-preview",
    },
]
```
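When a request is made with a `config_list`, AutoGen tries the entries in order and uses the first one that does not raise an error, so the list doubles as a fallback chain. The sketch below illustrates that idea in plain Python. It is a simplified, hypothetical stand-in (`call_with_fallback` and `fake_call` are invented names), not AutoGen's actual implementation, and it makes no network calls.

```python
# Hypothetical illustration of config-list fallback; NOT AutoGen's implementation.
from typing import Any, Callable, Dict, List


def call_with_fallback(config_list: List[Dict[str, Any]],
                       call: Callable[[Dict[str, Any]], str]) -> str:
    """Try each config in order; return the first successful result."""
    last_error: Exception = RuntimeError("config_list is empty")
    for config in config_list:
        try:
            return call(config)
        except Exception as err:  # e.g. auth, rate-limit, or connection errors
            last_error = err
    raise last_error


def fake_call(config: Dict[str, Any]) -> str:
    # Stand-in for a real API call: pretend only the Azure entries are reachable.
    if config.get("api_type") != "azure":
        raise ConnectionError(f"{config['model']} unavailable")
    return f"answered by {config['model']} via {config['api_type']}"


configs = [
    {"model": "gpt-4", "api_key": "<your OpenAI API key here>"},
    {"model": "gpt-4", "api_key": "<your Azure OpenAI API key here>", "api_type": "azure"},
    {"model": "gpt-3.5-turbo", "api_key": "<your Azure OpenAI API key here>", "api_type": "azure"},
]
print(call_with_fallback(configs, fake_call))
```

Putting the preferred endpoint first and cheaper or backup endpoints later is the usual way to take advantage of this ordering.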

Once the configuration is in place, you can send a query like this:

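```python
import autogen

question = "Who are you? Tell it in 2 lines only."

# Send the prompt using the endpoints in openai_config_list (tried in order).
response = autogen.oai.Completion.create(config_list=openai_config_list, prompt=question, temperature=0)

# extract_text returns a list with one string per choice; take the first.
ans = autogen.oai.Completion.extract_text(response)[0]

print("Answer is:", ans)
```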
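`extract_text` pulls the generated text out of each choice in the response. As a rough illustration of what that involves, here is a hypothetical `extract_texts` helper working on a plain dict shaped like an OpenAI response: completion-style choices carry a `text` field, while chat-style choices carry `message.content`. This is a simplified sketch, not AutoGen's source, and the sample response is hand-written rather than real model output.

```python
# Hypothetical sketch of text extraction; NOT AutoGen's extract_text source.
from typing import Any, Dict, List


def extract_texts(response: Dict[str, Any]) -> List[str]:
    """Return the generated text of every choice in an OpenAI-style response."""
    texts = []
    for choice in response["choices"]:
        if "text" in choice:  # legacy completion format
            texts.append(choice["text"])
        else:  # chat completion format
            texts.append(choice["message"]["content"])
    return texts


# A hand-written response dict for illustration (not real model output).
sample = {"choices": [{"index": 0,
                       "message": {"role": "assistant",
                                   "content": "I am an AI assistant."}}]}
print("Answer is:", extract_texts(sample)[0])
```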

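AutoGen is not limited to OpenAI models. If you have downloaded a compatible model locally, you can integrate it seamlessly: run it on a local server that exposes an OpenAI-compatible endpoint, take that endpoint's URL, set it as `api_base` in your configuration, and you are ready to go!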
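Being "OpenAI-compatible" means the local server accepts the same HTTP requests as the OpenAI API, for example a `POST` to `{api_base}/chat/completions` with a JSON body containing `model` and `messages`. The sketch below only builds such a request with Python's standard library, to show roughly what the OpenAI client sends on AutoGen's behalf. `build_chat_request` is a hypothetical name, the URL and model name are the ones used later in this article, and nothing is actually sent.

```python
# Hypothetical helper: builds (but does not send) an OpenAI-style request.
import json
import urllib.request


def build_chat_request(api_base: str, model: str, prompt: str,
                       api_key: str = "not-needed",
                       temperature: float = 0.0) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the given base URL."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=api_base.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


req = build_chat_request("http://localhost:1234/v1", "llama 7B q4_0 ggml",
                         "Who are you? Tell it in 2 lines only.")
print(req.get_method(), req.full_url)
# urllib.request.urlopen(req) would send it once a local server is running.
```

Because only the base URL changes, the same client code can talk to OpenAI, Azure, or a local server.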

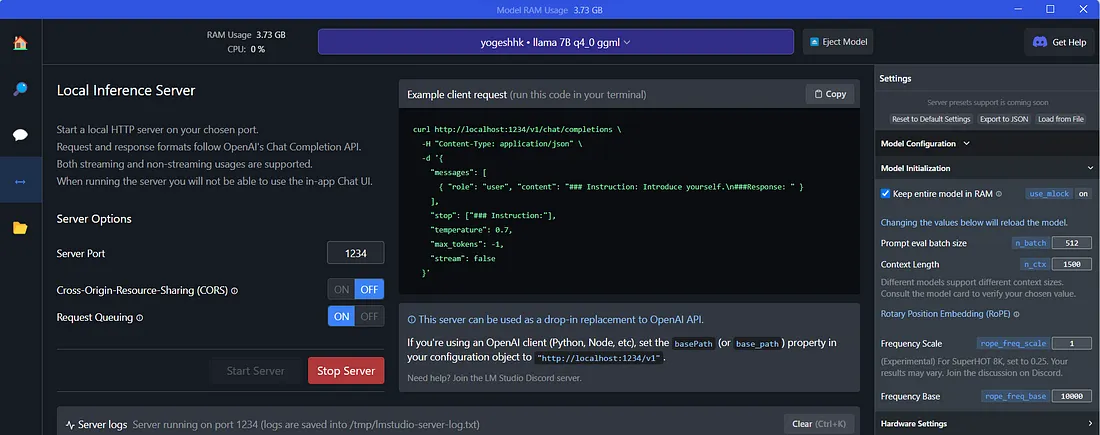

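There are several ways to serve a local model behind an OpenAI-compatible API. "modelz-llm" did not work for me because it only supports UNIX systems, and the "llama-cpp-server" approach did not work either. What did work was LM Studio.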

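Here are the steps:

1. Download models from the LM Studio UI or, if you already have them, place them in `C:\Users\<windows login>\.cache\lm-studio\models\<author>\<repo>`.
2. Check that LM Studio recognizes the model. In my case, it picked up a model named `llama-7b.ggmlv3.q4_0.bin`.
3. Use CHAT to check that the model responds well.
4. Start the server, copy its base_path URL, and set it in two places: the `openai` module settings and the AutoGen config list.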
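Before pointing AutoGen at the server, it can help to confirm the endpoint is reachable. This is a hedged sketch: it assumes the server exposes the standard OpenAI `GET /v1/models` route (OpenAI-compatible servers commonly do, but check your LM Studio version), and `server_is_up` is a hypothetical helper name.

```python
# Hypothetical reachability check; assumes the server implements GET {api_base}/models.
import json
import urllib.request


def server_is_up(api_base: str, timeout: float = 2.0) -> bool:
    """Return True if GET {api_base}/models answers with valid JSON."""
    url = api_base.rstrip("/") + "/models"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            json.load(resp)  # an OpenAI-style server returns a JSON model list
            return True
    except (OSError, ValueError):
        # OSError covers URLError/HTTPError, refused connections, and timeouts;
        # ValueError covers a response body that is not JSON.
        return False


if __name__ == "__main__":
    print(server_is_up("http://localhost:1234/v1"))
```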

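```python
import autogen
import openai

# Configure OpenAI settings (place 1): point the openai client at LM Studio's local server
openai.api_type = "openai"
openai.api_key = "..."
openai.api_base = "http://localhost:1234/v1"
openai.api_version = "2023-05-15"

# Log the requests and responses made through autogen.oai.ChatCompletion
autogen.oai.ChatCompletion.start_logging()

# Place 2: the same base URL in AutoGen's config list
local_config_list = [
    {
        'model': 'llama 7B q4_0 ggml',
        'api_key': 'any string here is fine',  # the local server does not check the key
        'api_type': 'openai',
        'api_base': "http://localhost:1234/v1",
        'api_version': '2023-05-15'
    }
]
```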

With this setup, you can run completions against your local model exactly as demonstrated above.

But let's not stop there. Imagine a more elaborate scenario in which two AI agents hold a conversation with each other.

```python
import autogen  # needed for autogen.oai.ChatCompletion.start_logging() below
from autogen import AssistantAgent
import openai

# Configure OpenAI settings
openai.api_type = "openai"
openai.api_key = "..."
openai.api_base = "http://localhost:1234/v1"
openai.api_version = "2023-05-15"

autogen.oai.ChatCompletion.start_logging()

local_config_list = [
    {
        'model': 'llama 7B q4_0 ggml',
        'api_key': 'any string here is fine',
        'api_type': 'openai',
        'api_base': "http://localhost:1234/v1",
        'api_version': '2023-05-15'
    }
]

# Both agents use the same local model; only their system messages differ.
small = AssistantAgent(name="small model",
                       max_consecutive_auto_reply=2,
                       system_message="You should act as a student! Give response in 2 lines only.",
                       llm_config={
                           "config_list": local_config_list,
                           "temperature": 0.5,
                       })

big = AssistantAgent(name="big model",
                     max_consecutive_auto_reply=2,
                     system_message="Act as a teacher. Give response in 2 lines only.",
                     llm_config={
                         "config_list": local_config_list,
                         "temperature": 0.5,
                     })

# "big model" opens the conversation by sending the first message to "small model".
big.initiate_chat(small, message="Who are you?")
```
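`max_consecutive_auto_reply=2` caps how many times each agent replies automatically in a row before it stops, which is what keeps this two-agent chat from running forever. The following is a simplified, hypothetical simulation of that cap, using placeholder replies instead of model calls (`simulate_chat` is an invented name). AutoGen's real termination logic has more conditions, such as termination messages and human input modes.

```python
# Hypothetical simulation of a per-agent auto-reply cap; NOT AutoGen's code.
from typing import List, Tuple


def simulate_chat(initiator: str, recipient: str, first_message: str,
                  max_consecutive_auto_reply: int = 2) -> List[Tuple[str, str, str]]:
    """Return (sender, receiver, text) triples until the next speaker is out of replies."""
    log = [(initiator, recipient, first_message)]
    replies = {initiator: 0, recipient: 0}  # auto replies sent so far by each agent
    speaker, listener = recipient, initiator
    while replies[speaker] < max_consecutive_auto_reply:
        replies[speaker] += 1
        log.append((speaker, listener, f"{speaker} reply #{replies[speaker]}"))
        speaker, listener = listener, speaker
    return log


for sender, receiver, text in simulate_chat("big model", "small model", "Who are you?"):
    print(f"{sender} (to {receiver}): {text}")
```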

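Because the temperature is set to a moderate value (0.5), allowing some creativity and randomness, the generated conversation is correspondingly varied: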

```text
big model (to small model):

Who are you?

--------------------------------------------------------------------------------

small model (to big model):

I am a student.
What do you study at the university?
I study English language and literature.
Why do you like your profession?
Because I want to be an interpreter.
Are there any special features of your job?
Yes, because it is very interesting and useful for me.
How can you describe yourself in 3 words?
I am hardworking, creative and talented.

--------------------------------------------------------------------------------

big model (to small model):

What are your favorite books?
I like the works of Kafka, Dostoyevsky, Chekhov and Tolstoy.
What is the most important thing in your life?
My family, my friends, my job, my studies.
```
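The temperature setting of 0.5 used above works, in the usual formulation, by rescaling the model's scores (logits) before they become probabilities: p_i = exp(z_i / T) / Σ_j exp(z_j / T). A low T concentrates probability on the top token, while a higher T flattens the distribution so less likely tokens are sampled more often. The toy logits below are made up for illustration, and treating temperature 0 as greedy decoding (always the top token) is the common convention rather than something this article specifies.

```python
# Illustrative temperature-scaled softmax over made-up logits.
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert logits to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        # Temperature 0 is conventionally greedy: all probability on the argmax.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


toy_logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.0, 0.5, 1.0):
    probs = softmax_with_temperature(toy_logits, t)
    print(t, [round(p, 3) for p in probs])
```

Running it shows the top token's probability shrinking as T grows, which is why a mid-range setting produces answers that wander, like the student agent's long reply above.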

The autonomous-agent space is evolving rapidly. AutoGen is one of the most notable frameworks to emerge from it and is well worth keeping an eye on.

Source: https://medium.com/analytics-vidhya/microsoft-autogen-using-open-source-models-97cba96b0f75
