Building a Million-Parameter LLM from Scratch Using Python

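In this article, I will build an LLM with only 2.3 million parameters, and, interestingly, we won't need a high-end GPU. We will follow the approach of the LLaMA 1 paper to guide us. We will keep things simple and use a basic dataset, so you can see how easy it is to create your own million-parameter LLM.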

Prerequisites

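Make sure you have a basic understanding of object-oriented programming (OOP) and neural networks (NN). Familiarity with PyTorch will also help when coding. If any of these are new to you, it is worth reading up on them first.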

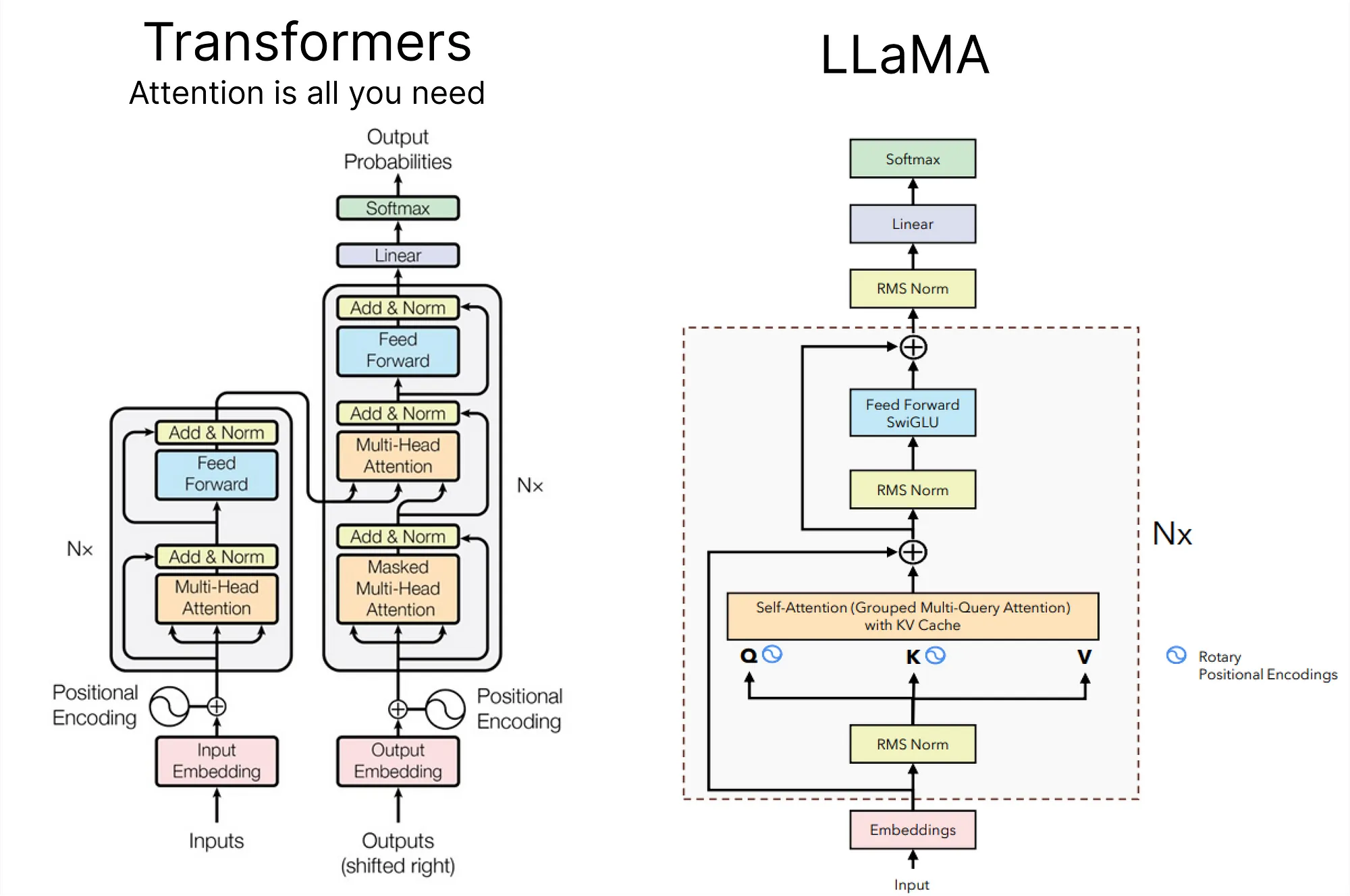

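Before diving into building our own LLM with the LLaMA approach, it is essential to understand LLaMA's architecture. Below is a comparison diagram between the vanilla transformer and LLaMA.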

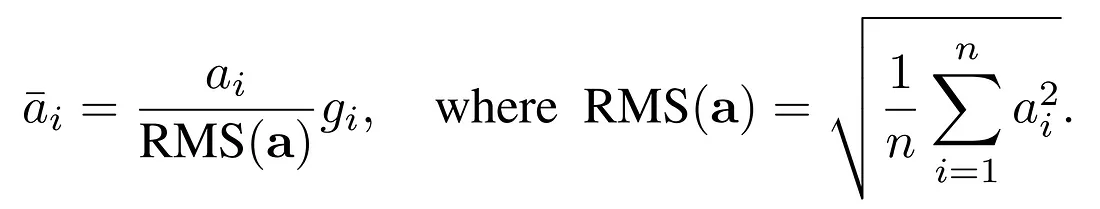

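Pre-normalization using RMSNorm:

Following GPT-3, LLaMA normalizes the input of each transformer sub-layer (pre-normalization) instead of its output, and it uses a technique called RMSNorm for this normalization. RMSNorm is designed to reduce the computational cost of Layer Normalization: it performs comparably to LayerNorm while reducing running time significantly (by 7% to 64%, according to the RMSNorm paper).

It achieves this by keeping the re-scaling invariance of LayerNorm and regularizing the summed inputs according to the root mean square (RMS) statistic alone. The main motivation is to simplify LayerNorm by removing the mean statistic.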
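For reference, RMSNorm rescales a vector $\mathbf{a}$ of $n$ summed inputs by its root mean square and applies a learnable gain $\mathbf{g}$, whereas LayerNorm also subtracts the mean $\mu$, divides by the standard deviation $\sigma$, and usually adds a bias $\mathbf{b}$. Written out:

$$
\mathrm{RMS}(\mathbf{a}) = \sqrt{\frac{1}{n}\sum_{i=1}^{n} a_i^{2}}, \qquad
\bar{a}_i = \frac{a_i}{\mathrm{RMS}(\mathbf{a})}\, g_i
$$

$$
\text{LayerNorm: } \bar{a}_i = \frac{a_i - \mu}{\sigma}\, g_i + b_i, \qquad
\mu = \frac{1}{n}\sum_{i=1}^{n} a_i, \qquad
\sigma = \sqrt{\frac{1}{n}\sum_{i=1}^{n} (a_i - \mu)^2}
$$

Dropping $\mu$ removes one reduction over the vector. In practice, implementations usually add a small $\epsilon$ inside the square root (or to the denominator) for numerical stability.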

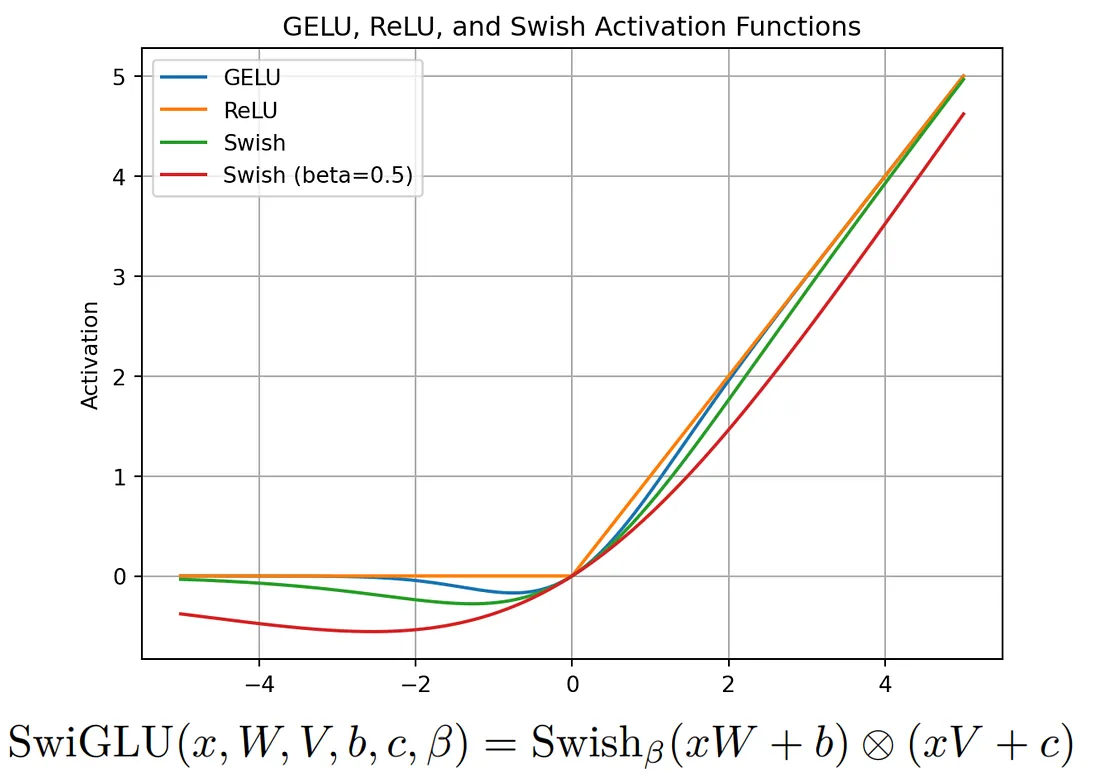

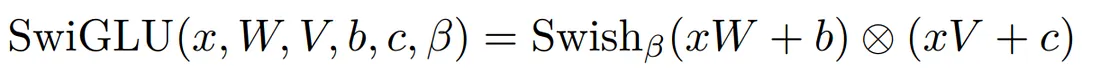

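SwiGLU activation function:

LLaMA replaces the ReLU non-linearity with the SwiGLU activation function, following PaLM. To understand SwiGLU, you first need to understand the Swish activation function. SwiGLU builds on Swish as a gated linear unit: the input goes through two linear (dense) projections, one of them is passed through Swish to act as a gate, and the two results are multiplied element-wise.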
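Written as equations, in the GLU-variants formulation that SwiGLU comes from, with $\sigma$ the logistic sigmoid, $\otimes$ element-wise multiplication, and $W$, $V$, $W_2$, $b$, $c$ learned parameters:

$$
\mathrm{Swish}_\beta(x) = x \, \sigma(\beta x)
$$

$$
\mathrm{SwiGLU}(x) = \mathrm{Swish}_\beta(xW + b) \otimes (xV + c)
$$

$$
\mathrm{FFN}_{\mathrm{SwiGLU}}(x) = \left(\mathrm{Swish}_1(xW) \otimes xV\right) W_2
$$

In a transformer feed-forward block, the biases are typically dropped and $\beta = 1$, which gives the last line.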

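Rotary embeddings (RoPE):

Rotary embeddings, or RoPE, are the type of positional embedding used in LLaMA. RoPE encodes absolute positional information with a rotation matrix and naturally incorporates explicit relative position dependency into the self-attention formulation. RoPE offers several advantages, such as the flexibility to extend to different sequence lengths and an inter-token dependency that decays as the relative distance increases.

This is achieved by multiplying the query and key vectors by position-dependent rotation matrices. As a result, their dot product depends only on the relative distance between the two tokens and decays as that distance grows, which is a desirable property for encoding natural language.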
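The relative-position property is easiest to see on a single 2-D pair. Rotate a query by its position and a key by its position, and the dot product depends only on the offset between them. The sketch below assumes one arbitrary rotation frequency `theta = 0.5`; real RoPE uses a different frequency for each pair of dimensions. The helper `rotate_2d` is hypothetical:

```python
import math

import torch


def rotate_2d(vec: torch.Tensor, position: int, theta: float = 0.5) -> torch.Tensor:
    """Rotate a 2-D vector by the angle position * theta (one RoPE frequency pair)."""
    angle = position * theta
    rot = torch.tensor([[math.cos(angle), -math.sin(angle)],
                        [math.sin(angle), math.cos(angle)]])
    return rot @ vec


q = torch.tensor([1.0, 2.0])   # toy query vector
k = torch.tensor([0.5, -1.0])  # toy key vector

# Same relative offset (3) at two different absolute positions
score_a = torch.dot(rotate_2d(q, 5), rotate_2d(k, 2))
score_b = torch.dot(rotate_2d(q, 10), rotate_2d(k, 7))
print(score_a.item(), score_b.item())  # equal up to floating-point error
```

Because each rotation preserves vector length, the rotation only changes the angle between query and key. That angle depends on the difference of their positions.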

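Besides these concepts, the LLaMA paper also introduces other important techniques. These include the AdamW optimizer with specific hyperparameters, an efficient implementation of the causal multi-head attention operator available in the xformers library, and a manually implemented backward function for the transformer layers that reduces computation during backpropagation.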

Preparation

We will use a number of Python libraries in this project, so let's import them:

```python
# PyTorch for implementing the LLM (no GPU needed)
import torch
# Neural network modules and functions from PyTorch
from torch import nn
from torch.nn import functional as F
# NumPy for numerical operations
import numpy as np
# Matplotlib for plotting the loss, etc.
from matplotlib import pyplot as plt
# time module for tracking execution time
import time
# pandas for data manipulation and analysis
import pandas as pd
# urllib for handling URL requests (downloading the dataset)
import urllib.request
```

In addition, I am creating a configuration object to store the model parameters.

```python
# Configuration object for model parameters
MASTER_CONFIG = {
    # Parameters will be added later
}
```

This keeps things flexible and lets us add more parameters later as needed.

Data preprocessing

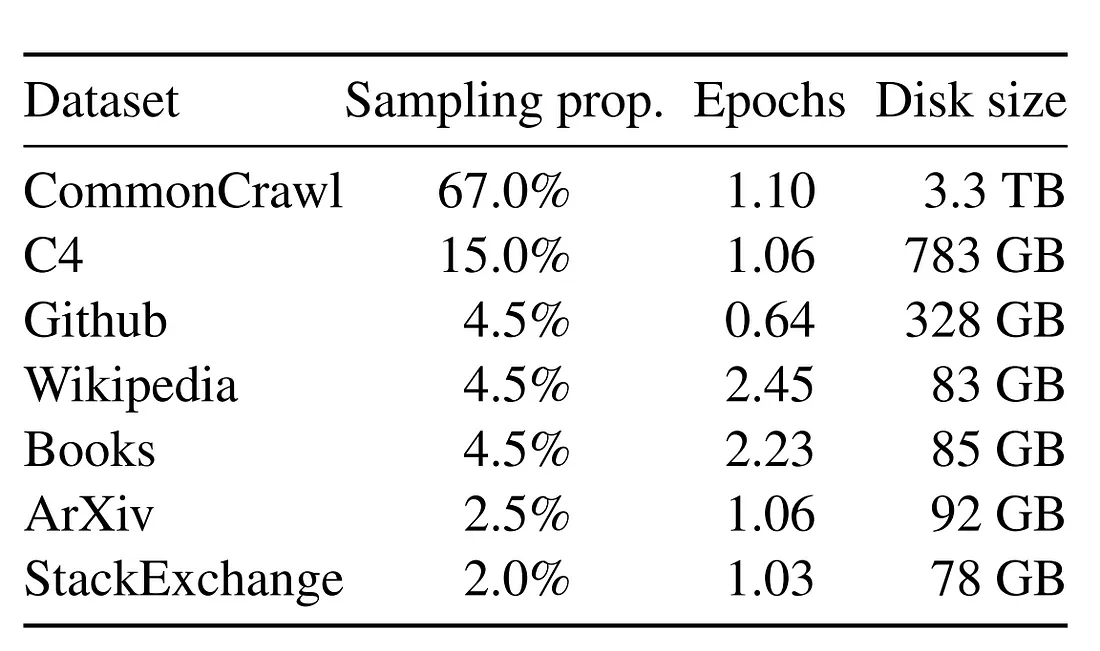

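In the original LLaMA paper, a variety of open-source datasets were used to train and evaluate the model.

Unfortunately, using such large datasets is impractical for a small project. So for our implementation we will take a more modest approach and build a heavily scaled-down version of LLaMA.

Since we don't have access to vast amounts of data, we will focus on training a simplified version of LLaMA on the TinyShakespeare dataset.

LLaMA was trained on a large dataset of 1.4 trillion tokens, whereas our TinyShakespeare dataset contains about 1 million characters.

First, let's get our dataset by downloading it: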

```python
# The URL of the raw text file on GitHub
url = "https://raw.githubusercontent.com/karpathy/char-rnn/master/data/tinyshakespeare/input.txt"
# The file name for local storage
file_name = "tinyshakespeare.txt"
# Execute the download
urllib.request.urlretrieve(url, file_name)
```

This Python script fetches the TinyShakespeare dataset from the specified URL and saves it locally as "tinyshakespeare.txt".

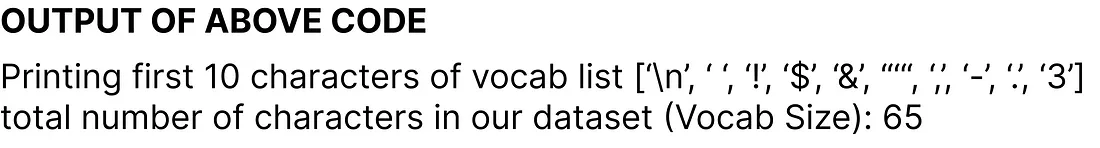

Next, let's determine the vocabulary size, which is the number of unique characters in our dataset. Here is the code snippet:

```python
# Read the content of the dataset
with open("tinyshakespeare.txt", 'r') as f:
    lines = f.read()
# Create a sorted list of unique characters in the dataset
vocab = sorted(list(set(lines)))
# Display the first 10 characters in the vocabulary list
print('Printing the first 10 characters of the vocab list:', vocab[:10])
# Output the total number of characters in our dataset (Vocabulary Size)
print('Total number of characters in our dataset (Vocabulary Size):', len(vocab))
```

Now we create the mappings from integers to characters (itos) and from characters to integers (stoi). Here is the code:

```python
# Mapping integers to characters (itos)
itos = {i: ch for i, ch in enumerate(vocab)}
# Mapping characters to integers (stoi)
stoi = {ch: i for i, ch in enumerate(vocab)}
```

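The original LLaMA paper used Google's SentencePiece byte-pair encoding (BPE) tokenizer. For simplicity, we will use a basic character-level tokenizer instead. Let's create encode and decode functions, which we will later apply to our dataset: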

```python
# Encode function: Converts a string to a list of integers using the mapping stoi
def encode(s):
    return [stoi[ch] for ch in s]

# Decode function: Converts a list of integers back to a string using the mapping itos
def decode(l):
    return ''.join([itos[i] for i in l])

# Example: Encode the string "morning" and then decode the result
decode(encode("morning"))
```

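The last line outputs "morning", which confirms that the encode and decode functions work correctly.

Next, we convert our dataset into a torch tensor with a specified data type so that we can work with it further in PyTorch.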

```python
# Convert the dataset into a torch tensor with specified data type (dtype)
# (int8 is enough here because all 65 character ids are below 128)
dataset = torch.tensor(encode(lines), dtype=torch.int8)
# Display the shape of the resulting tensor
print(dataset.shape)
```

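The output torch.Size([1115394]) shows that our dataset contains about one million tokens (characters, with our character-level tokenizer). This is far smaller than the LLaMA dataset, which contains 1.4 trillion tokens.

Next, we will create a function that splits our dataset into training, validation, and test sets. This split is essential for developing and evaluating models in any machine learning or deep learning project, and the same principle applies when replicating a large language model (LLM) approach.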

```python
# Function to get batches for training, validation, or testing
def get_batches(data, split, batch_size, context_window, config=MASTER_CONFIG):
    # Split the dataset into training, validation, and test sets
    train = data[:int(.8 * len(data))]
    val = data[int(.8 * len(data)): int(.9 * len(data))]
    test = data[int(.9 * len(data)):]

    # Determine which split to use
    batch_data = train
    if split == 'val':
        batch_data = val
    if split == 'test':
        batch_data = test

    # Pick random starting points within the data
    ix = torch.randint(0, batch_data.size(0) - context_window - 1, (batch_size,))

    # Create input sequences (x) and corresponding target sequences (y)
    x = torch.stack([batch_data[i:i+context_window] for i in ix]).long()
    y = torch.stack([batch_data[i+1:i+context_window+1] for i in ix]).long()

    return x, y
```

Now that our splitting function is defined, let's set two key parameters for this process:

```python
# Update the MASTER_CONFIG with batch_size and context_window parameters
MASTER_CONFIG.update({
    'batch_size': 8,       # Number of sequences sampled in each batch
    'context_window': 16   # Number of characters in each input (x) and target (y) sequence
})
```

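batch_size determines how many sequences are sampled at random for each batch, and context_window specifies the number of characters in each input (x) and target (y) sequence of the batch.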
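To make the shapes concrete, here is a small sketch of the same slicing that get_batches performs. It uses a hypothetical toy sequence of 20 token ids and fixed start offsets instead of random ones. The targets are the inputs shifted one position to the right, so every position in x is trained to predict the next character:

```python
import torch

# Hypothetical toy "dataset" of 20 token ids (stands in for the encoded text)
data = torch.arange(20)
context_window = 4
starts = torch.tensor([0, 7])  # fixed starting offsets instead of random ones

x = torch.stack([data[i:i + context_window] for i in starts])
y = torch.stack([data[i + 1:i + context_window + 1] for i in starts])

print(x.shape)                       # torch.Size([2, 4]) -> (batch_size, context_window)
print(x[0].tolist(), y[0].tolist())  # [0, 1, 2, 3] [1, 2, 3, 4]
```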

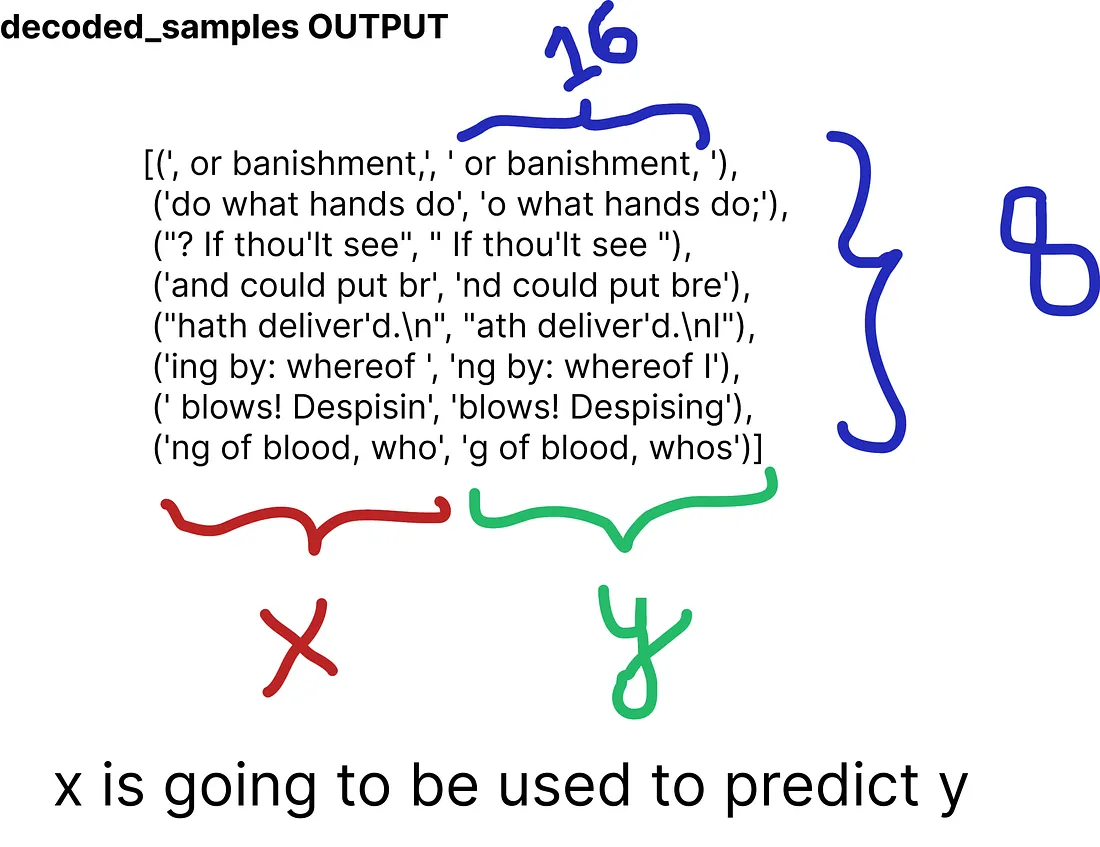

Let's print a random sample from the training split, using a batch size of 8 and a context window of 16 characters:

```python
# Obtain batches for training using the specified batch size and context window
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Decode the sequences to obtain the corresponding text representations
decoded_samples = [(decode(xs[i].tolist()), decode(ys[i].tolist())) for i in range(len(xs))]
# Print the random sample
print(decoded_samples)
```

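Evaluation strategy

Now we are ready to create a function dedicated to evaluating our self-built LLaMA architecture. We define it before the actual model so that we can evaluate the model continuously during training.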

```python
@torch.no_grad()  # Don't compute gradients for this function
def evaluate_loss(model, config=MASTER_CONFIG):
    # Placeholder for the evaluation results
    out = {}
    # Set the model to evaluation mode
    model.eval()
    # Iterate through training and validation splits
    for split in ["train", "val"]:
        # Placeholder for individual losses
        losses = []
        # Generate 10 batches for evaluation
        for _ in range(10):
            # Get input sequences (xb) and target sequences (yb)
            xb, yb = get_batches(dataset, split, config['batch_size'], config['context_window'])
            # Perform model inference and calculate the loss
            _, loss = model(xb, yb)
            # Append the loss to the list
            losses.append(loss.item())
        # Calculate the mean loss for the split and store it in the output dictionary
        out[split] = np.mean(losses)
    # Set the model back to training mode
    model.train()
    return out
```

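We use the loss as our metric to assess the model's performance during training. The function iterates over the training and validation splits and computes the mean loss over 10 batches for each split. It then switches the model back to training mode with model.train() and returns the results.

Setting up a base neural network model

We are building a basic neural network, which we will later improve with LLaMA techniques.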

```python
# Definition of a basic neural network class
class SimpleBrokenModel(nn.Module):
    def __init__(self, config=MASTER_CONFIG):
        super().__init__()
        self.config = config
        # Embedding layer to convert character indices to vectors (vocab size: 65)
        self.embedding = nn.Embedding(config['vocab_size'], config['d_model'])
        # Linear layers for modeling relationships between features
        # (to be updated with SwiGLU activation function as in LLaMA)
        self.linear = nn.Sequential(
            nn.Linear(config['d_model'], config['d_model']),
            nn.ReLU(),  # Currently using ReLU, will be replaced with SwiGLU as in LLaMA
            nn.Linear(config['d_model'], config['vocab_size']),
        )
        # Print the total number of model parameters
        print("Model parameters:", sum([m.numel() for m in self.parameters()]))
```

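In the current architecture, the embedding layer has a vocabulary size of 65, covering the characters in our dataset. Since this is our base model, we use ReLU as the activation function between the linear layers. It will later be replaced by SwiGLU, as used in LLaMA.

To create a forward pass for our base model, we need to define a forward function in our neural network class.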

```python
# Definition of a basic neural network class
class SimpleBrokenModel(nn.Module):
    def __init__(self, config=MASTER_CONFIG):
        # Rest of the __init__ code (shown above, including the parameter-count print)
        ...

    # Forward pass function for the base model
    def forward(self, idx, targets=None):
        # Embedding layer converts character indices to vectors
        x = self.embedding(idx)
        # Linear layers for modeling relationships between features
        a = self.linear(x)
        # Apply softmax activation to obtain a probability distribution
        logits = F.softmax(a, dim=-1)
        # If targets are provided, calculate and return the cross-entropy loss
        if targets is not None:
            # Reshape logits and targets for the cross-entropy calculation
            loss = F.cross_entropy(logits.view(-1, self.config['vocab_size']), targets.view(-1))
            return logits, loss
        # If targets are not provided, return only the logits
        else:
            return logits
```

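This forward function takes character indices (idx) as input, applies the embedding layer, passes the result through the linear layers, and applies softmax to obtain a probability distribution. The result is stored in the variable logits, although after softmax these values are really probabilities. If targets are provided, the function computes the cross-entropy loss and returns both the logits and the loss; otherwise, it returns only the logits.

To instantiate the model, we call the class directly and print the total number of parameters in our simple neural network. We set the dimension of our linear layers to 128 in the configuration object. The vocabulary size must also be stored there, because the model reads config['vocab_size'].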

```python
# Update MASTER_CONFIG with the vocabulary size and the dimension of the linear layers (128)
MASTER_CONFIG.update({
    'vocab_size': len(vocab),  # 65 unique characters
    'd_model': 128,
})
# Instantiate the SimpleBrokenModel using the updated MASTER_CONFIG
model = SimpleBrokenModel(MASTER_CONFIG)
# Print the total number of parameters in the model
print("Total number of parameters in the Simple Neural Network Model:", sum([m.numel() for m in model.parameters()]))
```

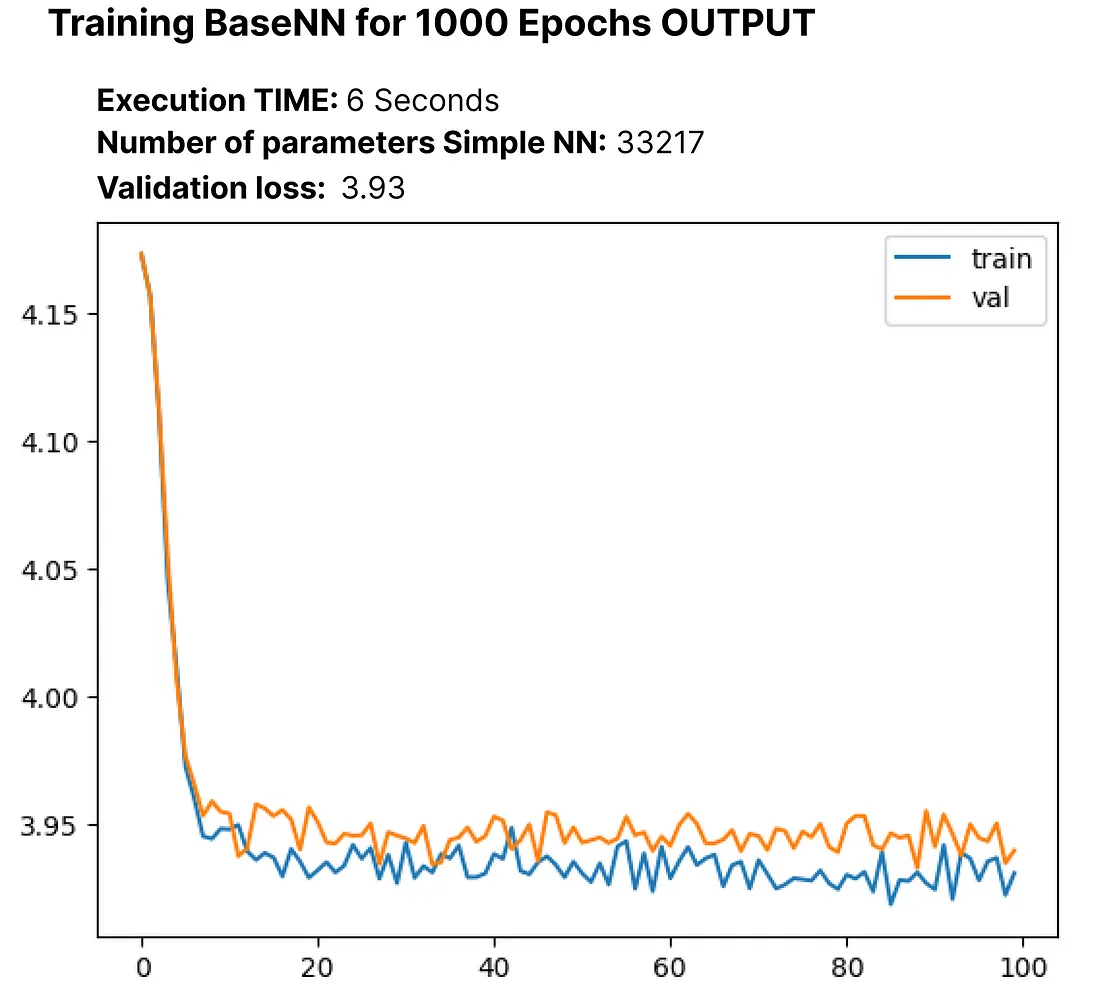

Our simple neural network model has about 33,000 parameters.
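You can check this count by hand, assuming vocab_size = 65 and d_model = 128. The embedding has 65 × 128 weights, and each nn.Linear layer has (in × out) weights plus out biases. The sketch below rebuilds the three layers standalone, purely for counting:

```python
from torch import nn

vocab_size, d_model = 65, 128  # values used in this article

# Same layers as SimpleBrokenModel, rebuilt standalone for counting
embedding = nn.Embedding(vocab_size, d_model)  # 65 * 128 = 8,320
hidden = nn.Linear(d_model, d_model)           # 128 * 128 + 128 = 16,512
output = nn.Linear(d_model, vocab_size)        # 128 * 65 + 65 = 8,385

total = sum(p.numel() for m in (embedding, hidden, output) for p in m.parameters())
print(total)  # 33217
```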

Similarly, to compute the logits and loss, we simply feed a batch from our split dataset into the model:

```python
# Obtain batches for training using the specified batch size and context window
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Calculate logits and loss using the model
logits, loss = model(xs, ys)
```

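To train our base model and record its performance, we need to specify a few parameters. We train for a total of 1000 epochs. In this code, an "epoch" is a single optimization step on one random batch, not a full pass over the dataset. We increase the batch size from 8 to 32 and set log_interval to 10, which means the code prints or logs training progress every 10 steps. For optimization, we use the Adam optimizer.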

```python
# Update MASTER_CONFIG with training parameters
MASTER_CONFIG.update({
    'epochs': 1000,      # Number of training epochs
    'log_interval': 10,  # Log information every 10 batches during training
    'batch_size': 32,    # Increase batch size to 32
})
# Instantiate the SimpleBrokenModel with updated configuration
model = SimpleBrokenModel(MASTER_CONFIG)
# Define the Adam optimizer for model parameters
optimizer = torch.optim.Adam(
    model.parameters(),  # Pass the model parameters to the optimizer
)
```

Let's run the training process and record the loss of our base model, along with its total number of parameters.

```python
# Function to perform training
def train(model, optimizer, scheduler=None, config=MASTER_CONFIG, print_logs=False):
    # Placeholder for storing losses
    losses = []
    # Start tracking time
    start_time = time.time()
    # Iterate through epochs (one random batch per epoch)
    for epoch in range(config['epochs']):
        # Zero out gradients
        optimizer.zero_grad()
        # Obtain batches for training
        xs, ys = get_batches(dataset, 'train', config['batch_size'], config['context_window'])
        # Forward pass through the model to calculate logits and loss
        logits, loss = model(xs, targets=ys)
        # Backward pass and optimization step
        loss.backward()
        optimizer.step()
        # If a learning rate scheduler is provided, adjust the learning rate
        if scheduler:
            scheduler.step()
        # Log progress every specified interval
        if epoch % config['log_interval'] == 0:
            # Calculate batch time
            batch_time = time.time() - start_time
            # Evaluate the loss on the training and validation splits
            x = evaluate_loss(model, config)
            # Store the losses
            losses += [x]
            # Print progress logs if specified
            if print_logs:
                print(f"Epoch {epoch} | val loss {x['val']:.3f} | Time {batch_time:.3f} | ETA in seconds {batch_time * (config['epochs'] - epoch)/config['log_interval'] :.3f}")
            # Reset the timer
            start_time = time.time()
            # Print the learning rate if a scheduler is provided
            if scheduler:
                print("lr: ", scheduler.get_last_lr())
    # Print the final validation loss
    print("Validation loss: ", losses[-1]['val'])
    # Plot the training and validation loss curves
    return pd.DataFrame(losses).plot()

# Execute the training process
train(model, optimizer)
```

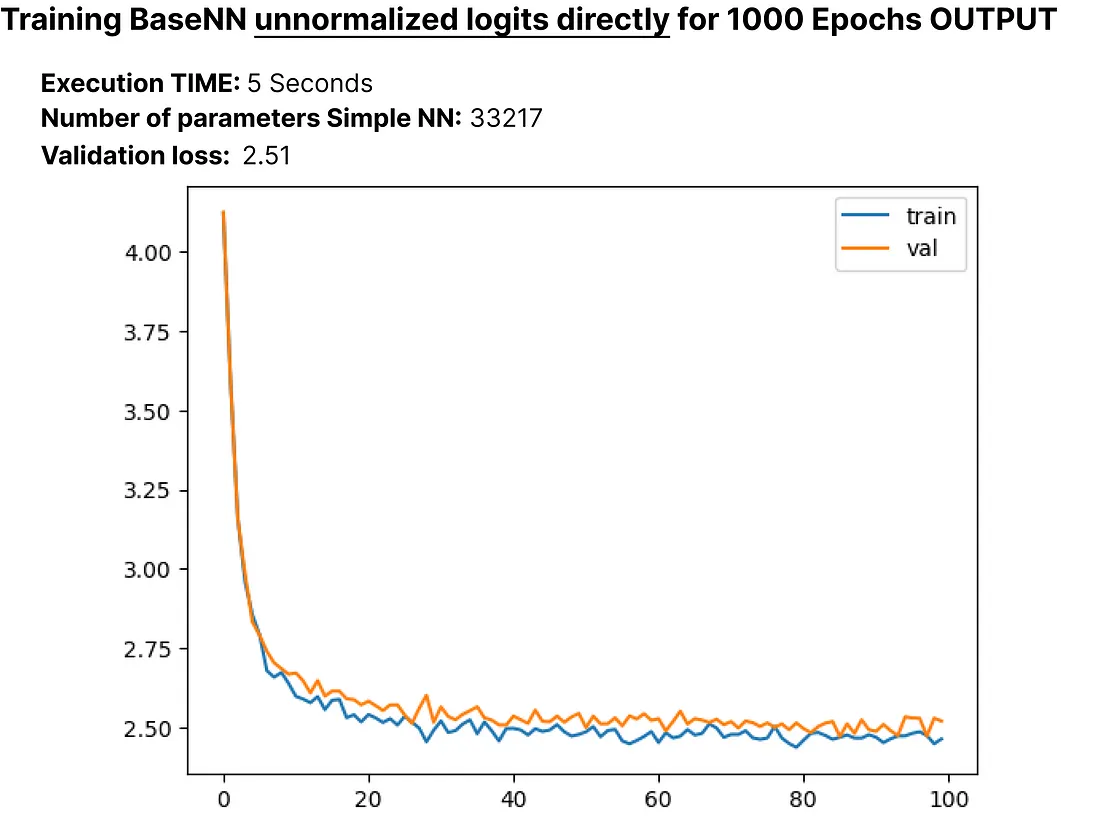

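Before training, the initial cross-entropy loss is 4.17; after 1000 epochs it drops to 3.93. Here, cross-entropy is the average negative log-probability the model assigns to the correct next character, so lower values mean the model is less often surprised by the true character.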
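The starting value makes a useful sanity check. With 65 possible characters, a model that spreads its probability uniformly assigns 1/65 to the correct character, so its cross-entropy is ln 65 ≈ 4.17, which matches the initial loss reported above. A quick check in Python:

```python
import math

vocab_size = 65  # number of distinct characters in TinyShakespeare
# A uniform prediction assigns p = 1/65 to the correct character,
# so the cross-entropy is -ln(1/65) = ln(65).
uniform_loss = -math.log(1 / vocab_size)
print(round(uniform_loss, 2))  # 4.17
```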

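Our model includes a softmax layer that turns the output scores into a probability distribution. However, the built-in F.cross_entropy function applies log-softmax internally and expects the raw, unnormalized logits, so applying softmax beforehand is a bug. We will modify our model accordingly.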
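The following sketch uses random logits as stand-in data to illustrate both points. First, F.cross_entropy on raw logits equals the negative log-likelihood of log_softmax. Second, feeding it already-softmaxed probabilities gives a different value, because softmax is effectively applied twice. The second softmax squashes the outputs toward a uniform distribution, which may help explain why the broken model's loss moved so little from ln 65:

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 65)             # raw scores: 4 positions, 65-character vocab
targets = torch.tensor([3, 10, 0, 64])  # correct next-character ids

# F.cross_entropy applies log-softmax internally ...
ce = F.cross_entropy(logits, targets)
manual = F.nll_loss(F.log_softmax(logits, dim=-1), targets)

# ... so feeding it probabilities applies softmax twice and distorts the loss
double_softmax = F.cross_entropy(F.softmax(logits, dim=-1), targets)
print(ce.item(), manual.item(), double_softmax.item())
```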

```python
# Modified SimpleModel class without softmax layer
class SimpleModel(nn.Module):
    def __init__(self, config):
        # Rest of the code
        ...

    def forward(self, idx, targets=None):
        # Embedding layer converts character indices to vectors
        x = self.embedding(idx)
        # Linear layers for modeling relationships between features
        logits = self.linear(x)
        # If targets are provided, calculate and return the cross-entropy loss
        if targets is not None:
            # Rest of the code
            ...
```

Let's recreate the updated SimpleModel and train it for 1000 epochs to see whether anything changes:

```python
# Create the updated SimpleModel
model = SimpleModel(MASTER_CONFIG)
# Obtain batches for training
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Calculate logits and loss using the model
logits, loss = model(xs, ys)
# Define the Adam optimizer for model parameters
optimizer = torch.optim.Adam(model.parameters())
# Train the model for MASTER_CONFIG['epochs'] (1000) epochs
train(model, optimizer)
```

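With the loss down to 2.51, let's see how a language model with roughly 33,000 parameters generates text at inference time. We will create a generate function, which we will reuse later when replicating LLaMA.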

```python
# Generate function for text generation using the trained model
def generate(model, config=MASTER_CONFIG, max_new_tokens=30):
    # Start 5 sequences from token id 0 (the first character in the sorted vocabulary)
    idx = torch.zeros(5, 1).long()
    for _ in range(max_new_tokens):
        # Call the model on at most the last context_window tokens
        logits = model(idx[:, -config['context_window']:])
        # All 5 sequences in the batch, last time step, all the logits
        last_time_step_logits = logits[:, -1, :]
        # Softmax to get probabilities
        p = F.softmax(last_time_step_logits, dim=-1)
        # Sample from the distribution to get the next token
        idx_next = torch.multinomial(p, num_samples=1)
        # Append to the sequence
        idx = torch.cat([idx, idx_next], dim=-1)
    return [decode(x) for x in idx.tolist()]

# Generate text using the trained model
generate(model)
```

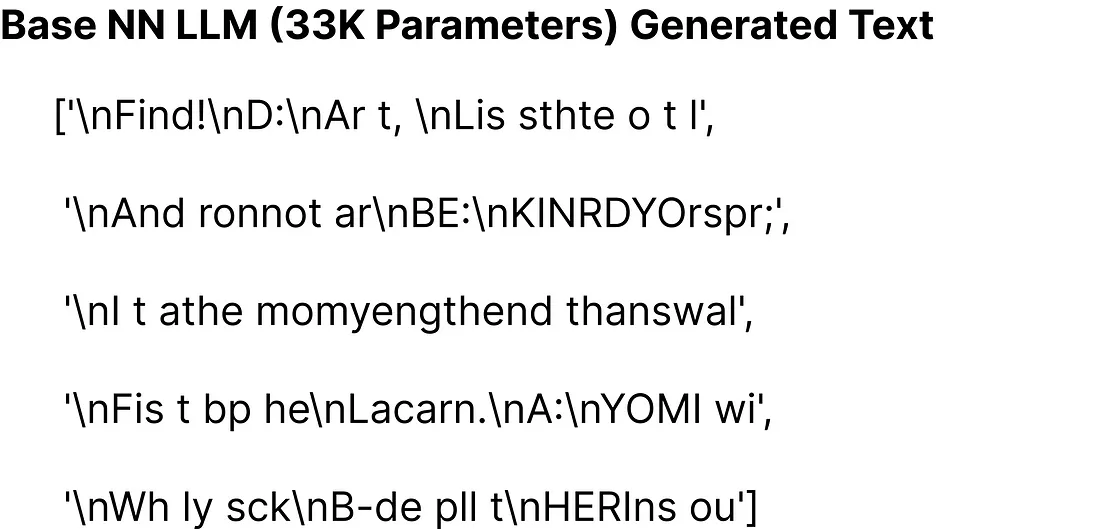

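The text generated by our base model, which has only about 33K parameters, does not look great.

Replicating the LLaMA architecture

Earlier in this article we covered some basic concepts. Now we will integrate them into our base model. LLaMA makes three architectural modifications to the original Transformer: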

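- RMSNorm for pre-normalization

- Rotary embeddings

- SwiGLU activation function

We will add these modifications to our base model one at a time, building on each in turn.

RMSNorm for pre-normalization:

We define an RMSNorm module with the following functionality: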

```python
class RMSNorm(nn.Module):
    def __init__(self, layer_shape, eps=1e-8, bias=False):
        super(RMSNorm, self).__init__()
        # Small constant that guards against division by zero
        self.eps = eps
        # Registering a learnable parameter 'scale' as a parameter of the module
        self.register_parameter("scale", nn.Parameter(torch.ones(layer_shape)))

    def forward(self, x):
        """
        Assumes shape is (batch, seq_len, d_model)
        """
        # Calculating the Frobenius norm, RMS = 1/sqrt(N) * Frobenius norm
        ff_rms = torch.linalg.norm(x, dim=(1, 2)) * x[0].numel() ** -.5
        # Normalizing the input tensor 'x' with respect to RMS
        raw = x / (ff_rms.unsqueeze(-1).unsqueeze(-1) + self.eps)
        # Scaling the normalized tensor using the learnable parameter 'scale'
        return self.scale[:x.shape[1], :].unsqueeze(0) * raw
```

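We define the RMSNorm class. During initialization, it registers a scale parameter. In the forward pass, it computes the Frobenius norm of the input tensor and uses it to normalize the tensor. Finally, the tensor is scaled by the registered scale parameter. This module replaces the LayerNorm operation, as in LLaMA. There is one difference from LLaMA. Here a single RMS is computed over the whole (seq_len, d_model) slice of each sample, whereas LLaMA normalizes each token vector separately over d_model. In this version, a token's normalized value therefore also depends on the other tokens in the window, including later ones.

Now it's time to integrate the first LLaMA concept, RMSNorm, into our simple neural network model. Here is the updated code: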
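For comparison, a per-token RMSNorm closer to LLaMA's normalizes each token vector over d_model only, with a gain of shape (d_model,). The class name TokenRMSNorm and the eps value are assumptions for this sketch, not the article's code. Because each position is normalized independently, changing a later token cannot affect an earlier position's output:

```python
import torch
from torch import nn


class TokenRMSNorm(nn.Module):
    """Hypothetical per-token RMSNorm: normalizes each vector over d_model only."""

    def __init__(self, d_model: int, eps: float = 1e-6):
        super().__init__()
        self.eps = eps
        self.scale = nn.Parameter(torch.ones(d_model))  # learnable gain g

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, seq_len, d_model); the RMS is taken over the last dimension only
        rms = torch.sqrt(x.pow(2).mean(dim=-1, keepdim=True) + self.eps)
        return self.scale * (x / rms)


torch.manual_seed(0)
norm = TokenRMSNorm(128)
x = torch.randn(2, 16, 128)
y = norm(x)
print(y.pow(2).mean(dim=-1).sqrt()[0, :3])  # each token now has RMS close to 1

# Perturbing the last position leaves the first position's output unchanged
x2 = x.clone()
x2[:, -1] += 5.0
print(torch.allclose(norm(x2)[:, 0], y[:, 0]))  # True
```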

```python
# Define the SimpleModel_RMS with RMSNorm
class SimpleModel_RMS(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # Embedding layer to convert character indices to vectors
        self.embedding = nn.Embedding(config['vocab_size'], config['d_model'])
        # RMSNorm layer for pre-normalization
        self.rms = RMSNorm((config['context_window'], config['d_model']))
        # Linear layers for modeling relationships between features
        self.linear = nn.Sequential(
            # Rest of the code
            ...
        )
        # Print the total number of model parameters
        print("Model parameters:", sum([m.numel() for m in self.parameters()]))

    def forward(self, idx, targets=None):
        # Embedding layer converts character indices to vectors
        x = self.embedding(idx)
        # RMSNorm pre-normalization
        x = self.rms(x)
        # Linear layers for modeling relationships between features
        logits = self.linear(x)
        if targets is not None:
            # Rest of the code
            ...
```

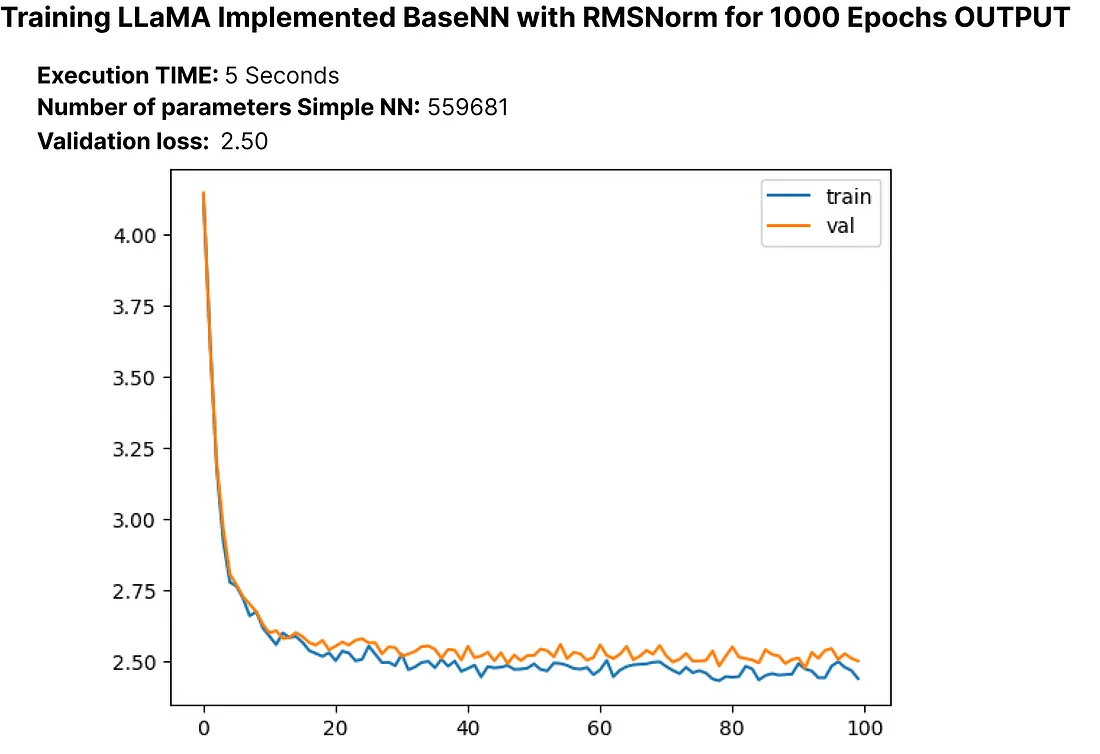

"让我们执行修改后的NN模型,使用RMSNorm,并观察模型中更新后的参数数量以及损失:"

```python
# Create an instance of SimpleModel_RMS
model = SimpleModel_RMS(MASTER_CONFIG)
# Obtain batches for training
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Calculate logits and loss using the model
logits, loss = model(xs, ys)
# Define the Adam optimizer for model parameters
optimizer = torch.optim.Adam(model.parameters())
# Train the model
train(model, optimizer)
```

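The validation loss decreases slightly. Counting the layers defined above, the updated model has about 35,000 parameters: the 33,217 of the base model plus 16 × 128 = 2,048 for the RMSNorm scale.

Rotary embeddings:

Next, we will implement rotary positional embeddings. In RoPE, the authors encode a token's position in the sequence by rotating its embedding, applying a different rotation at each position. Let's create a function that mimics the implementation in the RoPE paper: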

```python
def get_rotary_matrix(context_window, embedding_dim):
    # Initialize a tensor for the rotary matrix with zeros
    R = torch.zeros((context_window, embedding_dim, embedding_dim), requires_grad=False)
    # Loop through each position in the context window
    for position in range(context_window):
        # Loop through each pair of dimensions in the embedding
        for i in range(embedding_dim // 2):
            # Rotation frequency theta_i = 10000^(-2i/d); i is 0-indexed here,
            # so the paper's (i - 1) for 1-indexed i becomes i
            theta = 10000. ** (-2. * i / embedding_dim)
            # Calculate the rotated matrix elements using sine and cosine functions
            m_theta = position * theta
            R[position, 2 * i, 2 * i] = np.cos(m_theta)
            R[position, 2 * i, 2 * i + 1] = -np.sin(m_theta)
            R[position, 2 * i + 1, 2 * i] = np.sin(m_theta)
            R[position, 2 * i + 1, 2 * i + 1] = np.cos(m_theta)
    return R
```

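This builds one rotation matrix per position from the specified context window and embedding dimension, following the RoPE implementation proposed in the paper.

The transformer architecture is built around attention heads, so replicating LLaMA also requires attention heads. First, let's create a single masked attention head that uses the get_rotary_matrix function we just developed for rotary embeddings.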
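The loop above builds one block-diagonal rotation matrix per position. A vectorized sketch of the same construction lets us check two properties the attention head relies on. This sketch assumes the 0-indexed frequencies θ_i = 10000^(-2i/d) used in the corrected loop. First, each R[m] is orthogonal, so it preserves vector norms. Second, with the row-vector convention q @ R[m] used in the attention head, the score between positions m and n depends only on m - n. The function name rotary_matrix_vectorized is hypothetical:

```python
import torch


def rotary_matrix_vectorized(context_window: int, d: int, base: float = 10000.0) -> torch.Tensor:
    """Block-diagonal RoPE rotation matrices, one (d x d) matrix per position.

    Uses theta_i = base ** (-2 * i / d) for i = 0 .. d/2 - 1 (0-indexed).
    """
    i = torch.arange(d // 2, dtype=torch.float64)
    theta = base ** (-2.0 * i / d)                                   # (d/2,)
    pos = torch.arange(context_window, dtype=torch.float64)[:, None]
    angles = pos * theta                                             # (context_window, d/2)
    cos, sin = torch.cos(angles), torch.sin(angles)
    R = torch.zeros(context_window, d, d, dtype=torch.float64)
    idx = torch.arange(d // 2)
    R[:, 2 * idx, 2 * idx] = cos
    R[:, 2 * idx, 2 * idx + 1] = -sin
    R[:, 2 * idx + 1, 2 * idx] = sin
    R[:, 2 * idx + 1, 2 * idx + 1] = cos
    return R.float()


torch.manual_seed(0)
R = rotary_matrix_vectorized(16, 8)

# Each R[m] is orthogonal: rotating does not change vector norms
print(torch.allclose(R[3] @ R[3].T, torch.eye(8), atol=1e-5))  # True

# Relative-position property with the row-vector convention q @ R[m]
q, k = torch.randn(8), torch.randn(8)
s1 = (q @ R[5]) @ (k @ R[2])  # positions 5 and 2, offset 3
s2 = (q @ R[9]) @ (k @ R[6])  # positions 9 and 6, offset 3
print(torch.allclose(s1, s2, atol=1e-4))  # True
```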

```python
class RoPEMaskedAttentionHead(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # Linear transformation for query
        self.w_q = nn.Linear(config['d_model'], config['d_model'], bias=False)
        # Linear transformation for key
        self.w_k = nn.Linear(config['d_model'], config['d_model'], bias=False)
        # Linear transformation for value
        self.w_v = nn.Linear(config['d_model'], config['d_model'], bias=False)
        # Obtain the rotary matrix for positional embeddings
        # (uses the module-level get_rotary_matrix function defined above)
        self.R = get_rotary_matrix(config['context_window'], config['d_model'])

    def forward(self, x, return_attn_weights=False):
        # x: input tensor of shape (batch, sequence length, dimension)
        b, m, d = x.shape  # batch size, sequence length, dimension
        # Linear transformations for Q, K, and V
        q = self.w_q(x)
        k = self.w_k(x)
        v = self.w_v(x)
        # Rotate Q and K using the RoPE matrix of each position
        q_rotated = (torch.bmm(q.transpose(0, 1), self.R[:m])).transpose(0, 1)
        k_rotated = (torch.bmm(k.transpose(0, 1), self.R[:m])).transpose(0, 1)
        # Perform causal scaled dot-product attention (dropout only while training)
        activations = F.scaled_dot_product_attention(
            q_rotated, k_rotated, v, dropout_p=0.1 if self.training else 0.0, is_causal=True
        )
        if return_attn_weights:
            # Create a causal attention mask (1 = allowed, 0 = future position)
            attn_mask = torch.tril(torch.ones((m, m)), diagonal=0)
            # Calculate attention scores and block attention to future positions
            attn_weights = torch.bmm(q_rotated, k_rotated.transpose(1, 2)) / np.sqrt(d)
            attn_weights = attn_weights.masked_fill(attn_mask == 0, float('-inf'))
            attn_weights = F.softmax(attn_weights, dim=-1)
            return activations, attn_weights
        return activations
```

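Now that we have a single masked attention head that can also return its attention weights, the next step is to build a multi-head attention mechanism.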

```python
class RoPEMaskedMultiheadAttention(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # Create a list of RoPEMaskedAttentionHead instances as attention heads
        self.heads = nn.ModuleList([
            RoPEMaskedAttentionHead(config) for _ in range(config['n_heads'])
        ])
        self.linear = nn.Linear(config['n_heads'] * config['d_model'], config['d_model'])  # Linear layer after concatenating heads
        self.dropout = nn.Dropout(.1)  # Dropout layer

    def forward(self, x):
        # x: input tensor of shape (batch, sequence length, dimension)
        # Process each attention head and concatenate the results
        heads = [h(x) for h in self.heads]
        x = torch.cat(heads, dim=-1)
        # Apply linear transformation to the concatenated output
        x = self.linear(x)
        # Apply dropout
        x = self.dropout(x)
        return x
```

The original paper uses 32 heads in its smallest variant (7B), but given our constraints we will use 8 heads.

```python
# Update the master configuration with the number of attention heads
MASTER_CONFIG.update({
    'n_heads': 8,
})
```

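Now that we have implemented rotary embeddings and multi-head attention, let's rewrite our RMSNorm neural network model with the updated code. We will test its performance, compute the loss, and check the number of parameters. We'll call this updated model "RopeModel".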

```python
class RopeModel(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # Embedding layer for input tokens
        self.embedding = nn.Embedding(config['vocab_size'], config['d_model'])
        # RMSNorm layer for pre-normalization
        self.rms = RMSNorm((config['context_window'], config['d_model']))
        # RoPEMaskedMultiheadAttention layer
        self.rope_attention = RoPEMaskedMultiheadAttention(config)
        # Linear layer followed by ReLU activation
        self.linear = nn.Sequential(
            nn.Linear(config['d_model'], config['d_model']),
            nn.ReLU(),
        )
        # Final linear layer for prediction
        self.last_linear = nn.Linear(config['d_model'], config['vocab_size'])
        print("model params:", sum([m.numel() for m in self.parameters()]))

    def forward(self, idx, targets=None):
        # idx: input indices
        x = self.embedding(idx)
        # One block of attention
        x = self.rms(x)  # RMS pre-normalization
        x = x + self.rope_attention(x)
        x = self.rms(x)  # RMS pre-normalization
        x = x + self.linear(x)
        logits = self.last_linear(x)
        if targets is not None:
            loss = F.cross_entropy(logits.view(-1, self.config['vocab_size']), targets.view(-1))
            return logits, loss
        else:
            return logits
```

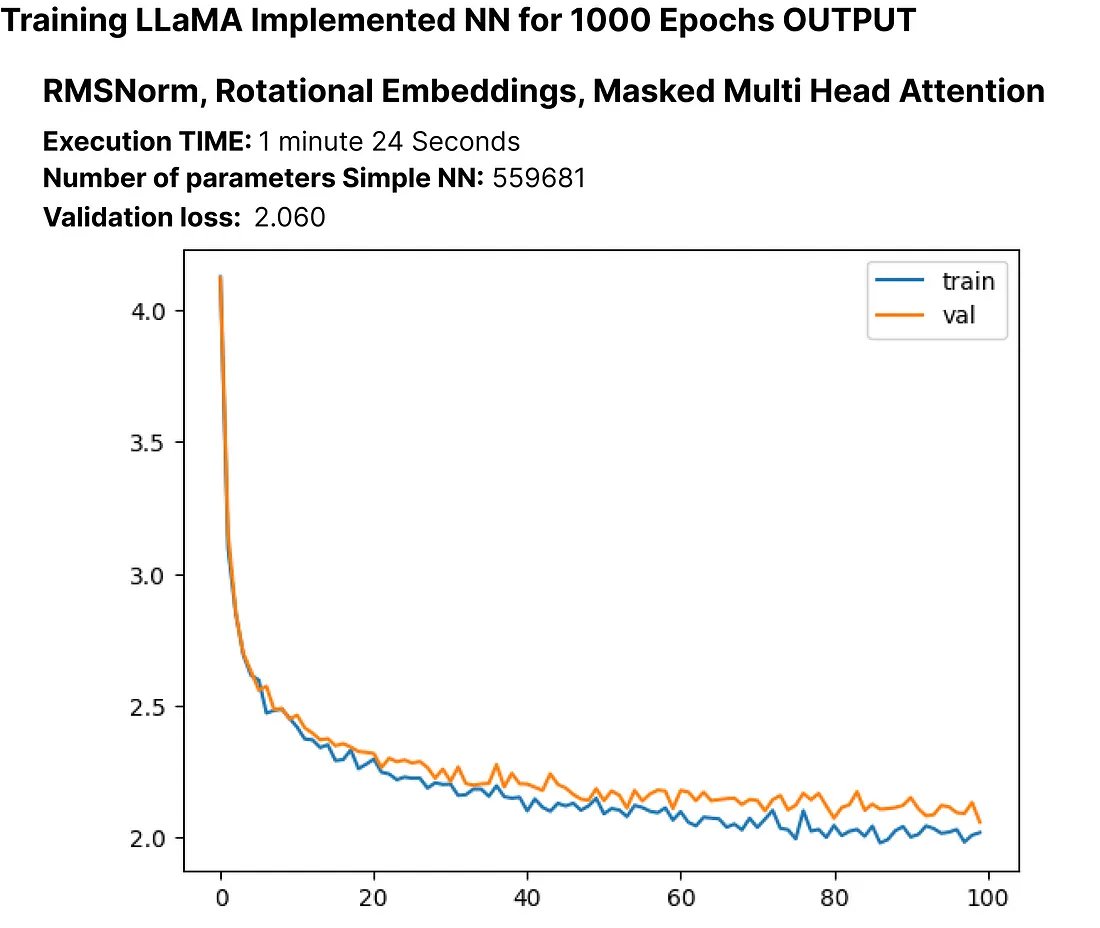

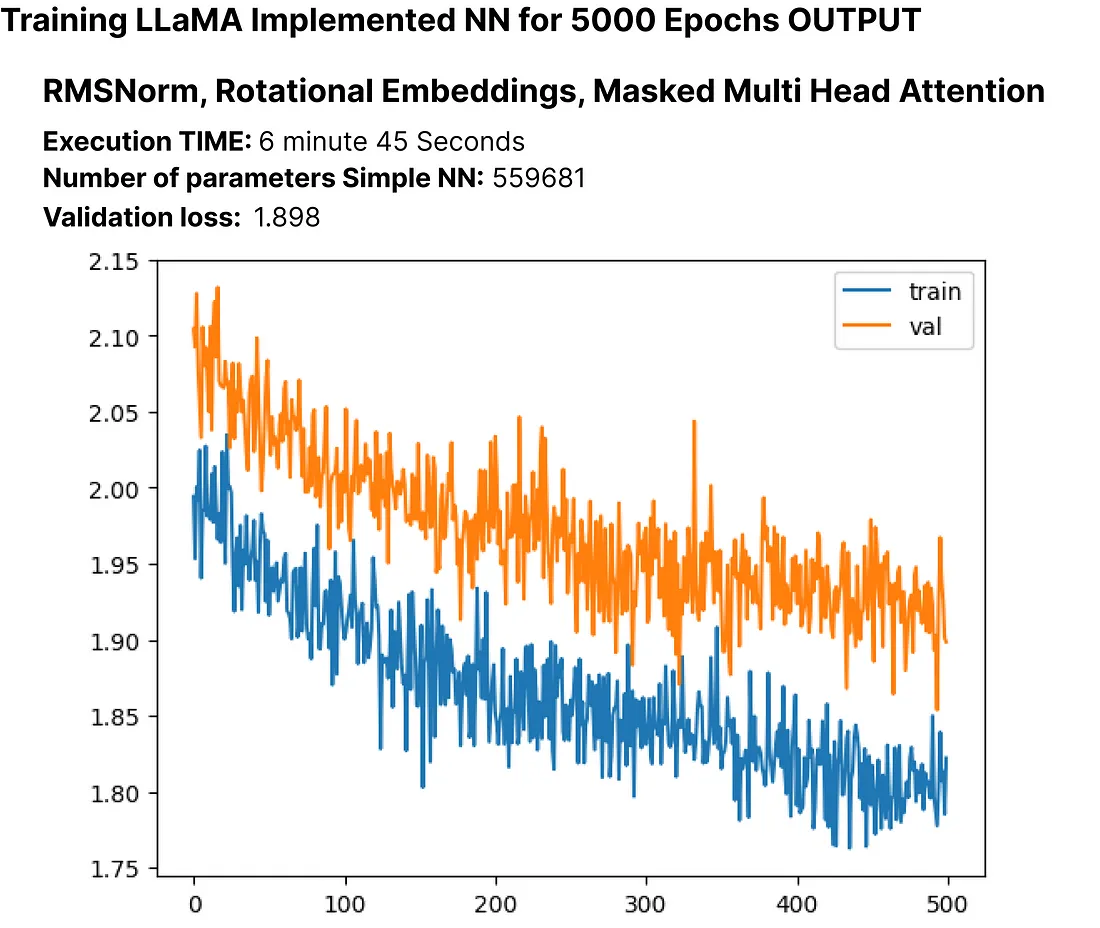

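Let's run the modified neural network model, which now uses RMSNorm, rotary embeddings, and masked multi-head attention, and observe the updated number of parameters and the loss.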

```python
# Create an instance of RopeModel (RMSNorm, RoPE, Multi-Head)
model = RopeModel(MASTER_CONFIG)
# Obtain batches for training
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Calculate logits and loss using the model
logits, loss = model(xs, ys)
# Define the Adam optimizer for model parameters
optimizer = torch.optim.Adam(model.parameters())
# Train the model
train(model, optimizer)
```

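The validation loss decreases slightly again. The parameter count jumps to roughly 560,000 (559,681 when counting the layers defined above). Most of the increase comes from the eight attention heads, each with its own 128 × 128 query, key, and value projections.

Let's train the model for a few more epochs to see whether the loss of our recreated LLaMA LLM keeps decreasing.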

```python
# Updating training configuration with more epochs and a logging interval
MASTER_CONFIG.update({
    "epochs": 5000,
    "log_interval": 10,
})
# Training the model with the updated configuration
train(model, optimizer)
```

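The validation loss keeps decreasing, which suggests that more training epochs could lower the loss further, although the gains may not be large.

SwiGLU activation function:

As mentioned earlier, LLaMA's authors use SwiGLU instead of ReLU, so we will implement the SwiGLU equation in our code.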

```python
class SwiGLU(nn.Module):
    """ Paper Link -> https://arxiv.org/pdf/2002.05202v1.pdf """

    def __init__(self, size):
        super().__init__()
        # Linear transformation for the gating mechanism
        self.linear_gate = nn.Linear(size, size)
        # Linear transformation for the main branch
        self.linear = nn.Linear(size, size)
        # Learnable beta parameter of Swish, initialized to 1
        # (assigning an nn.Parameter registers it with the module automatically)
        self.beta = nn.Parameter(torch.ones(1))

    def forward(self, x):
        # Swish-gated linear unit: Swish_beta(x W) * (x V)
        gate = self.linear_gate(x)
        swish_gate = gate * torch.sigmoid(self.beta * gate)
        # Element-wise multiplication of the gate and the main branch
        out = swish_gate * self.linear(x)
        return out
```
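Two quick checks help relate this module to standard building blocks. With beta = 1 (its initial value here), the Swish gate is exactly PyTorch's F.silu. For comparison, LLaMA's feed-forward block uses the SwiGLU form with three bias-free weight matrices and a hidden size of about 2/3 · 4d. The class SwiGLUFeedForward and its attribute names below are a hypothetical sketch of that layout, not the article's code:

```python
import torch
import torch.nn.functional as F
from torch import nn

torch.manual_seed(0)
z = torch.randn(4, 128)  # stands in for the output of linear_gate(x)
beta = torch.ones(1)     # the article initializes beta to 1

# Swish with beta = 1 is exactly SiLU, which PyTorch provides as F.silu
swish = z * torch.sigmoid(beta * z)
print(torch.allclose(swish, F.silu(z)))  # True


class SwiGLUFeedForward(nn.Module):
    """Hypothetical LLaMA-style FFN: (SiLU(x W1) * (x W3)) W2, without biases."""

    def __init__(self, d_model: int, hidden: int):
        super().__init__()
        self.w1 = nn.Linear(d_model, hidden, bias=False)  # gate projection
        self.w3 = nn.Linear(d_model, hidden, bias=False)  # value projection
        self.w2 = nn.Linear(hidden, d_model, bias=False)  # output projection

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.w2(F.silu(self.w1(x)) * self.w3(x))


d_model = 128
ffn = SwiGLUFeedForward(d_model, hidden=2 * 4 * d_model // 3)  # about 2/3 * 4d
out = ffn(torch.randn(2, 16, d_model))
print(out.shape)  # torch.Size([2, 16, 128])
```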

With the SwiGLU equation implemented in Python, we now integrate it into our modified LLaMA language model (RopeModel).

```python
class RopeModel(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # Embedding layer for input tokens
        self.embedding = nn.Embedding(config['vocab_size'], config['d_model'])
        # RMSNorm layer for pre-normalization
        self.rms = RMSNorm((config['context_window'], config['d_model']))
        # Multi-head attention layer with RoPE (Rotary Positional Embeddings)
        self.rope_attention = RoPEMaskedMultiheadAttention(config)
        # Linear layer followed by SwiGLU activation
        self.linear = nn.Sequential(
            nn.Linear(config['d_model'], config['d_model']),
            SwiGLU(config['d_model']),  # Adding SwiGLU activation
        )
        # Output linear layer
        self.last_linear = nn.Linear(config['d_model'], config['vocab_size'])
        # Printing total model parameters
        print("model params:", sum([m.numel() for m in self.parameters()]))

    def forward(self, idx, targets=None):
        x = self.embedding(idx)
        # One block of attention
        x = self.rms(x)  # RMS pre-normalization
        x = x + self.rope_attention(x)
        x = self.rms(x)  # RMS pre-normalization
        x = x + self.linear(x)  # Applying SwiGLU activation
        logits = self.last_linear(x)
        if targets is not None:
            # Calculate cross-entropy loss if targets are provided
            loss = F.cross_entropy(logits.view(-1, self.config['vocab_size']), targets.view(-1))
            return logits, loss
        else:
            return logits
```

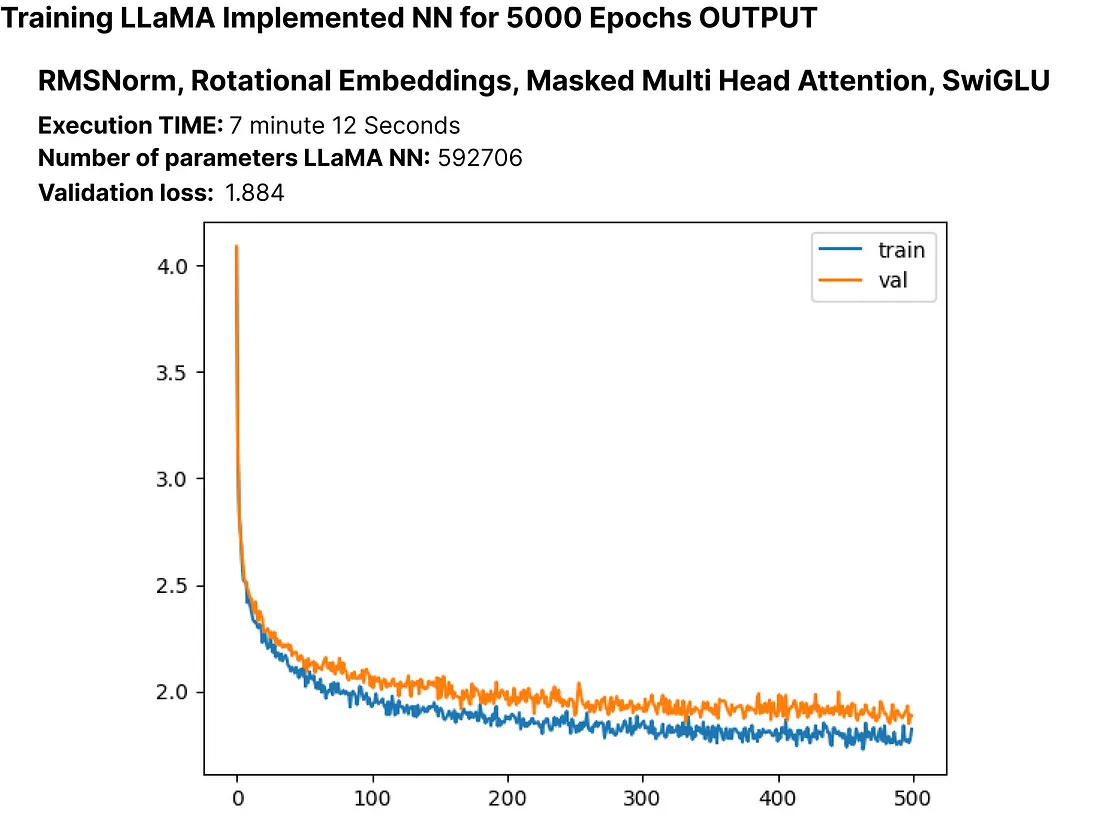

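Let's run the modified neural network model, which now uses RMSNorm, rotary embeddings, masked multi-head attention, and SwiGLU, and observe the updated number of parameters and the loss.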

```python
# Create an instance of RopeModel (RMSNorm, RoPE, Multi-Head, SwiGLU)
model = RopeModel(MASTER_CONFIG)
# Obtain batches for training
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Calculate logits and loss using the model
logits, loss = model(xs, ys)
# Define the Adam optimizer for model parameters
optimizer = torch.optim.Adam(model.parameters())
# Train the model
train(model, optimizer)
```

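The validation loss decreases slightly once more. The updated LLM now has roughly 590,000 parameters (592,706 by the same count): the SwiGLU module adds two 128 × 128 linear layers with biases plus the beta parameter.

So far, we have implemented the key components of the paper, namely RMSNorm, RoPE, and SwiGLU, and each of them led to a slight decrease in the loss.

Next, we will add layers to our LLaMA and check their effect on the loss. The original paper used 32 layers for the 7B version, but we will use only 4. Let's adjust the model settings accordingly.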

```python
# Update model configurations for the number of layers
MASTER_CONFIG.update({
    'n_layers': 4,  # Set the number of layers to 4
})
```

Let's start by creating a single layer to understand its effect.

```python
# add RMSNorm and residual connection
class LlamaBlock(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # RMSNorm layer
        self.rms = RMSNorm((config['context_window'], config['d_model']))
        # RoPE Masked Multihead Attention layer
        self.attention = RoPEMaskedMultiheadAttention(config)
        # Feedforward layer with SwiGLU activation
        self.feedforward = nn.Sequential(
            nn.Linear(config['d_model'], config['d_model']),
            SwiGLU(config['d_model']),
        )

    def forward(self, x):
        # one block of attention
        x = self.rms(x)  # RMS pre-normalization
        x = x + self.attention(x)  # residual connection
        x = self.rms(x)  # RMS pre-normalization
        x = x + self.feedforward(x)  # residual connection
        return x
```

Let's create an instance of the LlamaBlock class and apply it to a random tensor.

```python
# Create an instance of the LlamaBlock class with the provided configuration
block = LlamaBlock(MASTER_CONFIG)
# Generate a random tensor with the specified batch size, context window, and model dimension
random_input = torch.randn(MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'], MASTER_CONFIG['d_model'])
# Apply the LlamaBlock to the random input tensor
output = block(random_input)
```

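With a single layer working, we can now stack it to build multiple layers. Also, since we have now replicated each component of the LLaMA language model, we will rename our model class from "RopeModel" to "Llama".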

```python
# OrderedDict is needed to name the stacked blocks
from collections import OrderedDict

class Llama(nn.Module):
    def __init__(self, config):
        super().__init__()
        self.config = config
        # Embedding layer for token representations
        self.embeddings = nn.Embedding(config['vocab_size'], config['d_model'])
        # Sequential block of LlamaBlocks based on the specified number of layers
        self.llama_blocks = nn.Sequential(
            OrderedDict([(f"llama_{i}", LlamaBlock(config)) for i in range(config['n_layers'])])
        )
        # Feedforward network (FFN) for final output
        self.ffn = nn.Sequential(
            nn.Linear(config['d_model'], config['d_model']),
            SwiGLU(config['d_model']),
            nn.Linear(config['d_model'], config['vocab_size']),
        )
        # Print total number of parameters in the model
        print("model params:", sum([m.numel() for m in self.parameters()]))

    def forward(self, idx, targets=None):
        # Input token indices are passed through the embedding layer
        x = self.embeddings(idx)
        # Process the input through the LlamaBlocks
        x = self.llama_blocks(x)
        # Pass the processed input through the final FFN for output logits
        logits = self.ffn(x)
        # If targets are not provided, return only the logits
        if targets is None:
            return logits
        # If targets are provided, compute and return the cross-entropy loss
        else:
            loss = F.cross_entropy(logits.view(-1, self.config['vocab_size']), targets.view(-1))
            return logits, loss
```

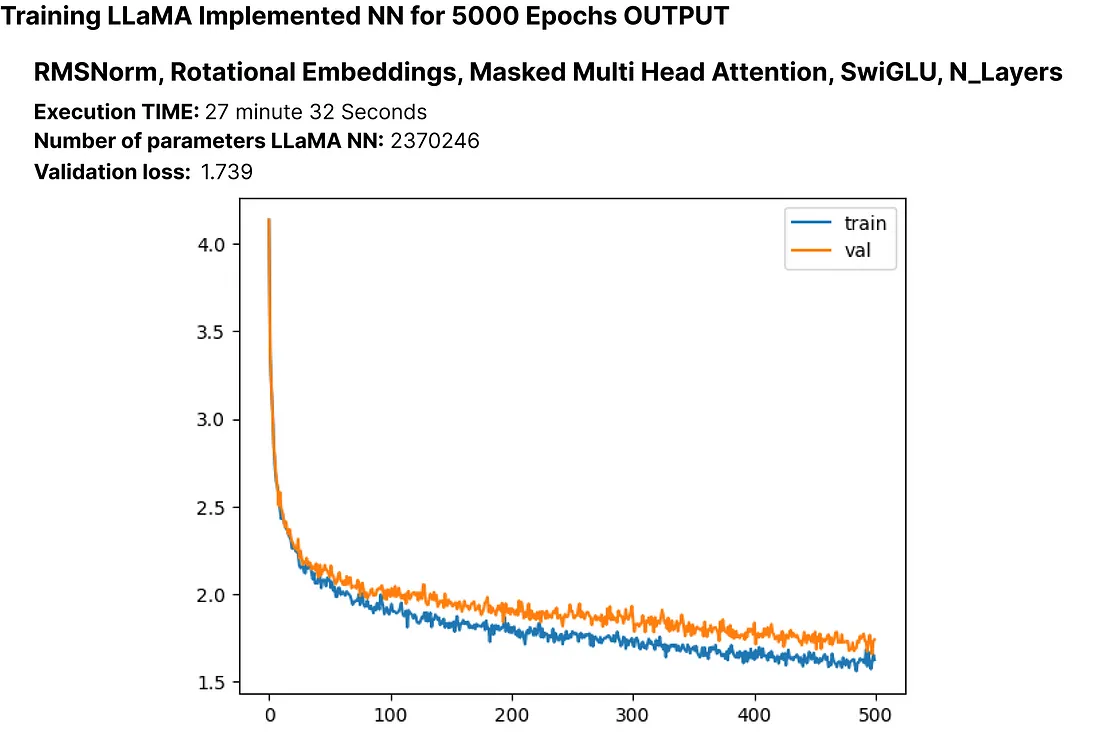

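Let's run the modified LLaMA model, now with RMSNorm, rotary embeddings, masked multi-head attention, SwiGLU, and N layers, and observe the updated number of parameters and the loss.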

```python
# Create an instance of Llama (RMSNorm, RoPE, Multi-Head, SwiGLU, N_layers)
llama = Llama(MASTER_CONFIG)
# Obtain batches for training
xs, ys = get_batches(dataset, 'train', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Calculate logits and loss using the model
logits, loss = llama(xs, ys)
# Define the Adam optimizer for model parameters
optimizer = torch.optim.Adam(llama.parameters())
# Train the model
train(llama, optimizer)
```

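Although there is a risk of overfitting, it is worth checking whether more training epochs reduce the loss further. Note also that our LLM now has more than 2 million parameters.

Let's train it for more epochs.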
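You can reproduce the "over 2 million" figure by counting the layers defined above, assuming d_model = 128, vocab_size = 65, n_heads = 8, context_window = 16 and n_layers = 4. Each attention head in this implementation projects to the full d_model rather than d_model / n_heads, which is why attention dominates the count. The helper linear below is hypothetical and only counts weights and biases:

```python
# d_model, vocab_size, n_heads, context_window, n_layers as configured in this article
d, v, h, cw, n_layers = 128, 65, 8, 16, 4


def linear(n_in, n_out, bias=True):
    """Number of parameters in an nn.Linear(n_in, n_out)."""
    return n_in * n_out + (n_out if bias else 0)


rmsnorm = cw * d                                                  # scale of shape (context_window, d_model)
attention = h * 3 * linear(d, d, bias=False) + linear(h * d, d)   # Q/K/V per head + output projection
swiglu = 2 * linear(d, d) + 1                                     # linear_gate, linear, beta
block = rmsnorm + attention + linear(d, d) + swiglu               # one LlamaBlock (shared RMSNorm)
total = v * d + n_layers * block + linear(d, d) + swiglu + linear(d, v)
print(f"{total:,}")  # 2,370,246
```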

```python
# Update the number of epochs in the configuration
MASTER_CONFIG.update({
    'epochs': 10000,
})
# Train the LLaMA model for the specified number of epochs
train(llama, optimizer, scheduler=None, config=MASTER_CONFIG)
```

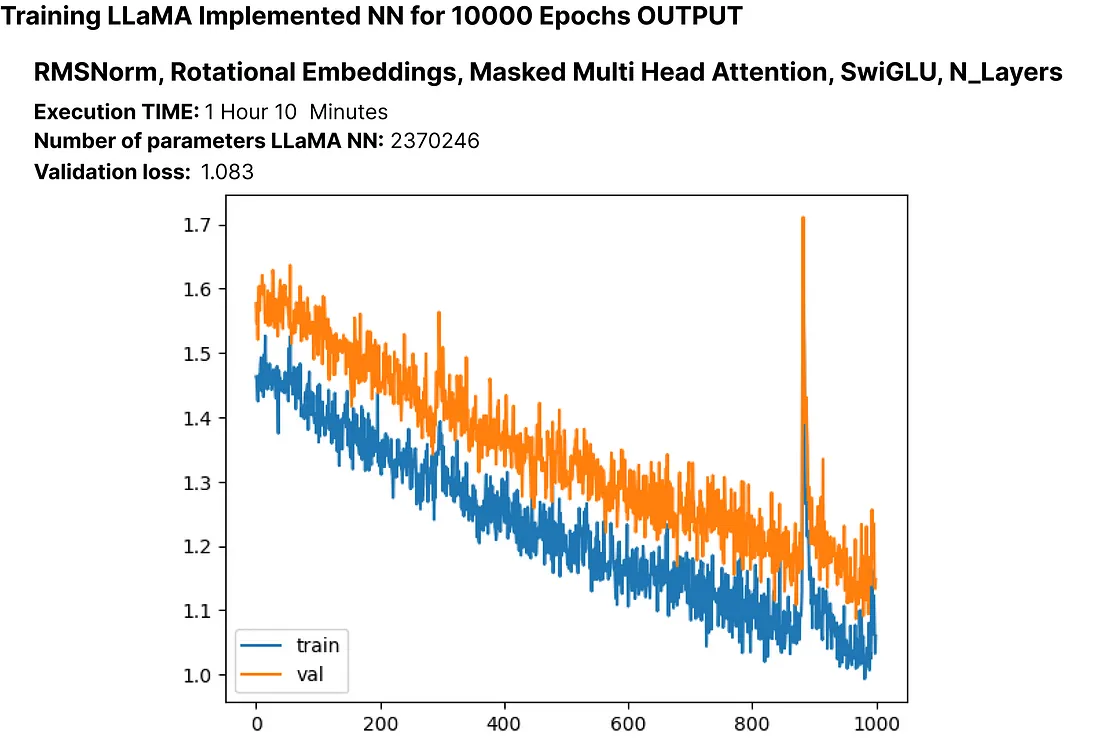

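The loss here is 1.08. We reached this lower loss without running into significant overfitting, which suggests the model is performing well.

Let's train the model again. The idea here is to add a scheduler for better optimization. Note, however, that the call below does not pass one: the scheduler argument defaults to None, so the learning rate stays constant. To actually use a scheduler, pass it through the scheduler argument of train, as in the hyperparameter experiment later in this article.

```python
# Train the model again (no scheduler is passed here, so the learning rate stays constant)
train(llama, optimizer, config=MASTER_CONFIG)
```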

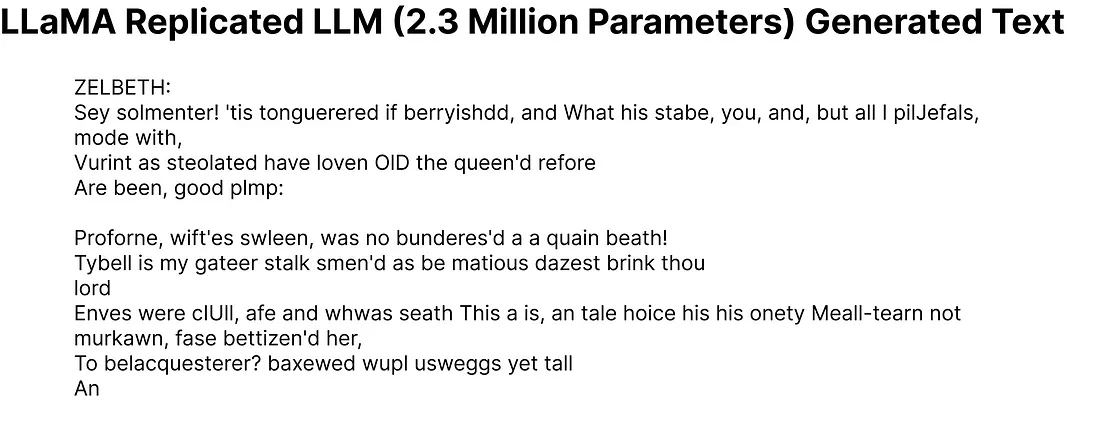

We have now implemented a scaled-down version of the LLaMA architecture on our own dataset. Let's look at the output of our 2-million-parameter language model.

```python
# Generate text using the trained LLM (llama) with a maximum of 500 tokens
generated_text = generate(llama, MASTER_CONFIG, 500)[0]
print(generated_text)
```

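Some of the generated words are not proper English, but with only 2 million parameters our LLM already shows a basic grasp of English.

Now, let's see how our model performs on the test set.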

```python
# Get batches from the test set
xs, ys = get_batches(dataset, 'test', MASTER_CONFIG['batch_size'], MASTER_CONFIG['context_window'])
# Pass the test data through the LLaMA model
logits, loss = llama(xs, ys)
# Print the loss on the test set
print(loss)
```

The loss on the test set is about 1.236.

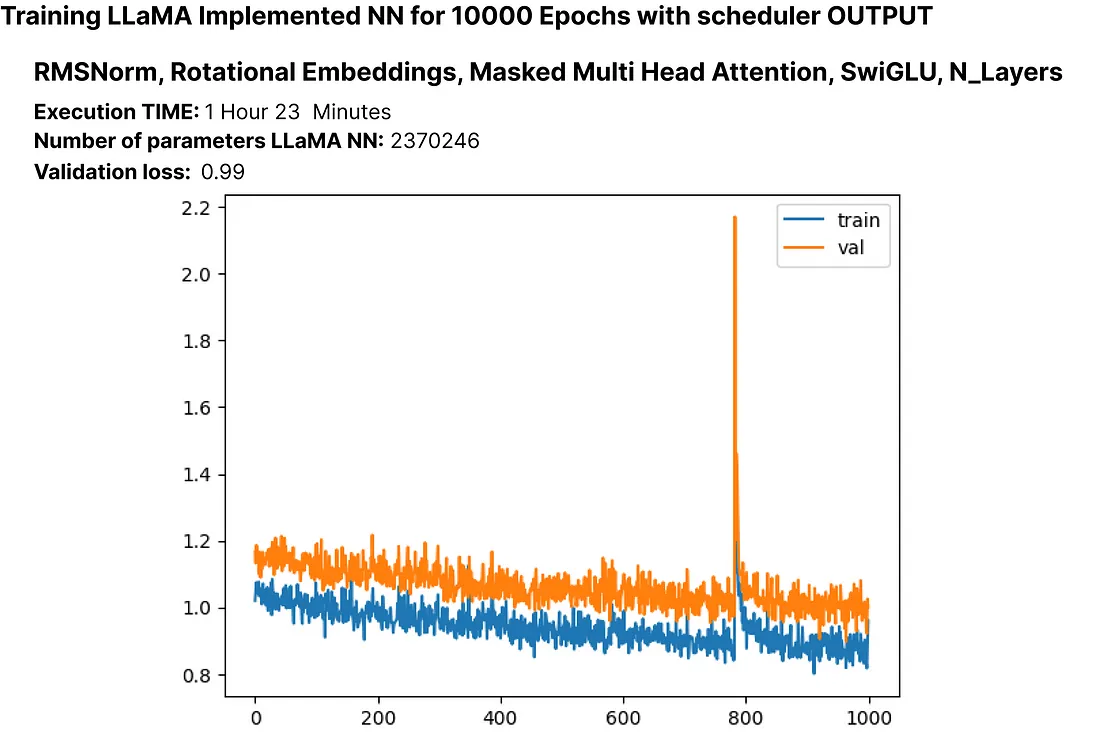

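Experimenting with hyperparameters

Tuning hyperparameters is a crucial step in training neural networks. The original LLaMA paper uses a cosine learning rate schedule, but in our experiments cosine annealing did not perform well. Here is an example of a hyperparameter experiment with this learning rate schedule: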

```python
# Update configuration
MASTER_CONFIG.update({
    "epochs": 1000
})

# Create a Llama model to train with a cosine annealing learning rate schedule
llama_with_cosine = Llama(MASTER_CONFIG)

# Define the optimizer with the betas and weight decay reported in the LLaMA paper
# (AdamW uses decoupled weight decay; it must optimize llama_with_cosine's parameters)
llama_optimizer = torch.optim.AdamW(
    llama_with_cosine.parameters(),
    betas=(.9, .95),
    weight_decay=.1,
    eps=1e-9,
    lr=1e-3
)

# Define the cosine annealing learning rate scheduler
scheduler = torch.optim.lr_scheduler.CosineAnnealingLR(llama_optimizer, 300, eta_min=1e-5)

# Train the Llama model with the specified optimizer and scheduler
train(llama_with_cosine, llama_optimizer, scheduler=scheduler)
```
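To see what this scheduler actually does, the sketch below steps CosineAnnealingLR on a dummy parameter with the same settings (lr=1e-3, T_max=300, eta_min=1e-5) and compares it with the closed-form cosine schedule. Note that CosineAnnealingLR does not stop at T_max: past step 300 the learning rate follows the cosine back up. With 1000 training epochs, it therefore rises and falls repeatedly, which may be one reason the schedule did not help here. The dummy parameter is an assumption for illustration:

```python
import math

import torch

# A single dummy parameter so we can build an optimizer (hypothetical setup)
param = torch.nn.Parameter(torch.zeros(1))
opt = torch.optim.AdamW([param], lr=1e-3)
sched = torch.optim.lr_scheduler.CosineAnnealingLR(opt, T_max=300, eta_min=1e-5)

lrs = []
for step in range(301):
    lrs.append(sched.get_last_lr()[0])  # learning rate used at this step
    opt.step()                          # no gradients, so the parameter is untouched
    sched.step()

# Closed form: eta_t = eta_min + (eta_max - eta_min) * (1 + cos(pi * t / T_max)) / 2
expected = [1e-5 + (1e-3 - 1e-5) * (1 + math.cos(math.pi * t / 300)) / 2 for t in range(301)]
print(lrs[0], lrs[150], lrs[300])  # starts at 1e-3, halfway near 5e-4, ends at 1e-5
```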

Saving the language model

You can save either the entire LLM or only its parameters as follows:

```python
# Save the entire model
torch.save(llama, 'llama_model.pth')
# If you want to save only the model parameters
torch.save(llama.state_dict(), 'llama_model_params.pth')
```
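Saving only the state_dict is usually the more portable choice. To restore it, you rebuild the model class with the same configuration and call load_state_dict. The sketch below shows the round trip on a hypothetical, much smaller stand-in model so that it runs on its own; with the article's model you would construct Llama(MASTER_CONFIG) instead. Loading the entire pickled model with torch.load, by contrast, requires the class definitions to be importable, and recent PyTorch versions also need weights_only=False for that.

```python
import os
import tempfile

import torch
from torch import nn


def build_model():
    # Hypothetical stand-in for the trained Llama model (same idea, far smaller)
    return nn.Sequential(nn.Embedding(65, 16), nn.Linear(16, 65))


torch.manual_seed(0)
model = build_model()

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "tiny_params.pth")
    torch.save(model.state_dict(), path)  # parameters only, as with llama.state_dict()

    # Restoring parameters: rebuild the same architecture, then load the tensors
    restored = build_model()
    restored.load_state_dict(torch.load(path))

idx = torch.tensor([[1, 2, 3]])
same = torch.equal(model(idx), restored(idx))
print(same)  # True
```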

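To save your PyTorch model for Hugging Face's Transformers library, you can use the save_pretrained method of a Transformers model class such as GPT-2. This only works if the weights fit that class's architecture. Our Llama class uses different module names and shapes from GPT-2, so the load_state_dict step in the example below fails unless you first write a proper weight conversion: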

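```python
from transformers import GPT2LMHeadModel, GPT2Config

# Assuming llama is your trained PyTorch model
llama_config = GPT2Config.from_dict(MASTER_CONFIG)
llama_transformers = GPT2LMHeadModel(config=llama_config)
# NOTE: this only succeeds if the state_dict matches GPT-2's architecture.
# Our Llama class uses different module names and shapes, so this call raises
# a RuntimeError listing missing and unexpected keys.
llama_transformers.load_state_dict(llama.state_dict())

# Specify the directory where you want to save the model
output_dir = "llama_model_transformers"
# Save the model and configuration
llama_transformers.save_pretrained(output_dir)
```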

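GPT2Config creates a GPT-2-compatible configuration object. MASTER_CONFIG keys that GPT-2 does not use, such as d_model or n_layers, are simply stored as extra attributes, so the model keeps GPT-2's default sizes. Next, a GPT2LMHeadModel is created and the weights of your Llama model are loaded into it, which requires matching parameter names and shapes. Finally, save_pretrained saves the model and configuration to the specified directory.

Once a correctly converted model has been saved this way, you can load it with the Transformers library.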

```python
from transformers import GPT2LMHeadModel, GPT2Config

# Specify the directory where the model was saved
output_dir = "llama_model_transformers"
# Load the model and configuration
llama_transformers = GPT2LMHeadModel.from_pretrained(output_dir)
```

Conclusion

In this article, we walked step by step through implementing the LLaMA approach to build our own small language model (LLM).