How to build a generative AI tool for information extraction from receipts

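Paper receipts come in all sorts of styles and formats, which makes them an interesting target for automated information extraction. They also contain a wealth of itemized costs that, if aggregated into a database, would be very useful for anyone who wants to track their spending in more detail than a bank statement allows.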

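Wouldn't it be cool if you could take a photo of a receipt, upload it to an app, have its information extracted and added to your personal expense database, and then query that database in natural language? You could ask questions of the data like "What did I buy on my last trip to IKEA?" or "What item do I spend the most money on at Safeway?". Such a system could also extend naturally to corporate finance and expense tracking. In this article we'll build a simple application that handles the first part of this process: extracting information from receipts and storing it in a database. Our system will monitor a Google Drive folder for new receipts, process them, and append the results to a .csv file.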

1. Background and motivation

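Technically, what we are doing is a type of automated information extraction called template filling. We have a predefined schema of fields that we want to extract from a receipt, and the task is to fill them in, or leave them blank where appropriate. A major issue is that the information in an image or scan of a receipt is unstructured. Optical character recognition (OCR) or PDF text-extraction libraries may do a decent job of finding the text, but they don't preserve the relative positions of words in the document well, which can make it hard to match each item name with its price, for example.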
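To make "template filling" concrete, here is a minimal sketch in plain Python. It assumes the field names of the ReceiptInformation model defined in Section 4 and the "N/A" convention used in the prompts; `fill_template` is a hypothetical helper that only illustrates the idea: the output always has exactly the template's fields, and anything the extractor did not find is left as a placeholder. The input values are made up.

```python
from typing import Dict, List

# Field names mirror the ReceiptInformation model used later in the article
RECEIPT_FIELDS: List[str] = [
    "vendor_name",
    "vendor_address",
    "datetime",
    "items_purchased",
    "subtotal",
    "tax_rate",
    "total_after_tax",
]


def fill_template(extracted: Dict[str, object], missing: str = "N/A") -> Dict[str, object]:
    """Return a record with exactly the template's fields.

    Fields the extractor found are copied over; fields it did not find are
    filled with the `missing` placeholder. Keys outside the template are dropped.
    """
    filled = {}
    for field in RECEIPT_FIELDS:
        value = extracted.get(field)
        if field == "items_purchased":
            # an absent item list becomes an empty list rather than "N/A"
            filled[field] = value if value is not None else []
        else:
            filled[field] = value if value not in (None, "") else missing
    return filled


# made-up extractor output for illustration
record = fill_template({"vendor_name": "Safeway", "total_after_tax": "12.40", "note": "ignored"})
print(record["vendor_address"])  # N/A
```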

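Traditionally, this problem has been tackled with template matching: you create a predefined geometric template of the document and then run extraction only on the regions known to contain the important information.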
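For comparison, the geometric half of classic template matching can be sketched as follows. This assumes a hypothetical template that stores each region of interest as fractions of the page width and height; the region names and coordinates are invented. The sketch only scales those regions to pixel boxes for a given scan size, which is exactly the part that has to be redone by hand for every new receipt layout.

```python
from typing import Dict, Tuple

# (left, top, right, bottom) as fractions of page width/height.
# Hypothetical layout for one specific receipt format.
Template = Dict[str, Tuple[float, float, float, float]]

EXAMPLE_TEMPLATE: Template = {
    "vendor_name": (0.0, 0.0, 1.0, 0.1),
    "items": (0.0, 0.2, 1.0, 0.8),
    "total": (0.5, 0.85, 1.0, 0.95),
}


def regions_to_pixel_boxes(
    template: Template, width: int, height: int
) -> Dict[str, Tuple[int, int, int, int]]:
    """Scale fractional regions to integer pixel boxes for an image of the given size.

    The (left, upper, right, lower) tuples follow the same convention as
    PIL's Image.crop, so each region could be cropped and OCR'd on its own.
    """
    boxes = {}
    for name, (left, top, right, bottom) in template.items():
        boxes[name] = (
            round(left * width),
            round(top * height),
            round(right * width),
            round(bottom * height),
        )
    return boxes


boxes = regions_to_pixel_boxes(EXAMPLE_TEMPLATE, width=800, height=2000)
print(boxes["total"])  # (400, 1700, 800, 1900)
```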

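To address this, more advanced services such as AWS Textract and AWS Rekognition combine pre-trained deep learning models for object detection, bounding-box generation, and named entity recognition (NER). I haven't actually tried these services on the problem at hand, but it would be interesting to compare their results with what we build here using OpenAI's LLMs.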

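Large language models (LLMs) such as gpt-3.5-turbo are also very good at extracting information from unstructured text and filling templates, especially once they are given a few examples in the prompt. This makes them much more flexible than template matching or fine-tuning, because adding a few examples of a new receipt format is much faster and cheaper than retraining a model or building a new geometric template.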

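If we are going to use gpt-3.5-turbo on the text extracted from receipts, the question becomes: how do we build the few-shot examples it learns from? We could of course write them by hand, but that doesn't scale well. Here we'll explore using gpt-4-vision for this. This version of gpt-4 can handle conversations that include images and seems particularly good at describing image contents. So, given an image of a receipt and a description of the key information we want to extract, gpt-4-vision should be able to do the job in one go, as long as the image is clear enough.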

2. Connecting to Google Drive

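We need a convenient place to store the raw receipt data. Google Drive is a good choice, and it offers a Python API that is relatively simple to use. I capture receipts with the GeniusScan app, which can upload .pdf, .jpeg, or other file types from the phone directly to a Google Drive folder. The app also does some useful pre-processing, such as automatically cropping documents, which helps the extraction process.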

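To set up API access to Google Drive, you need to create service account credentials, which you can generate by following the instructions here. For reference, I created a folder called "receiptchat" in my Drive and set up a key pair so that data can be read from this folder.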

The following code sets up a Drive service object, which lets you query Google Drive through a variety of methods:

```python
import os

from googleapiclient.discovery import build
from oauth2client.service_account import ServiceAccountCredentials


class GoogleDriveService:
    SCOPES = ["https://www.googleapis.com/auth/drive"]

    def __init__(self):
        # the directory where your credentials are stored
        base_path = os.path.dirname(os.path.dirname(os.path.dirname(__file__)))
        # The name of the file containing your credentials
        credential_path = os.path.join(base_path, "gdrive_credential.json")
        os.environ["GOOGLE_APPLICATION_CREDENTIALS"] = credential_path

    def build(self):
        # Get credentials into the desired format
        creds = ServiceAccountCredentials.from_json_keyfile_name(
            os.getenv("GOOGLE_APPLICATION_CREDENTIALS"), self.SCOPES
        )
        # Set up the Gdrive service object
        # (`build` here is googleapiclient.discovery.build, not this method)
        service = build("drive", "v3", credentials=creds, cache_discovery=False)
        return service
```

Our simple application only needs to do two things: list all the files in the Drive folder and download some of them. The following class handles both:

```python
import io
from typing import List, Optional

import googleapiclient.discovery
from googleapiclient.errors import HttpError
from googleapiclient.http import MediaIoBaseDownload


class GoogleDriveLoader:
    # These are the types of files we want to download
    VALID_EXTENSIONS = [".pdf", ".jpeg"]

    def __init__(self, service: googleapiclient.discovery.Resource):
        self.service = service

    def search_for_files(self) -> Optional[List[dict]]:
        """
        See https://developers.google.com/drive/api/guides/search-files#python
        """
        # This query searches for objects that are not folders and
        # contain the valid extensions
        query = "mimeType != 'application/vnd.google-apps.folder' and ("
        for i, ext in enumerate(self.VALID_EXTENSIONS):
            if i == 0:
                query += "name contains '{}' ".format(ext)
            else:
                query += "or name contains '{}' ".format(ext)
        query = query.rstrip()
        query += ")"

        # page through all results of the files().list() call
        files = []
        page_token = None
        try:
            while True:
                response = (
                    self.service.files()
                    .list(
                        q=query,
                        spaces="drive",
                        fields="nextPageToken, files(id, name)",
                        pageToken=page_token,
                    )
                    .execute()
                )
                for file in response.get("files", []):
                    # Record the id and name of each matching file
                    print(f'Found file: {file.get("name")}, {file.get("id")}')
                    files.append({"id": file.get("id"), "name": file.get("name")})
                page_token = response.get("nextPageToken", None)
                if page_token is None:
                    break
        except HttpError as error:
            print(f"An error occurred: {error}")
            files = None
        return files

    def download_file(self, real_file_id: str) -> Optional[bytes]:
        """
        Downloads a single file
        """
        try:
            request = self.service.files().get_media(fileId=real_file_id)
            file = io.BytesIO()
            downloader = MediaIoBaseDownload(file, request)
            done = False
            while done is False:
                status, done = downloader.next_chunk()
                print(f"Download {int(status.progress() * 100)}%.")
        except HttpError as error:
            print(f"An error occurred: {error}")
            # return early: there are no bytes to hand back
            return None
        return file.getvalue()
```
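The query-building loop in search_for_files can also be written with str.join. The sketch below is a hypothetical standalone helper, not part of the original class. It produces the same `mimeType` and `name contains` clauses and can optionally add two standard Drive v3 search terms: `'<folder id>' in parents`, to restrict results to a single folder such as "receiptchat", and `trashed = false`. The folder id used in the usage line is a placeholder.

```python
from typing import Optional, Sequence


def build_drive_query(
    extensions: Sequence[str],
    folder_id: Optional[str] = None,
    exclude_trashed: bool = True,
) -> str:
    """Build a Drive v3 `q` string matching non-folder files whose names contain any extension."""
    name_clauses = " or ".join(f"name contains '{ext}'" for ext in extensions)
    terms = [
        "mimeType != 'application/vnd.google-apps.folder'",
        f"({name_clauses})",
    ]
    if folder_id is not None:
        # only return direct children of this folder
        terms.append(f"'{folder_id}' in parents")
    if exclude_trashed:
        terms.append("trashed = false")
    return " and ".join(terms)


# Same string as the loop in search_for_files produces
print(build_drive_query([".pdf", ".jpeg"], exclude_trashed=False))
```

With the defaults it also skips files in the trash, which the original query does not do.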

It can be used like this:

```python
service = GoogleDriveService().build()
loader = GoogleDriveLoader(service)

all_files = loader.search_for_files()  # returns a list of unique file ids and names
# download one of them, e.g. the first file found
pdf_bytes = loader.download_file(all_files[0]["id"])  # returns bytes for that file
```

Now we can connect to Google Drive and bring image or pdf data onto our local machine. Next, we need to process it and extract the text.

3. Extracting raw text from .pdfs and images

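There are several well-documented open-source libraries for extracting raw text from pdfs and images. For pdfs we'll use PyPDF here (the code below imports it as PyPDF2); see here if you'd like a more comprehensive overview of similar packages. For jpeg images we'll use pytesseract, a wrapper around the tesseract OCR engine; installation instructions are here. Finally, we also want to be able to convert pdfs to jpeg format, which the pdf2image package can do.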

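Both PyPDF and pytesseract offer more advanced methods for extracting text from documents. For example, pytesseract can extract both the text and its bounding boxes (see here), which could be useful if in future we want to give the LLM more information about the layout of the receipt whose text it is processing. pdf2image provides a method to convert pdf bytes into jpeg images, which is exactly what we need. To turn jpeg bytes into an image we can view, we'll use the PIL package.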

```python
import io
from abc import ABC, abstractmethod

import numpy as np
import pytesseract
from pdf2image import convert_from_bytes
from PIL import Image
from PyPDF2 import PdfReader

DEFAULT_DPI = 50


class FileBytesToImage(ABC):
    @staticmethod
    @abstractmethod
    def convert_bytes_to_jpeg(file_bytes):
        raise NotImplementedError

    @staticmethod
    @abstractmethod
    def convert_bytes_to_text(file_bytes):
        raise NotImplementedError


class PDFBytesToImage(FileBytesToImage):
    @staticmethod
    def convert_bytes_to_jpeg(file_bytes, dpi=DEFAULT_DPI, return_array=False):
        # convert_from_bytes returns one PIL image per page; keep the first
        jpeg_data = convert_from_bytes(file_bytes, fmt="jpeg", dpi=dpi)[0]
        if return_array:
            jpeg_data = np.asarray(jpeg_data)
        return jpeg_data

    @staticmethod
    def convert_bytes_to_text(file_bytes):
        pdf_data = PdfReader(stream=io.BytesIO(initial_bytes=file_bytes))
        # receipt data should only have one page
        page = pdf_data.pages[0]
        return page.extract_text()


class JpegBytesToImage(FileBytesToImage):
    @staticmethod
    def convert_bytes_to_jpeg(file_bytes, dpi=DEFAULT_DPI, return_array=False):
        # dpi is unused here; it keeps the signature consistent with PDFBytesToImage
        jpeg_data = Image.open(io.BytesIO(file_bytes))
        if return_array:
            jpeg_data = np.array(jpeg_data)
        return jpeg_data

    @staticmethod
    def convert_bytes_to_text(file_bytes):
        jpeg_data = Image.open(io.BytesIO(file_bytes))
        text_data = pytesseract.image_to_string(image=jpeg_data, nice=1)
        return text_data
```
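As mentioned above, pytesseract can also return word-level boxes, e.g. via `pytesseract.image_to_data(image, output_type=pytesseract.Output.DICT)`, which yields parallel lists keyed by `'text'`, `'left'`, `'top'`, `'block_num'`, `'par_num'`, `'line_num'` and so on. The sketch below is a hypothetical helper that regroups such a dictionary into lines while keeping each line's vertical position, one possible way to pass layout hints to an LLM. It runs on a small hand-made dictionary rather than real OCR output, so no tesseract install is needed.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def group_words_into_lines(data: Dict[str, List]) -> List[Tuple[int, str]]:
    """Group word-level OCR output into (top, line_text) pairs, ordered top to bottom.

    `data` is assumed to have the shape of pytesseract.image_to_data(...,
    output_type=Output.DICT): parallel lists indexed by word position.
    """
    lines = defaultdict(list)
    for i, word in enumerate(data["text"]):
        if not word.strip():
            continue  # tesseract emits empty strings for block/paragraph/line rows
        key = (data["block_num"][i], data["par_num"][i], data["line_num"][i])
        lines[key].append((data["left"][i], data["top"][i], word))
    result = []
    for words in lines.values():
        words.sort()  # left-to-right within the line
        top = min(t for _, t, _ in words)
        result.append((top, " ".join(w for _, _, w in words)))
    return sorted(result)


# hand-made sample in the image_to_data dictionary shape
sample = {
    "text": ["", "MILK", "3.49", "BREAD", "2.99"],
    "left": [0, 10, 200, 10, 200],
    "top": [0, 50, 52, 80, 81],
    "block_num": [1, 1, 1, 1, 1],
    "par_num": [1, 1, 1, 1, 1],
    "line_num": [0, 1, 1, 2, 2],
}
print(group_words_into_lines(sample))  # [(50, 'MILK 3.49'), (80, 'BREAD 2.99')]
```

Keeping words on the same OCR line together is what lets each item name stay next to its price.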

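The code above uses an abstract base class to make it easier to extend. Suppose we want to add support for another file type in future. If we write the corresponding class and inherit from FileBytesToImage, we are forced to implement the convert_bytes_to_jpeg and convert_bytes_to_text methods in it. In a larger application, this makes it less likely that our classes introduce errors downstream.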
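A quick way to see what the abstract base class buys us: a subclass that forgets one of the abstract methods cannot be instantiated. Note that Python only runs this check at instantiation time, so calling the static methods directly on the class (as in `PDFBytesToImage.convert_bytes_to_jpeg(...)`) would not trigger it. The base class below is a stripped-down copy of FileBytesToImage, and `IncompleteConverter` is a hypothetical example.

```python
from abc import ABC, abstractmethod


class FileBytesToImage(ABC):
    @staticmethod
    @abstractmethod
    def convert_bytes_to_jpeg(file_bytes):
        raise NotImplementedError

    @staticmethod
    @abstractmethod
    def convert_bytes_to_text(file_bytes):
        raise NotImplementedError


class IncompleteConverter(FileBytesToImage):
    # Hypothetical converter that only implements one of the two methods
    @staticmethod
    def convert_bytes_to_text(file_bytes):
        return file_bytes.decode("utf-8")


try:
    IncompleteConverter()
except TypeError as err:
    # the message names the missing method, convert_bytes_to_jpeg
    print(err)
```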

The code can be used like this:

```python
bytes_to_image = PDFBytesToImage()
image = bytes_to_image.convert_bytes_to_jpeg(pdf_bytes)
text = bytes_to_image.convert_bytes_to_text(pdf_bytes)
```

4. Extracting information with gpt-4-vision

Now let's use Langchain to prompt gpt-4-vision to extract some information from our receipts. First, we can use Langchain's Pydantic support to create a model for the output.

```python
from typing import List

from langchain_core.pydantic_v1 import BaseModel, Field


class ReceiptItem(BaseModel):
    """Information about a single item on a receipt"""

    # description= must be passed by keyword; a positional argument sets the default
    item_name: str = Field(description="The name of the purchased item")
    item_cost: str = Field(description="The cost of the item")


class ReceiptInformation(BaseModel):
    """Information extracted from a receipt"""

    vendor_name: str = Field(
        description="The name of the company who issued the receipt"
    )
    vendor_address: str = Field(
        description="The street address of the company who issued the receipt"
    )
    datetime: str = Field(
        description="The date and time that the receipt was printed in MM/DD/YY HH:MM format"
    )
    items_purchased: List[ReceiptItem] = Field(description="List of purchased items")
    subtotal: str = Field(description="The total cost before tax was applied")
    tax_rate: str = Field(description="The tax rate applied")
    total_after_tax: str = Field(description="The total cost after tax")
```

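This is very powerful, because Langchain can use this Pydantic model to build formatting instructions for the LLM. Including those instructions in the prompt steers the model to produce json output with exactly the specified fields. Adding a new field is as simple as updating the model class.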
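Formatting instructions steer the model toward the schema but do not guarantee it. A cheap safeguard, sketched here with only the standard library, is to parse the reply and check that it carries exactly the top-level fields of ReceiptInformation and the item fields of ReceiptItem. `check_receipt_json` is a hypothetical helper, not part of Langchain; validating with the Pydantic model itself would be the fuller alternative.

```python
import json
from typing import List

RECEIPT_KEYS = {
    "vendor_name", "vendor_address", "datetime", "items_purchased",
    "subtotal", "tax_rate", "total_after_tax",
}
ITEM_KEYS = {"item_name", "item_cost"}


def check_receipt_json(raw: str) -> List[str]:
    """Return a list of problems found in a model reply (an empty list means it looks OK)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as err:
        return [f"not valid JSON: {err}"]
    if not isinstance(data, dict):
        return ["top level is not an object"]
    problems = []
    missing = RECEIPT_KEYS - set(data)
    extra = set(data) - RECEIPT_KEYS
    if missing:
        problems.append(f"missing fields: {sorted(missing)}")
    if extra:
        problems.append(f"unexpected fields: {sorted(extra)}")
    items = data.get("items_purchased", [])
    if not isinstance(items, list):
        problems.append("items_purchased is not a list")
    else:
        for i, item in enumerate(items):
            if not isinstance(item, dict) or set(item) != ITEM_KEYS:
                problems.append(f"item {i} does not match ReceiptItem")
    return problems
```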

Next, let's build a static prompt:

```python
from dataclasses import dataclass


@dataclass
class VisionReceiptExtractionPrompt:
    template: str = """
You are an expert at information extraction from images of receipts.
Given this image of a receipt, extract the following information:
- The name and address of the vendor
- The names and costs of each of the items that were purchased
- The date and time that the receipt was issued. This must be formatted like 'MM/DD/YY HH:MM'
- The subtotal (i.e. the total cost before tax)
- The tax rate
- The total cost after tax
Do not guess. If some information is missing just return "N/A" in the relevant field.
If you determine that the image is not of a receipt, just set all the fields in the formatting instructions to "N/A".
You must obey the output format under all circumstances. Please follow the formatting instructions exactly.
Do not return any additional comments or explanation.
"""
```

Now we need a class that takes an image and sends it to the LLM together with the prompt and the formatting instructions.

```python
import base64

from langchain.callbacks import get_openai_callback
from langchain.chains import TransformChain
from langchain_core.messages import HumanMessage
from langchain_core.output_parsers import JsonOutputParser
from langchain_core.runnables import chain


class VisionReceiptExtractionChain:
    def __init__(self, llm):
        self.llm = llm
        self.chain = self.set_up_chain()

    @staticmethod
    def load_image(path: dict) -> dict:
        """Load image and encode it as base64."""

        def encode_image(path):
            with open(path, "rb") as image_file:
                return base64.b64encode(image_file.read()).decode("utf-8")

        image_base64 = encode_image(path["image_path"])
        return {"image": image_base64}

    def set_up_chain(self):
        extraction_model = self.llm
        prompt = VisionReceiptExtractionPrompt()
        parser = JsonOutputParser(pydantic_object=ReceiptInformation)

        load_image_chain = TransformChain(
            input_variables=["image_path"],
            output_variables=["image"],
            transform=self.load_image,
        )

        # build custom chain that includes an image
        @chain
        def receipt_model_chain(inputs: dict) -> str:
            """Invoke model"""
            msg = extraction_model.invoke(
                [
                    HumanMessage(
                        content=[
                            {"type": "text", "text": prompt.template},
                            {"type": "text", "text": parser.get_format_instructions()},
                            {
                                "type": "image_url",
                                "image_url": {
                                    "url": f"data:image/jpeg;base64,{inputs['image']}"
                                },
                            },
                        ]
                    )
                ]
            )
            return msg.content

        # load image -> call the model -> parse the JSON reply
        return load_image_chain | receipt_model_chain | parser

    def run_and_count_tokens(self, input_dict: dict):
        with get_openai_callback() as cb:
            result = self.chain.invoke(input_dict)
        return result, cb
```
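The image reaches the model as a base64 data URL, built by load_image and receipt_model_chain above. The sketch below isolates that encoding step so it can be checked without calling the API. `to_data_url` and `image_content_part` are hypothetical helpers that work from raw bytes rather than a file path; adapting the chain to use them could avoid writing a temporary file.

```python
import base64


def to_data_url(image_bytes: bytes, mime_type: str = "image/jpeg") -> str:
    """Encode raw image bytes as a data URL for an OpenAI-style image_url content part."""
    encoded = base64.b64encode(image_bytes).decode("utf-8")
    return f"data:{mime_type};base64,{encoded}"


def image_content_part(image_bytes: bytes) -> dict:
    # Same shape as the "image_url" entry in the HumanMessage content above
    return {"type": "image_url", "image_url": {"url": to_data_url(image_bytes)}}


part = image_content_part(b"\xff\xd8\xff")  # the first bytes of a JPEG file, for illustration
print(part["image_url"]["url"])  # data:image/jpeg;base64,/9j/
```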

Note that we also use the OpenAI callback to count the tokens and cost associated with each call.

To run this, we can do the following:

```python
import os
from tempfile import NamedTemporaryFile

from langchain_openai import ChatOpenAI

model = ChatOpenAI(
    api_key=os.environ["OPENAI_API_KEY"],  # your OpenAI API key
    temperature=0,
    model="gpt-4-vision-preview",
    max_tokens=1024,
)
extractor = VisionReceiptExtractionChain(model)

# image from PDFBytesToImage.convert_bytes_to_jpeg()
prepared_data = {"image": image}

# the chain reads the image from disk, so save it to a temporary .jpeg first
with NamedTemporaryFile(suffix=".jpeg") as temp_file:
    prepared_data["image"].save(temp_file.name)
    res, cb = extractor.run_and_count_tokens({"image_path": temp_file.name})
```

For the randomly chosen file above, the result looks like this:

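```
{'vendor_name': 'N/A',
 'vendor_address': 'N/A',
 'datetime': 'N/A',
 'items_purchased': [],
 'subtotal': 'N/A',
 'tax_rate': 'N/A',
 'total_after_tax': 'N/A'}
```

Not very exciting, but at least the structure is correct! When a valid receipt is provided, these fields are filled in, and in a few tests on different receipts I found the extraction to be very accurate.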
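Because the prompt asks for every field to be "N/A" when the image is not a receipt, a result like the one above can be detected and skipped before it reaches the dataset. A minimal sketch, assuming the output dictionary shape shown above; `looks_like_non_receipt` is a hypothetical name.

```python
def looks_like_non_receipt(result: dict) -> bool:
    """True if every scalar field is "N/A" and no items were extracted."""
    scalar_values = [v for k, v in result.items() if k != "items_purchased"]
    return all(v == "N/A" for v in scalar_values) and not result.get("items_purchased")
```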

Our callback looks like this:

```
Tokens Used: 1170
Prompt Tokens: 1104
Completion Tokens: 66
Successful Requests: 1
Total Cost (USD): $0.01302
```
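The total cost in this output can be reproduced by hand. Assuming the gpt-4-vision-preview prices at the time of writing, $0.01 per 1K prompt tokens and $0.03 per 1K completion tokens (which is consistent with the figure above), the arithmetic is as follows. `call_cost_usd` is a hypothetical helper; the prices are inputs, not values looked up from OpenAI.

```python
def call_cost_usd(
    prompt_tokens: int,
    completion_tokens: int,
    prompt_price_per_1k: float,
    completion_price_per_1k: float,
) -> float:
    """Cost of one call given per-1K-token prices."""
    return (prompt_tokens / 1000) * prompt_price_per_1k + (
        completion_tokens / 1000
    ) * completion_price_per_1k


# 1104 * 0.01 / 1000 + 66 * 0.03 / 1000 = 0.01104 + 0.00198 = 0.01302
cost = call_cost_usd(1104, 66, prompt_price_per_1k=0.01, completion_price_per_1k=0.03)
print(f"${cost:.5f}")  # $0.01302
```

Almost all of the cost comes from the prompt side, which includes the image.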

This is essential for keeping track of costs, which can grow quickly when experimenting with gpt-4-class models.

5. Extracting information with gpt-3.5-turbo

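Suppose we have used the steps in Section 4 to generate some examples and saved them as a json file. Each example contains some extracted text and the corresponding key information, as defined by our ReceiptInformation Pydantic model. Now we want to inject these examples into a call to gpt-3.5-turbo, in the hope that it can generalize what it learns from them to new receipts. Few-shot learning is a powerful tool in prompt engineering and, if it works, would be a great fit for this use case: whenever a new receipt format is detected, we can generate an example with gpt-4-vision and add it to the list of examples used to prompt gpt-3.5-turbo. Then, when a receipt with a similar format comes along, gpt-3.5-turbo can extract its contents. In a way this resembles template matching, but without having to define the templates by hand.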
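The workflow just described (call gpt-4-vision only for a new receipt format, otherwise reuse stored examples with gpt-3.5-turbo) can be sketched as a small routing step. This sketch assumes that "format" is approximated by the first non-empty line of the OCR text, which is often the store name; that heuristic, `format_key`, and `ExampleStore` are all hypothetical, and the model calls themselves are left out.

```python
from dataclasses import dataclass, field
from typing import Dict, List


def format_key(extracted_text: str) -> str:
    """Hypothetical heuristic: the first non-empty OCR line, normalized, identifies the format."""
    for line in extracted_text.splitlines():
        if line.strip():
            return " ".join(line.lower().split())
    return ""


@dataclass
class ExampleStore:
    """Few-shot examples keyed by receipt format."""

    examples: Dict[str, dict] = field(default_factory=dict)

    def needs_vision_example(self, extracted_text: str) -> bool:
        # unseen format -> generate an example with gpt-4-vision first
        return format_key(extracted_text) not in self.examples

    def add(self, extracted_text: str, output: dict) -> None:
        # the {"input": ..., "output": ...} shape that TextReceiptExtractionChain expects
        self.examples[format_key(extracted_text)] = {"input": extracted_text, "output": output}

    def as_list(self) -> List[dict]:
        return list(self.examples.values())
```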

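There are many ways to encourage a text-based LLM to extract structured information from a block of text. One of the newest and most powerful approaches I found is in the Langchain documentation. The idea is to create a prompt containing a placeholder for examples, and then inject the examples into it as if they were the results of a function the LLM had called. This works together with model.with_structured_output(), which binds the Pydantic schema to the model as a function (tool) and parses the model's function call back into that schema.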
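Stripped of the Langchain classes, one injected example is just three chat messages in the OpenAI tool-calling format: the receipt text from the user, an assistant turn that "calls" a function named after the Pydantic model with the extracted fields as JSON arguments, and a tool reply acknowledging the call. The sketch below builds that sequence with plain dictionaries. It corresponds to what tool_example_to_messages in the listing that follows builds with HumanMessage, AIMessage and ToolMessage, but the helper itself is hypothetical.

```python
import json
import uuid
from typing import List


def example_to_openai_messages(
    text: str, extracted: dict, function_name: str = "ReceiptInformation"
) -> List[dict]:
    """Turn one (text, extracted fields) pair into user -> assistant tool call -> tool reply."""
    call_id = str(uuid.uuid4())
    return [
        {"role": "user", "content": text},
        {
            "role": "assistant",
            "content": "",
            "tool_calls": [
                {
                    "id": call_id,
                    "type": "function",
                    # the function name matches the Pydantic model name
                    "function": {"name": function_name, "arguments": json.dumps(extracted)},
                }
            ],
        },
        # the tool reply must reference the same call id
        {"role": "tool", "tool_call_id": call_id, "content": "You have correctly called this tool."},
    ]
```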

Let's see what this looks like in code. We start with the prompt.

```python
from dataclasses import dataclass
from typing import ClassVar

from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder


@dataclass
class TextReceiptExtractionPrompt:
    system: str = """
You are an expert at information extraction from images of receipts.
Given this image of a receipt, extract the following information:
- The name and address of the vendor
- The names and costs of each of the items that were purchased
- The date and time that the receipt was issued. This must be formatted like 'MM/DD/YY HH:MM'
- The subtotal (i.e. the total cost before tax)
- The tax rate
- The total cost after tax
Do not guess. If some information is missing just return "N/A" in the relevant field.
If you determine that the image is not of a receipt, just set all the fields in the formatting instructions to "N/A".
You must obey the output format under all circumstances. Please follow the formatting instructions exactly.
Do not return any additional comments or explanation.
"""

    # ClassVar keeps the shared template out of the dataclass fields
    # (newer Python versions reject unhashable objects as field defaults)
    prompt: ClassVar[ChatPromptTemplate] = ChatPromptTemplate.from_messages(
        [
            ("system", system),
            MessagesPlaceholder("examples"),
            ("human", "{input}"),
        ]
    )
```

The prompt text is exactly the same as in Section 4, except that we now have a MessagesPlaceholder where the examples we want to insert will go.

```python
import uuid
from typing import List, TypedDict

from langchain.callbacks import get_openai_callback
from langchain_core.messages import AIMessage, BaseMessage, HumanMessage, ToolMessage
from langchain_core.pydantic_v1 import BaseModel


class Example(TypedDict):
    """A representation of an example consisting of text input and expected tool calls.

    For extraction, the tool calls are represented as instances of pydantic model.
    """

    input: str
    tool_calls: List[BaseModel]


class TextReceiptExtractionChain:
    def __init__(self, llm, examples: List):
        self.llm = llm
        self.raw_examples = examples
        self.prompt = TextReceiptExtractionPrompt()
        self.chain, self.examples = self.set_up_chain()

    @staticmethod
    def tool_example_to_messages(example: Example) -> List[BaseMessage]:
        """Convert an example into a list of messages that can be fed into an LLM.

        This code is an adapter that converts our example to a list of messages
        that can be fed into a chat model.

        The list of messages per example corresponds to:
        1) HumanMessage: contains the content from which content should be extracted.
        2) AIMessage: contains the extracted information from the model
        3) ToolMessage: contains confirmation to the model that the model requested a tool correctly.

        The ToolMessage is required because some of the chat models are hyper-optimized for agents
        rather than for an extraction use case.
        """
        messages: List[BaseMessage] = [HumanMessage(content=example["input"])]
        openai_tool_calls = []
        for tool_call in example["tool_calls"]:
            openai_tool_calls.append(
                {
                    "id": str(uuid.uuid4()),
                    "type": "function",
                    "function": {
                        # The name of the function right now corresponds
                        # to the name of the pydantic model
                        # This is implicit in the API right now,
                        # and will be improved over time.
                        "name": tool_call.__class__.__name__,
                        "arguments": tool_call.json(),
                    },
                }
            )
        messages.append(
            AIMessage(content="", additional_kwargs={"tool_calls": openai_tool_calls})
        )
        tool_outputs = example.get("tool_outputs") or [
            "You have correctly called this tool."
        ] * len(openai_tool_calls)
        for output, tool_call in zip(tool_outputs, openai_tool_calls):
            messages.append(ToolMessage(content=output, tool_call_id=tool_call["id"]))
        return messages

    def set_up_examples(self):
        # Build a (text, ReceiptInformation) pair from each raw {"input", "output"} example
        examples = [
            (
                example["input"],
                ReceiptInformation(
                    vendor_name=example["output"]["vendor_name"],
                    vendor_address=example["output"]["vendor_address"],
                    datetime=example["output"]["datetime"],
                    items_purchased=[
                        ReceiptItem(
                            item_name=item["item_name"],
                            item_cost=item["item_cost"],
                        )
                        for item in example["output"]["items_purchased"]
                    ],
                    subtotal=example["output"]["subtotal"],
                    tax_rate=example["output"]["tax_rate"],
                    total_after_tax=example["output"]["total_after_tax"],
                ),
            )
            for example in self.raw_examples
        ]
        messages = []
        for text, tool_call in examples:
            messages.extend(
                self.tool_example_to_messages({"input": text, "tool_calls": [tool_call]})
            )
        return messages

    def set_up_chain(self):
        extraction_model = self.llm
        prompt = self.prompt.prompt
        examples = self.set_up_examples()
        runnable = prompt | extraction_model.with_structured_output(
            schema=ReceiptInformation,
            method="function_calling",
            include_raw=False,
        )
        return runnable, examples

    def run_and_count_tokens(self, input_dict: dict):
        # inject the examples here
        input_dict["examples"] = self.examples
        with get_openai_callback() as cb:
            result = self.chain.invoke(input_dict)
        return result, cb
```

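TextReceiptExtractionChain takes a list of examples, each with "input" and "output" keys (note how the set_up_examples method uses them). For each example we create a ReceiptInformation object, and then we format the results into a list of messages that can be passed into the prompt. All the work in tool_example_to_messages is there to convert between different Langchain formats.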

Running this is very similar to what we did with the vision model:

```python
import json
import os

from langchain_openai import ChatOpenAI

# Load the examples
EXAMPLES_PATH = "receiptchat/datasets/example_extractions.json"
with open(EXAMPLES_PATH) as f:
    loaded_examples = json.load(f)

# each stored extraction keeps its OCR text under file_details.extracted_text
loaded_examples = [
    {"input": x["file_details"]["extracted_text"], "output": x}
    for x in loaded_examples
]

# Set up the LLM caller
llm = ChatOpenAI(
    api_key=os.environ["OPENAI_API_KEY"],  # your OpenAI API key
    temperature=0,
    model="gpt-3.5-turbo",
)
extractor = TextReceiptExtractionChain(llm, loaded_examples)

# convert a PDF file from Google Drive into text
# (downloaded_data: the bytes returned by GoogleDriveLoader.download_file)
text = PDFBytesToImage.convert_bytes_to_text(downloaded_data)
extracted_information, cb = extractor.run_and_count_tokens({"input": text})
```

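Even with 10 examples, this call costs less than half as much as the gpt-4-vision call, and it returns faster too. As the number of examples grows, you may need to switch to gpt-3.5-turbo-16k to avoid exceeding the context window.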
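To decide when the example list has outgrown the context window, a rough budget check is enough. The sketch below assumes the common rule of thumb of about four characters per token; that ratio is only an approximation (a real tokenizer such as tiktoken gives exact counts), and the context size and the tokens reserved for the reply are parameters you would set for the model you use. Each entry in `example_texts` should cover an example's receipt text plus its JSON output. Both helpers are hypothetical.

```python
from typing import List


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate from character count (a heuristic, not a tokenizer)."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(
    system_prompt: str,
    example_texts: List[str],
    new_input: str,
    context_tokens: int,
    reserved_for_output: int = 1024,
) -> bool:
    """Check whether prompt + examples + input plausibly fit, leaving room for the reply."""
    used = estimate_tokens(system_prompt) + estimate_tokens(new_input)
    used += sum(estimate_tokens(t) for t in example_texts)
    return used + reserved_for_output <= context_tokens
```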

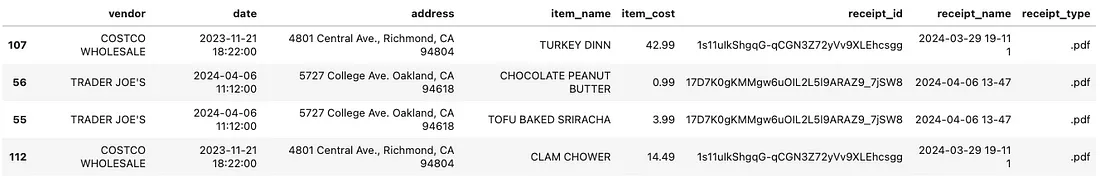

Output dataset

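Once you have collected some receipts, you can run the extraction methods described in Sections 4 and 5 and gather the results into a dataframe. This can then be stored and appended to whenever a new receipt appears in Google Drive.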
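A minimal sketch of that append step, assuming one row per purchased item and a `file_id` column (the Drive file id returned by GoogleDriveLoader) used to skip receipts that were already processed. It uses only the standard csv module rather than a dataframe library; the column names and the `processed_ids` and `append_receipt` helpers are hypothetical.

```python
import csv
import os
from typing import Set

COLUMNS = ["file_id", "vendor_name", "datetime", "item_name", "item_cost", "total_after_tax"]


def processed_ids(csv_path: str) -> Set[str]:
    """Return the file ids already present in the CSV (empty set if it doesn't exist yet)."""
    if not os.path.exists(csv_path):
        return set()
    with open(csv_path, newline="") as f:
        return {row["file_id"] for row in csv.DictReader(f)}


def append_receipt(csv_path: str, file_id: str, receipt: dict) -> int:
    """Append one row per purchased item and return the number of rows written.

    Receipts whose file_id is already in the CSV are skipped. Note that a
    receipt with no items writes no rows, so it will not be marked as processed.
    """
    if file_id in processed_ids(csv_path):
        return 0
    write_header = not os.path.exists(csv_path)
    rows = [
        {
            "file_id": file_id,
            "vendor_name": receipt["vendor_name"],
            "datetime": receipt["datetime"],
            "item_name": item["item_name"],
            "item_cost": item["item_cost"],
            "total_after_tax": receipt["total_after_tax"],
        }
        for item in receipt["items_purchased"]
    ]
    with open(csv_path, "a", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=COLUMNS)
        if write_header:
            writer.writeheader()
        writer.writerows(rows)
    return len(rows)
```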