Fine-Tuning with Hugging Face Transformers

In general, full fine-tuning updates every model parameter, so all of the parameters must be loaded into memory during training.

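Full fine-tuning has the following limitations:

- Training needs roughly six times the memory of the model weights alone, because gradients, optimizer states, and activations must be stored as well
- It is prone to catastrophic forgetting: the model can lose what it originally learned
- It requires more time and a larger compute budget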
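To make the memory point concrete, here is a back-of-the-envelope sketch. The 4 bytes per parameter (fp32 weights) and the flat 6x multiplier are rule-of-thumb assumptions, and `estimate_memory_gib` is a hypothetical helper, not part of any library. The parameter count is the one reported for FLAN-T5 base later in this post.

```python
BYTES_PER_PARAM_FP32 = 4   # assumption: weights stored as 32-bit floats
TRAINING_MULTIPLIER = 6    # assumption: the rule of thumb quoted above

def estimate_memory_gib(num_params, training=False):
    """Rough memory estimate in GiB for holding (or fully fine-tuning) a model."""
    total_bytes = num_params * BYTES_PER_PARAM_FP32
    if training:
        total_bytes *= TRAINING_MULTIPLIER
    return total_bytes / 1024**3

flan_t5_base_params = 247_577_856
weights_gib = estimate_memory_gib(flan_t5_base_params)
training_gib = estimate_memory_gib(flan_t5_base_params, training=True)
print(f"weights only:     {weights_gib:.2f} GiB")
print(f"full fine-tuning: {training_gib:.2f} GiB")
```

Real usage also depends on batch size, sequence length, precision, and the optimizer, so treat the result as an order-of-magnitude guide only.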

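In this post, we fully fine-tune a model and evaluate it against the original; the next part fine-tunes the same model with a parameter-efficient method.

Let's start by setting up the environment.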

pip install --upgrade pip
pip install --disable-pip-version-check \
    torch==1.13.1 \
    torchdata==0.5.1 --quiet
pip install -U transformers
pip install -U datasets
pip install evaluate==0.4.0 \
    rouge_score==0.1.2 \
    loralib==0.1.1 \
    peft==0.3.0 --quiet

Import all the required components:

from datasets import load_dataset
from transformers import AutoModelForSeq2SeqLM, AutoModelForCausalLM, AutoTokenizer, GenerationConfig, TrainingArguments, Trainer
import torch
import time
import evaluate
import pandas as pd
import numpy as np

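Training any model requires a dataset and a task to train on. In this example, I compare training FLAN-T5 on a summarization task with and without LoRA. First, let's load an open-source dialogue summarization dataset from Hugging Face.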

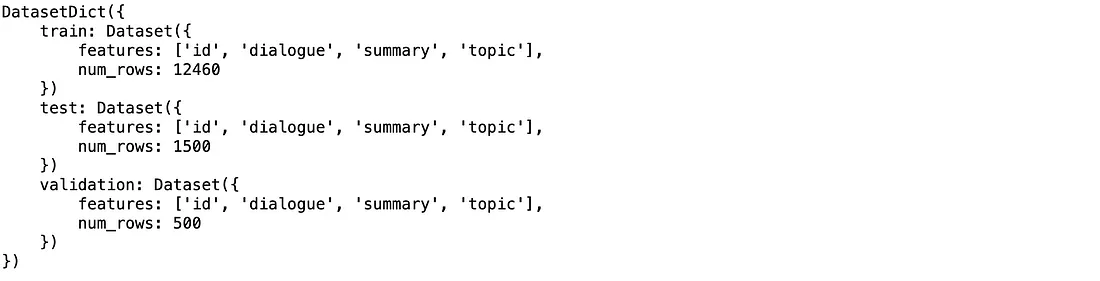

huggingface_dataset_name = "knkarthick/dialogsum"

dataset = load_dataset(huggingface_dataset_name)

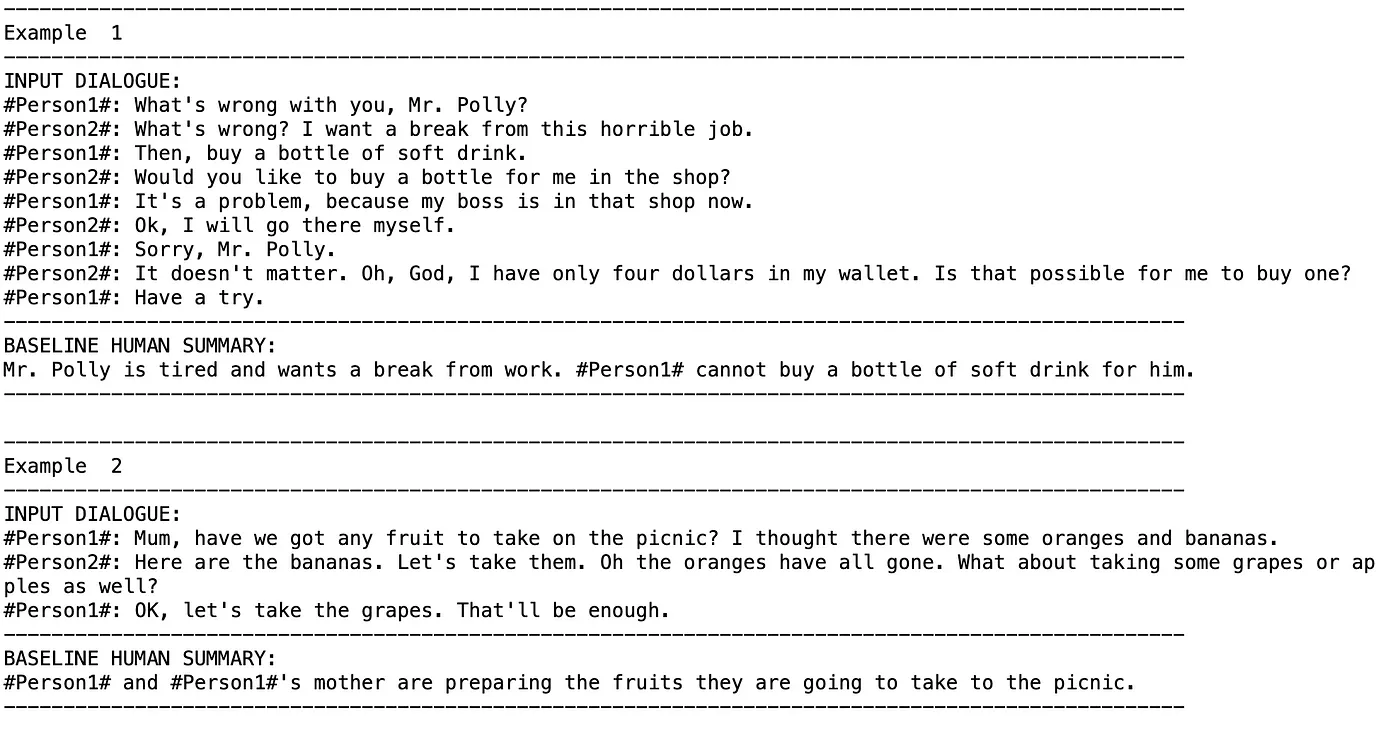

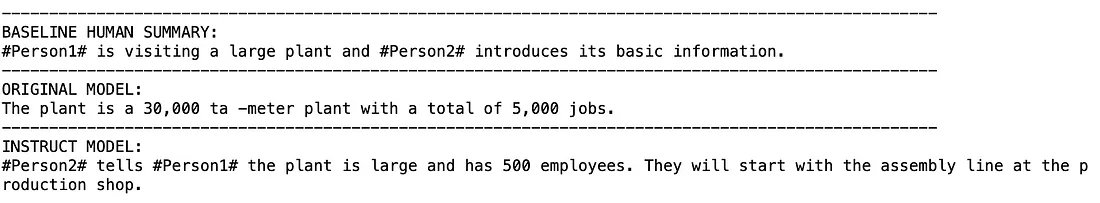

Let's look at a couple of examples from the dataset:

example_indices = [90, 270]

# Separator line made of 99 dashes.
dash_line = '-'.join('' for x in range(100))

for i, index in enumerate(example_indices):
    print(dash_line)
    print('Example ', i + 1)
    print(dash_line)
    print('INPUT DIALOGUE:')
    print(dataset['test'][index]['dialogue'])
    print(dash_line)
    print('BASELINE HUMAN SUMMARY:')
    print(dataset['test'][index]['summary'])
    print(dash_line)
    print()

Load the model for training without LoRA and print the number of parameters that will be trained:

model_name = 'google/flan-t5-base'

# Load FLAN-T5 base in bfloat16; device_map='auto' places it on the available device.
original_model = AutoModelForSeq2SeqLM.from_pretrained(
    model_name,
    torch_dtype=torch.bfloat16,
    device_map='auto',
)
tokenizer = AutoTokenizer.from_pretrained(model_name)

def print_number_of_trainable_model_parameters(model):
    """Return a summary of trainable versus total parameter counts."""
    trainable_model_params = 0
    all_model_params = 0
    for _, param in model.named_parameters():
        all_model_params += param.numel()
        if param.requires_grad:
            trainable_model_params += param.numel()
    return f"trainable model parameters: {trainable_model_params}\nall model parameters: {all_model_params}\npercentage of trainable model parameters: {100 * trainable_model_params / all_model_params:.2f}%"

print(print_number_of_trainable_model_parameters(original_model))

Output:

trainable model parameters: 247577856
all model parameters: 247577856
percentage of trainable model parameters: 100.00%

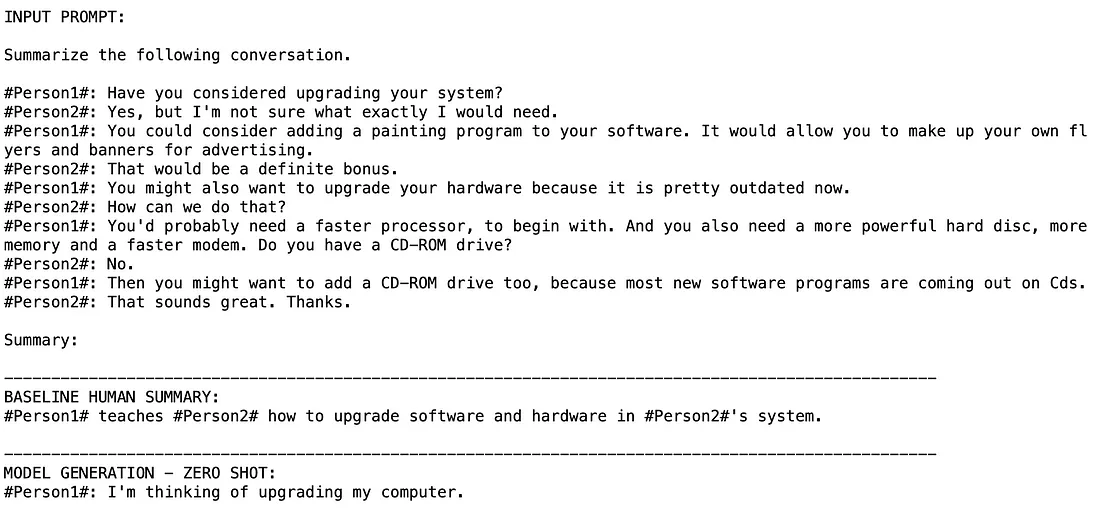

Test the model on this task with zero-shot inference:

index = 200
dialogue = dataset['test'][index]['dialogue']
summary = dataset['test'][index]['summary']

prompt = f"""
Summarize the following conversation.

{dialogue}

Summary:
"""

inputs = tokenizer(prompt, return_tensors='pt')

# Move the inputs to the model's device before generating.
output = tokenizer.decode(
    original_model.generate(
        inputs["input_ids"].to(original_model.device),
        max_new_tokens=200,
    )[0],
    skip_special_tokens=True
)

dash_line = '-'.join('' for x in range(100))
print(dash_line)
print(f'INPUT PROMPT:\n{prompt}')
print(dash_line)
print(f'BASELINE HUMAN SUMMARY:\n{summary}\n')
print(dash_line)
print(f'MODEL GENERATION - ZERO SHOT:\n{output}')

Now let's try full fine-tuning:

# preprocess the prompt-response dataset into tokens and pull out their input_ids (1 per token).
def tokenize_function(example):
    start_prompt = 'Summarize the following conversation.\n\n'
    end_prompt = '\n\nSummary: '
    # The dataset column holding the input text is "dialogue".
    prompt = [start_prompt + dialogue + end_prompt for dialogue in example["dialogue"]]
    example['input_ids'] = tokenizer(prompt, padding="max_length", truncation=True, return_tensors="pt").input_ids
    example['labels'] = tokenizer(example["summary"], padding="max_length", truncation=True, return_tensors="pt").input_ids
    return example

# The dataset actually contains 3 diff splits: train, validation, test.
# The tokenize_function code is handling all data across all splits in batches.

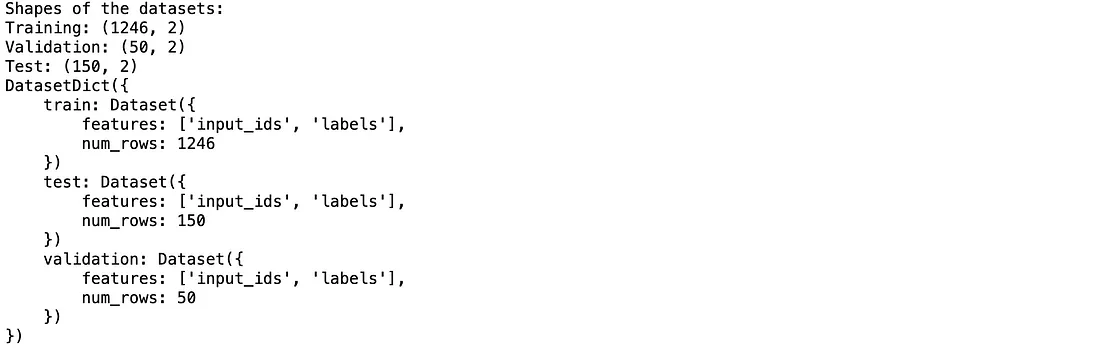

tokenized_datasets = dataset.map(tokenize_function, batched=True)
tokenized_datasets = tokenized_datasets.remove_columns(['id', 'topic', 'dialogue', 'summary',])

# To save some time in the lab, you will subsample the dataset
tokenized_datasets = tokenized_datasets.filter(lambda example, index: index % 10 == 0, with_indices=True)

print("Shapes of the datasets:")
print(f"Training: {tokenized_datasets['train'].shape}")
print(f"Test: {tokenized_datasets['test'].shape}")
print(tokenized_datasets)
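To see what the batched `map` and the index-based `filter` do without downloading anything, here is a plain-Python sketch. The toy batch and the helper names `build_prompts` and `subsample` are made up for illustration; the real pipeline goes through the `datasets` library and the tokenizer.

```python
START_PROMPT = 'Summarize the following conversation.\n\n'
END_PROMPT = '\n\nSummary: '

def build_prompts(batch):
    """With batched=True each column arrives as a list; wrap every dialogue in the instruction."""
    return [START_PROMPT + dialogue + END_PROMPT for dialogue in batch['dialogue']]

def subsample(rows, every=10):
    """Keep the rows whose index is a multiple of `every`, like the filter above."""
    return [row for index, row in enumerate(rows) if index % every == 0]

# Hypothetical two-row batch in the same column layout as the dataset.
toy_batch = {'dialogue': ['#Person1#: Hi!\n#Person2#: Hello.',
                          '#Person1#: Bye.\n#Person2#: See you.']}
prompts = build_prompts(toy_batch)
print(prompts[0])

kept = subsample(list(range(25)))
print(kept)  # indices 0, 10 and 20 survive
```

Keeping one row in ten shortens the run considerably, at the price of a much smaller training set.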

output_dir = f'./dialogue-summary-training-{str(int(time.time()))}'

training_args = TrainingArguments(
    output_dir=output_dir,
    learning_rate=1e-5,
    num_train_epochs=1,
    weight_decay=0.01,
    logging_steps=1,
    max_steps=1,  # a positive max_steps overrides num_train_epochs
    save_strategy='epoch'
)

trainer = Trainer(
    model=original_model,
    args=training_args,
    train_dataset=tokenized_datasets['train'],
    eval_dataset=tokenized_datasets['validation']
)

trainer.train()  # may raise an out-of-memory error on GPUs with limited memory
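It may help to see why this configuration performs only a single optimizer step. The sketch below mimics, in plain Python, how a positive `max_steps` takes precedence over `num_train_epochs`. `planned_training_steps` is a hypothetical helper, and the example count and batch size are illustrative assumptions rather than values read from the run above; it also ignores gradient accumulation and multiple devices.

```python
import math

def planned_training_steps(num_examples, batch_size, num_train_epochs, max_steps=-1):
    """Number of optimizer steps a run would take (single device, no gradient accumulation)."""
    if max_steps > 0:
        # A positive max_steps overrides the epoch-based schedule.
        return max_steps
    steps_per_epoch = math.ceil(num_examples / batch_size)
    return steps_per_epoch * num_train_epochs

full_epoch = planned_training_steps(1246, 8, num_train_epochs=1)
capped = planned_training_steps(1246, 8, num_train_epochs=1, max_steps=1)
print(full_epoch, capped)
```

For a real training run, removing `max_steps` (or leaving it at its default of -1) lets the epoch setting take effect.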

Depending on your GPU, the step above either fails with an out-of-memory error or finishes and saves a checkpoint of about 1 GB. I trained the model on a machine with a 32 GB GPU and tested its performance as follows:

instruct_model = AutoModelForSeq2SeqLM.from_pretrained("./flan-dialogue-summary-checkpoint", torch_dtype=torch.bfloat16)

index = 210
dialogue = dataset['test'][index]['dialogue']
human_baseline_summary = dataset['test'][index]['summary']

prompt = f"""
Summarize the following conversation.

{dialogue}

Summary:
"""

input_ids = tokenizer(prompt, return_tensors="pt").input_ids

# The two models may sit on different devices, so move the inputs to each model's device.
original_model_outputs = original_model.generate(input_ids=input_ids.to(original_model.device), generation_config=GenerationConfig(max_new_tokens=200, num_beams=1))
original_model_text_output = tokenizer.decode(original_model_outputs[0], skip_special_tokens=True)

instruct_model_outputs = instruct_model.generate(input_ids=input_ids.to(instruct_model.device), generation_config=GenerationConfig(max_new_tokens=200, num_beams=1))
instruct_model_text_output = tokenizer.decode(instruct_model_outputs[0], skip_special_tokens=True)

print(dash_line)
print(f'BASELINE HUMAN SUMMARY:\n{human_baseline_summary}')
print(dash_line)
print(f'ORIGINAL MODEL:\n{original_model_text_output}')
print(dash_line)
print(f'INSTRUCT MODEL:\n{instruct_model_text_output}')

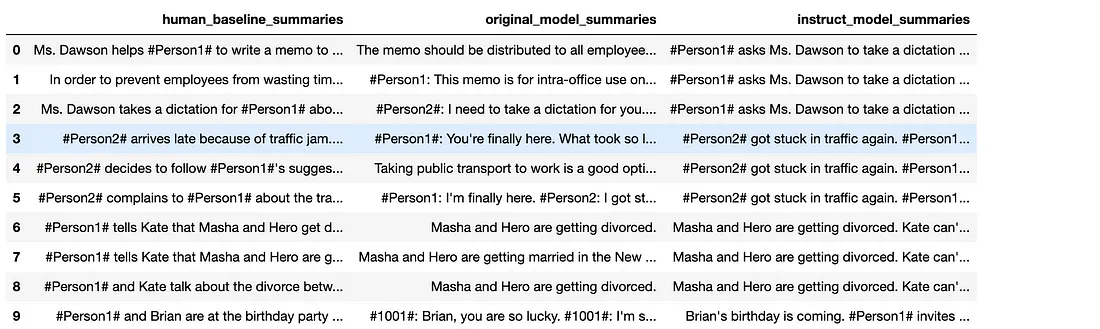

Next, evaluate the models with ROUGE, a metric well suited to text summarization. To keep things easy to follow, we evaluate only 10 inputs and collect the outputs in a DataFrame before computing the ROUGE scores:

rouge = evaluate.load('rouge')

dialogues = dataset['test'][0:10]['dialogue']
human_baseline_summaries = dataset['test'][0:10]['summary']

original_model_summaries = []
instruct_model_summaries = []

for dialogue in dialogues:
    prompt = f"""
Summarize the following conversation.

{dialogue}

Summary: """
    input_ids = tokenizer(prompt, return_tensors="pt").input_ids

    # Generate a summary with the original model.
    original_model_outputs = original_model.generate(input_ids=input_ids.to(original_model.device), generation_config=GenerationConfig(max_new_tokens=200))
    original_model_text_output = tokenizer.decode(original_model_outputs[0], skip_special_tokens=True)
    original_model_summaries.append(original_model_text_output)

    # Generate a summary with the fully fine-tuned model.
    instruct_model_outputs = instruct_model.generate(input_ids=input_ids.to(instruct_model.device), generation_config=GenerationConfig(max_new_tokens=200))
    instruct_model_text_output = tokenizer.decode(instruct_model_outputs[0], skip_special_tokens=True)
    instruct_model_summaries.append(instruct_model_text_output)

zipped_summaries = list(zip(human_baseline_summaries, original_model_summaries, instruct_model_summaries))
df = pd.DataFrame(zipped_summaries, columns = ['human_baseline_summaries', 'original_model_summaries', 'instruct_model_summaries'])
df

Let's use the ROUGE scores to compare the fully fine-tuned model with the original model.

original_model_results = rouge.compute(
    predictions=original_model_summaries,
    references=human_baseline_summaries[0:len(original_model_summaries)],
    use_aggregator=True,
    use_stemmer=True,
)

instruct_model_results = rouge.compute(
    predictions=instruct_model_summaries,
    references=human_baseline_summaries[0:len(instruct_model_summaries)],
    use_aggregator=True,
    use_stemmer=True,
)

print('ORIGINAL MODEL:')
print(original_model_results)
print('INSTRUCT MODEL:')
print(instruct_model_results)

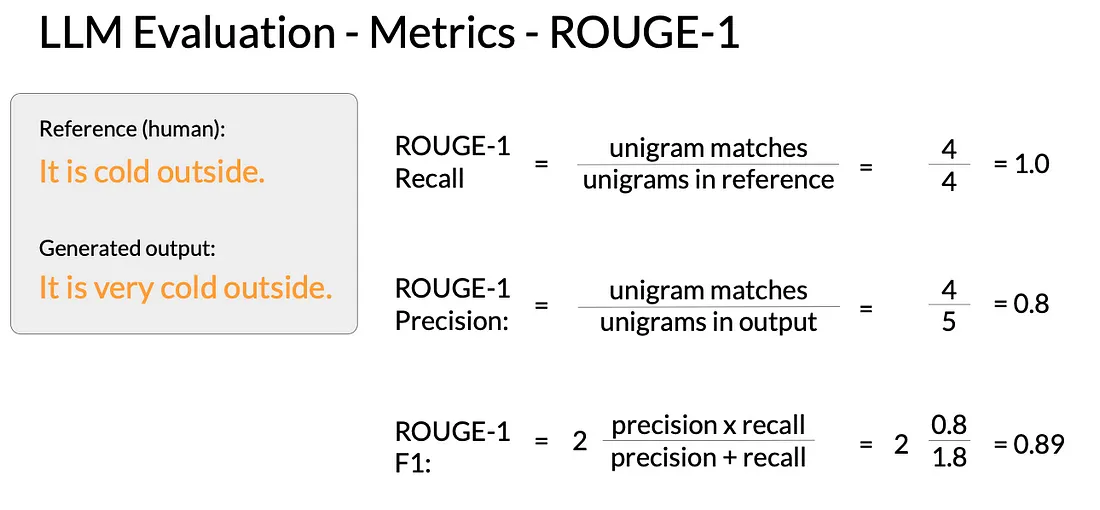

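Before looking at the metric comparison, let's go over how ROUGE works.

ROUGE (Recall-Oriented Understudy for Gisting Evaluation) is used mainly for text summarization. A generated summary is evaluated by comparing it with one or more reference summaries. The scores are reported as ROUGE-1, ROUGE-2, ..., ROUGE-n.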

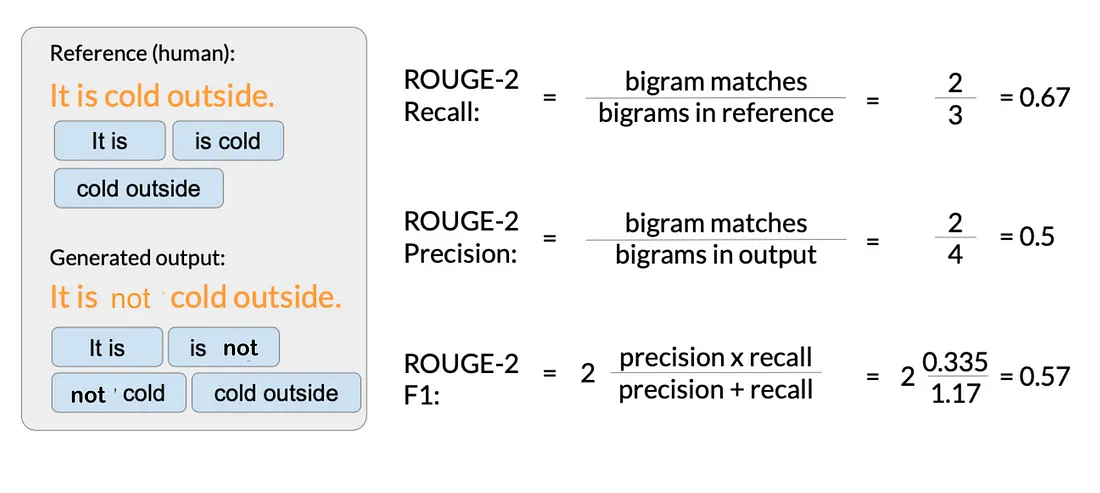

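ROUGE-1 is the unigram score: recall, precision, and F1 are computed much as in other machine learning tasks. For example, recall is the number of words (unigrams) that match between the reference and the generated output, divided by the number of unigrams in the reference. Below is an example of all the ROUGE-1 metrics; F1 is generally the one used most.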

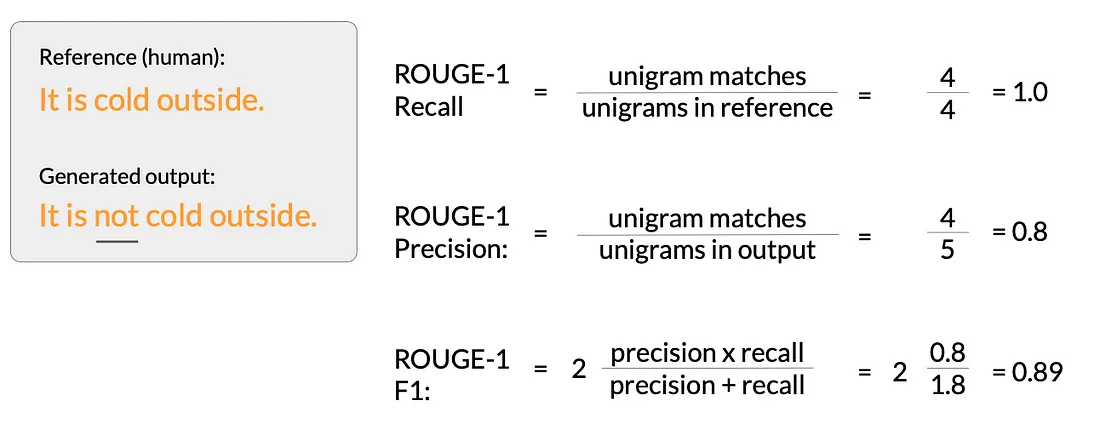
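The same arithmetic can be sketched in a few lines of Python. The two sentences are illustrative assumptions, `rouge_1` is a hypothetical helper, and the lowercasing and whitespace splitting are simplifications: the `rouge_score` package used earlier applies its own tokenization and optional stemming, so its numbers can differ. Both inputs are assumed to be non-empty.

```python
from collections import Counter

def rouge_1(reference, generated):
    """Unigram recall, precision and F1 with clipped match counts."""
    ref_counts = Counter(reference.lower().split())
    gen_counts = Counter(generated.lower().split())
    # Counter & Counter keeps the minimum count of every shared word.
    matches = sum((ref_counts & gen_counts).values())
    recall = matches / sum(ref_counts.values())
    precision = matches / sum(gen_counts.values())
    f1 = 2 * precision * recall / (precision + recall) if matches else 0.0
    return {'recall': recall, 'precision': precision, 'f1': f1}

scores = rouge_1('It is cold outside', 'It is very cold outside')
print(scores)
```

Here all four reference words appear in the output (recall 4/4), while only four of the five generated words appear in the reference (precision 4/5).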

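These are very basic metrics. They look only at individual words (hence the "1" in the name) and ignore word order, so they can misjudge the quality of a summary.

ROUGE-2, which matches pairs of consecutive words (bigrams), helps here: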

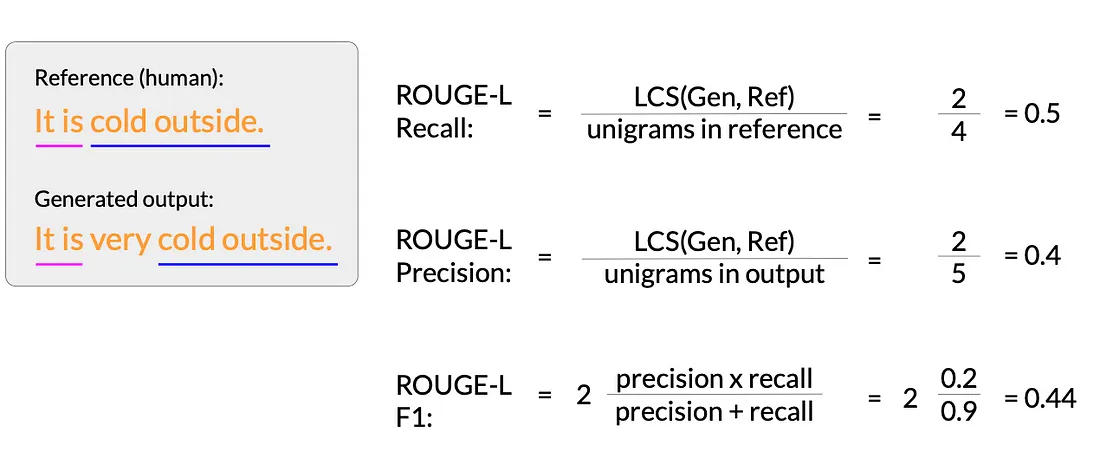
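A small sketch shows why moving from unigrams to bigrams matters. The sentences and the helpers `ngram_counts` and `rouge_n_f1` are assumptions for illustration, using plain whitespace tokenization rather than the `rouge_score` implementation.

```python
from collections import Counter

def ngram_counts(text, n):
    """Count the n-grams (tuples of lowercased words) in a sentence."""
    words = text.lower().split()
    return Counter(tuple(words[i:i + n]) for i in range(len(words) - n + 1))

def rouge_n_f1(reference, generated, n):
    """F1 over clipped n-gram matches; 0.0 when nothing overlaps."""
    ref, gen = ngram_counts(reference, n), ngram_counts(generated, n)
    matches = sum((ref & gen).values())
    if matches == 0:
        return 0.0
    recall = matches / sum(ref.values())
    precision = matches / sum(gen.values())
    return 2 * precision * recall / (precision + recall)

reference = 'It is cold outside'
scrambled = 'outside cold is it'
unigram_f1 = rouge_n_f1(reference, scrambled, n=1)  # word order is ignored
bigram_f1 = rouge_n_f1(reference, scrambled, n=2)   # word order matters
print(unigram_f1, bigram_f1)
```

The scrambled sentence contains every reference word, so its unigram score is perfect, yet it shares no bigram with the reference.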

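The same approach gives the ROUGE-n score for any n. There is also the ROUGE-L score, which starts by finding the longest common subsequence (LCS). In this example, the two sentences above have an LCS length of 2, from the matching sequences "It is" and "cold outside".

The ROUGE-L score is computed as: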

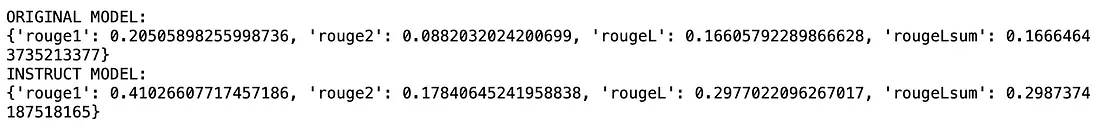
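Written out, the usual sentence-level ROUGE-L definitions follow the same recall, precision, and F1 pattern as ROUGE-1. The symbols here are my own notation: $X$ is the reference with $m$ words, $Y$ is the generated summary with $n$ words, and $\mathrm{LCS}(X, Y)$ is the length of their longest common subsequence.

```latex
R_{\mathrm{lcs}} = \frac{\mathrm{LCS}(X, Y)}{m}, \qquad
P_{\mathrm{lcs}} = \frac{\mathrm{LCS}(X, Y)}{n}, \qquad
F_{\mathrm{lcs}} = \frac{2\, P_{\mathrm{lcs}}\, R_{\mathrm{lcs}}}{P_{\mathrm{lcs}} + R_{\mathrm{lcs}}}
```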

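Using these metrics, we compared the fully fine-tuned model with the original model.

Across all the metrics, the fully fine-tuned model performs better on the task it was trained on, at the cost of GPU memory, training time, and the risk of catastrophic forgetting.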