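Fine-Tuning Llama-3.1-8B for Function Calling with LoRA

Introduction

Fine-tuning large language models (LLMs) has become a key step in adapting pretrained models to specific tasks and domains. Pretrained models offer impressive general capabilities, but fine-tuning lets us optimize them for specific use cases (such as translation or function calling), domain-specific tasks (such as legal or financial work), and custom applications. One of the main challenges in working with LLMs is the compute that fine-tuning requires: traditional full fine-tuning needs large amounts of GPU memory and compute time. This is where LoRA (Low-Rank Adaptation) and frameworks such as Unsloth come in, making fine-tuning more efficient and more accessible.

In this article, we explore how to fine-tune the Llama-3.1-8B model with Unsloth, a toolkit built for optimizing and fine-tuning LLMs, to strengthen its function-calling ability. We use LoRA for efficient parameter updates, integrate Weights & Biases (W&B) for experiment tracking, and later use vLLM for high-performance inference and serving.

Why Function Calling Matters for Language Models

Function calling lets a language model interact with external systems and carry out real-world tasks. By integrating external functions and APIs, developers can build applications that do more than generate text: they can solve specific problems, retrieve information, and act on user input.

Function calling is especially valuable in smaller language models (SLMs), because these models can handle such tasks efficiently on more accessible hardware. It enables tasks such as:

- Converting natural language into API calls or valid database queries.
- Building conversational knowledge-retrieval systems that interact with real-time data.

Unlike large models, a properly fine-tuned SLM can deliver these interactions with far fewer resources. Techniques like LoRA let us add task-specific capabilities without large memory requirements, which makes SLMs suitable for real-time applications.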

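Tools and Techniques Overview

Unsloth

An optimized LLM fine-tuning framework that, according to the project's own claims, offers:

- Training speedups of up to 30x and up to 60% lower memory use
- Support for multiple hardware setups (NVIDIA, AMD, and Intel GPUs)
- Smart weight-optimization techniques for better memory efficiency
- Integration with popular optimizations such as Flash-Attention 2
- Compatibility with major LLMs (such as Mistral, Llama, and Gemma)
- Efficient operation on local GPUs and on Google Colab

LoRA (Low-Rank Adaptation)

A parameter-efficient fine-tuning method. It freezes the existing weights and adds small trainable rank-decomposition matrices alongside them, which reduces memory requirements and allows task-specific adaptation without updating every model parameter.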
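To make the saving concrete, the following sketch counts trainable parameters for a single weight matrix, once for a full update and once for a rank-r LoRA update. The 4096 x 4096 shape and the helper name `lora_param_counts` are illustrative assumptions, not values taken from the model configuration.

```python
def lora_param_counts(d_out: int, d_in: int, r: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts for one weight matrix."""
    full = d_out * d_in          # updating the d_out x d_in matrix W directly
    lora = r * (d_in + d_out)    # A is r x d_in, B is d_out x r
    return full, lora


# Illustrative shape: a square 4096 x 4096 projection adapted with rank 16.
full, lora = lora_param_counts(4096, 4096, 16)
print(f"full update: {full:,} parameters")
print(f"LoRA update: {lora:,} parameters ({lora / full:.2%} of full)")
```

For this shape the LoRA update trains well under 1% of the parameters a full update would touch, which is where the memory saving comes from.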

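Weights & Biases (W&B)

A monitoring platform for tracking training metrics, visualizing performance, and managing experiments.

vLLM

An open-source library for efficient LLM serving and inference. It introduces techniques such as PagedAttention and continuous batching to reduce memory waste and increase throughput.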
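The idea behind PagedAttention can be pictured as paging for the KV cache: memory is split into fixed-size blocks, and each sequence keeps a table of the blocks it owns. The toy allocator below is only a sketch of that bookkeeping under assumed names (`ToyBlockAllocator`, `BLOCK_SIZE`); it is not vLLM's implementation and stores no tensors.

```python
import math

BLOCK_SIZE = 16  # tokens per KV-cache block (illustrative value)


class ToyBlockAllocator:
    """Hands out fixed-size cache blocks to sequences on demand."""

    def __init__(self, num_blocks: int):
        self.free = list(range(num_blocks))   # ids of unused physical blocks
        self.tables: dict[str, list[int]] = {}  # sequence id -> owned block ids

    def ensure(self, seq_id: str, num_tokens: int) -> list[int]:
        """Grow the block table of seq_id until it can hold num_tokens tokens."""
        table = self.tables.setdefault(seq_id, [])
        needed = math.ceil(num_tokens / BLOCK_SIZE)
        while len(table) < needed:
            if not self.free:
                raise MemoryError("no free KV-cache blocks left")
            table.append(self.free.pop())
        return table

    def release(self, seq_id: str) -> None:
        """Return all blocks of a finished sequence to the free pool."""
        self.free.extend(self.tables.pop(seq_id, []))


alloc = ToyBlockAllocator(num_blocks=8)
print(len(alloc.ensure("request-a", 20)))  # 20 tokens fit in 2 blocks
print(len(alloc.ensure("request-a", 33)))  # growing to 33 tokens needs a 3rd block
alloc.release("request-a")
print(len(alloc.free))                     # all 8 blocks are free again
```

Because blocks are allocated only as a sequence grows, memory is not reserved up front for the maximum length, which is what leaves room for batching more requests together.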

Step-by-Step Guide to Fine-Tuning and Deployment

Setting Up Weights & Biases

To monitor and log the fine-tuning process, we first configure W&B. The function below handles authentication and initializes a new run with the given project and run names.

import os

import wandb
from dotenv import load_dotenv

load_dotenv()  # Load variables from a local .env file into the environment


def setup_wandb(project_name: str, run_name: str):
    # os.getenv returns None (it does not raise KeyError) when the variable is unset
    api_key = os.getenv("WANDB_API_KEY")
    if api_key is None:
        raise EnvironmentError("WANDB_API_KEY is not set in the environment variables.")

    try:
        wandb.login(key=api_key)
        print("Successfully logged into WandB.")
    except Exception as e:
        print(f"Error logging into WandB: {e}")

    # Optional: log model checkpoints, watch gradients and parameters, silence console output
    os.environ["WANDB_LOG_MODEL"] = "checkpoint"
    os.environ["WANDB_WATCH"] = "all"
    os.environ["WANDB_SILENT"] = "true"

    # Initialize the WandB run
    try:
        wandb.init(project=project_name, name=run_name)
        print(f"WandB run initialized: Project - {project_name}, Run - {run_name}")
    except Exception as e:
        print(f"Error initializing WandB run: {e}")


setup_wandb(project_name="<project_name>", run_name="<run_name>")

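Hugging Face Authentication

To download the function-calling dataset published by Salesforce, and later to upload the fine-tuned model, we first load the Hugging Face token from an environment variable, check that it is set, and use it to authenticate against the Hugging Face Hub.

from huggingface_hub import login

hf_token = os.getenv("HUGGINGFACE_TOKEN")
if hf_token is None:
    raise EnvironmentError("HUGGINGFACE_TOKEN is not set in the environment variables.")

login(hf_token)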

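Loading the Base Model

This setup uses Unsloth's FastLanguageModel to load the Llama-3.1-8B-Instruct model and its tokenizer, with the following options:

- A configurable sequence length (max_seq_length=2048): the maximum input length the model will process, often called the context length.
- Automatic data type detection (dtype=None), so the best precision for the hardware is chosen automatically.
- A 4-bit quantization switch (load_in_4bit=False): here it is turned off, so the model loads at its default precision for better fidelity at the cost of more memory.

import torch
from unsloth import FastLanguageModel

max_seq_length = 2048  # Unsloth auto supports RoPE Scaling internally!
dtype = None  # None for auto detection
load_in_4bit = False  # Set to True to use 4bit quantization and reduce memory usage

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "unsloth/Meta-Llama-3.1-8B-Instruct",
    max_seq_length = max_seq_length,
    dtype = dtype,
    load_in_4bit = load_in_4bit,
)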
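The trade-off behind load_in_4bit can be estimated with simple arithmetic. The sketch below computes the memory taken by the weights alone at 16-bit and 4-bit precision; the round figure of 8 billion parameters is an assumption for illustration, and the estimate ignores activations, optimizer state, and the small overhead of quantization constants.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Memory needed to hold the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 1024**3


N_PARAMS = 8.0e9  # assumption: round parameter count for an "8B" model

for bits in (16, 4):
    print(f"{bits}-bit weights: ~{weight_memory_gib(N_PARAMS, bits):.1f} GiB")
```

Under these assumptions the 16-bit weights need roughly four times the memory of the 4-bit weights, which is why 4-bit loading is the usual choice on smaller GPUs.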

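Configuring LoRA for Efficient Fine-Tuning

This code configures parameter-efficient fine-tuning (PEFT) of the Llama-3.1 model with LoRA. Adapting selected components rather than the whole model keeps memory use low and speeds up training. The key parameters are:

- r=16: the rank of the LoRA matrices; a higher rank adds capacity but uses more memory.
- target_modules: the layers to adapt, here the attention projections (q_proj, k_proj, v_proj, o_proj) and the MLP projections (gate_proj, up_proj, down_proj).
- lora_alpha=16: the scaling factor for the LoRA update, which is multiplied by lora_alpha / r.
- lora_dropout=0: disables dropout on the LoRA layers; Unsloth's optimized path uses 0.
- use_gradient_checkpointing="unsloth": reduces memory use, especially for long contexts.
- bias="none": adds no trainable bias terms.
- random_state=3407: fixes the seed so training runs are reproducible.
- use_rslora=False: disables rank-stabilized LoRA (rsLoRA), which is reasonable for standard, less complex tasks.
- loftq_config=None: disables LoftQ, which would otherwise use an advanced initialization to improve accuracy at the cost of higher memory use at startup.

This setup allows more resource-efficient fine-tuning while preserving model performance.

model = FastLanguageModel.get_peft_model(
    model,
    r=16,  # LoRA rank - suggested values: 8, 16, 32, 64, 128
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj",
                    "gate_proj", "up_proj", "down_proj"],
    lora_alpha=16,  # LoRA update is scaled by lora_alpha / r
    lora_dropout=0,  # Supports any, but = 0 is optimized
    bias="none",  # Supports any, but = "none" is optimized
    use_gradient_checkpointing="unsloth",  # Ideal for long context tuning
    random_state=3407,
    use_rslora=False,  # Disable rank-stabilized LoRA for simpler tasks
    loftq_config=None  # No LoftQ, for standard fine-tuning
)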
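How lora_alpha, r, and use_rslora interact is easiest to see as a formula. The sketch below computes the factor applied to the low-rank update: lora_alpha / r for standard LoRA and lora_alpha / sqrt(r) for rank-stabilized LoRA. The helper name `lora_scaling` is an assumption for illustration.

```python
import math


def lora_scaling(lora_alpha: float, r: int, use_rslora: bool = False) -> float:
    """Factor by which the low-rank update B @ A is multiplied."""
    if use_rslora:
        return lora_alpha / math.sqrt(r)  # rank-stabilized LoRA
    return lora_alpha / r                 # standard LoRA


print(lora_scaling(16, 16))                   # 1.0
print(lora_scaling(16, 16, use_rslora=True))  # 4.0
print(lora_scaling(16, 64))                   # 0.25
```

With the values used above (lora_alpha=16, r=16) the standard factor is 1.0. Raising the rank without raising lora_alpha shrinks the update, which is the effect rsLoRA is designed to soften.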

Loading and Processing the Dataset

For training we use the Salesforce/xlam-function-calling-60k dataset, which was designed specifically for function-calling tasks.

Rather than using the entire dataset, we start with a manageable subset of 15K samples. This lets us evaluate the model early and adjust more quickly. A sample size of 10-20K is a good balance: large enough to give meaningful signal, small enough to keep memory and training time reasonable.

from datasets import load_dataset

# Loading the dataset
dataset = load_dataset("Salesforce/xlam-function-calling-60k", split="train", token=hf_token)

# Selecting a subset of 15K samples for fine-tuning
dataset = dataset.select(range(15000))
print(f"Using a sample size of {len(dataset)} for fine-tuning.")

Using Unsloth's chat template, we convert the raw records into prompt text in the format the model expects. This step standardizes the function-calling prompts so the model can learn to read the tool definitions and predict the calls in a structured way.

from unsloth.chat_templates import get_chat_template

# Initialize the tokenizer with the chat template and mapping
tokenizer = get_chat_template(
    tokenizer,
    chat_template = "llama-3",
    mapping = {"role" : "from", "content" : "value", "user" : "human", "assistant" : "gpt"},  # ShareGPT style
    map_eos_token = True,  # Maps <|im_end|> to <|eot_id|> instead
)


def formatting_prompts_func(examples):
    convos = []
    # Iterate through each item in the batch (examples are structured as lists of values)
    for query, tools, answers in zip(examples['query'], examples['tools'], examples['answers']):
        tool_user = {
            "content": f"You are a helpful assistant with access to the following tools or function calls. Your task is to produce a sequence of tools or function calls necessary to generate response to the user utterance. Use the following tools or function calls as required:\n{tools}",
            "role": "system"
        }
        ques_user = {
            "content": f"{query}",
            "role": "user"
        }
        assistant = {
            "content": f"{answers}",
            "role": "assistant"
        }
        convos.append([tool_user, ques_user, assistant])

    # Render each conversation to a single training string
    texts = [tokenizer.apply_chat_template(convo, tokenize=False, add_generation_prompt=False) for convo in convos]
    return {"text": texts}


# Apply the formatting on dataset
dataset = dataset.map(formatting_prompts_func, batched = True)
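To see what the rendered training text roughly looks like, here is a minimal pure-Python sketch of the Llama 3 prompt layout. It is an approximation written for illustration: the function `render_llama3` and the sample conversation are assumptions, and the template shipped with the tokenizer remains the authority on the exact output.

```python
def render_llama3(messages: list[dict], add_generation_prompt: bool = False) -> str:
    """Render role/content messages in the Llama 3 header-and-eot layout."""
    text = "<|begin_of_text|>"
    for message in messages:
        text += (
            f"<|start_header_id|>{message['role']}<|end_header_id|>\n\n"
            f"{message['content'].strip()}<|eot_id|>"
        )
    if add_generation_prompt:
        # Open an assistant turn so the model continues from here
        text += "<|start_header_id|>assistant<|end_header_id|>\n\n"
    return text


# Hypothetical record in the same system/user/assistant shape as above
convo = [
    {"role": "system", "content": "Use the following tools as required:\n[...]"},
    {"role": "user", "content": "What is the date today?"},
    {"role": "assistant", "content": '[{"name": "get_current_date", "arguments": {}}]'},
]
rendered = render_llama3(convo)
print(rendered)
```

During training the assistant turn is included and closed with its end-of-turn token; at inference time only the system and user turns are rendered and the generation prompt is appended.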

Defining the Training Arguments

TrainingArguments defines the hyperparameters and logging configuration for fine-tuning. Each setting below affects either how the model is optimized or how progress is monitored.

from transformers import TrainingArguments

args = TrainingArguments(
    per_device_train_batch_size = 8,  # Controls the batch size per device
    gradient_accumulation_steps = 2,  # Accumulates gradients to simulate a larger batch
    warmup_steps = 5,
    learning_rate = 2e-4,  # Sets the learning rate for optimization
    num_train_epochs = 3,
    fp16 = not torch.cuda.is_bf16_supported(),  # Fall back to fp16 when bf16 is unavailable
    bf16 = torch.cuda.is_bf16_supported(),
    optim = "adamw_8bit",
    weight_decay = 0.01,  # Regularization term for preventing overfitting
    lr_scheduler_type = "linear",  # Chooses a linear learning rate decay
    seed = 3407,
    output_dir = "outputs",
    report_to = "wandb",  # Enables Weights & Biases (W&B) logging
    logging_steps = 1,  # Log metrics to W&B at every step
    logging_strategy = "steps",  # Log by step count rather than by epoch
    save_strategy = "no",  # No intermediate checkpoints are written
    load_best_model_at_end = False,  # No checkpoints or evaluation are configured, so there is no best model to reload
    save_only_model = False  # Only relevant if checkpointing is enabled: False also saves optimizer and scheduler state
)
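The batch-size settings determine how many optimizer updates the run performs. The sketch below works this out for the values used above, assuming a single GPU and the 15K-sample subset; the helper name `training_schedule` is illustrative, and the trainer's own rounding may differ by a few steps.

```python
import math


def training_schedule(num_samples: int, per_device_batch: int, grad_accum: int,
                      epochs: int, num_devices: int = 1) -> tuple[int, int, int]:
    """Return (effective batch size, updates per epoch, total updates)."""
    effective_batch = per_device_batch * grad_accum * num_devices
    steps_per_epoch = math.ceil(num_samples / effective_batch)
    return effective_batch, steps_per_epoch, steps_per_epoch * epochs


effective_batch, steps_per_epoch, total_steps = training_schedule(15_000, 8, 2, 3)
print(f"effective batch size: {effective_batch}")
print(f"optimizer updates per epoch: ~{steps_per_epoch}")
print(f"optimizer updates in total: ~{total_steps}")
```

With warmup_steps = 5, the warmup covers only a tiny fraction of a run of this length, so the learning rate spends almost the whole run on the linear decay.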

Training with SFTTrainer and Unsloth

SFTTrainer is configured for supervised fine-tuning with our tokenizer, the preprocessed dataset, and memory-saving options. Running it through unsloth_train lets us use Unsloth's optimized gradient checkpointing, which matters for long sequences and for keeping memory use down.

from trl import SFTTrainer

trainer = SFTTrainer(
    model = model,
    processing_class = tokenizer,
    train_dataset = dataset,
    dataset_text_field = "text",
    max_seq_length = max_seq_length,
    dataset_num_proc = 2,
    packing = False,  # Can make training 5x faster for short sequences.
    args = args
)

The following code records the GPU memory state before training starts.

# Show current memory stats
gpu_stats = torch.cuda.get_device_properties(0)
start_gpu_memory = round(torch.cuda.max_memory_reserved() / 1024 / 1024 / 1024, 3)
max_memory = round(gpu_stats.total_memory / 1024 / 1024 / 1024, 3)
print(f"GPU = {gpu_stats.name}. Max memory = {max_memory} GB.")
print(f"{start_gpu_memory} GB of memory reserved.")

With the setup complete, we can start training the model.

from unsloth import unsloth_train

trainer_stats = unsloth_train(trainer)
print(trainer_stats)

wandb.finish()

After training, the following code compares the final memory use with the starting value. It isolates the memory attributable to LoRA training and expresses both figures as a percentage of total GPU memory.

# Show final memory and time stats
used_memory = round(torch.cuda.max_memory_reserved() / 1024 / 1024 / 1024, 3)
used_memory_for_lora = round(used_memory - start_gpu_memory, 3)
used_percentage = round(used_memory / max_memory * 100, 3)
lora_percentage = round(used_memory_for_lora / max_memory * 100, 3)
print(f"{trainer_stats.metrics['train_runtime']} seconds used for training.")
print(f"{round(trainer_stats.metrics['train_runtime']/60, 2)} minutes used for training.")
print(f"Peak reserved memory = {used_memory} GB.")
print(f"Peak reserved memory for training = {used_memory_for_lora} GB.")
print(f"Peak reserved memory % of max memory = {used_percentage} %.")
print(f"Peak reserved memory for training % of max memory = {lora_percentage} %.")

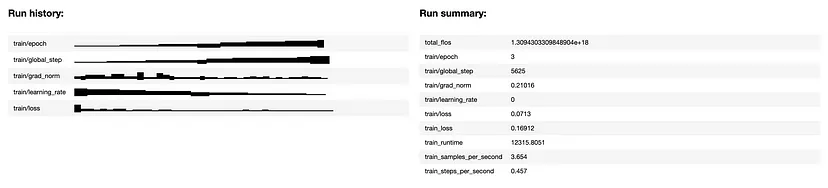

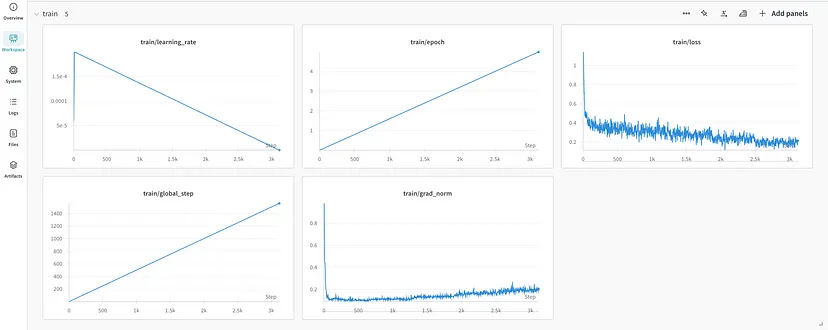

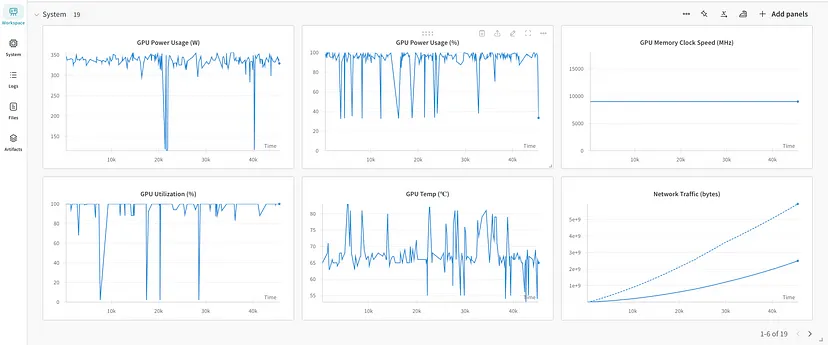

In Weights & Biases we can visualize training and system metrics, such as memory use, training duration, training loss, and accuracy, to see how the model behaves over time.

Saving and Deploying the Model

After training, we save the fine-tuned model locally and push it to the Hugging Face Hub for later access and deployment. Note that this step saves only the LoRA adapters, not the full model.

# Local saving
model.save_pretrained("<lora_model_name>")
tokenizer.save_pretrained("<lora_model_name>")

# Online saving
model.push_to_hub("<hf_username/lora_model_name>", token = hf_token)
tokenizer.push_to_hub("<hf_username/lora_model_name>", token = hf_token)

To merge the LoRA adapters into the base model and save the result in 16-bit precision, which is the form we serve with vLLM, use:

# Merge to 16bit
model.save_pretrained_merged("<model_name>", tokenizer, save_method = "merged_16bit",)
model.push_to_hub_merged("<hf_username/model_name>", tokenizer, save_method = "merged_16bit", token = hf_token)

Evaluating the Fine-Tuned Model

We first load the fine-tuned model and its tokenizer, either from local disk or from the Hugging Face Hub.

from unsloth import FastLanguageModel
from transformers import TextStreamer

max_seq_length = 2048
dtype = None
load_in_4bit = False

model, tokenizer = FastLanguageModel.from_pretrained(
    model_name = "<model_name>",  # Trained model either locally or from huggingface
    max_seq_length = max_seq_length,
    dtype = dtype,
    load_in_4bit = load_in_4bit,
)
FastLanguageModel.for_inference(model)  # Enable native 2x faster inference

Next we define the utility functions the model can call to retrieve data. For demonstration purposes, this article uses the following functions:

- get_current_date: returns the current date in 'YYYY-MM-DD' format.
- get_current_weather: retrieves weather data for a given location from the OpenWeatherMap API.
- celsius_to_fahrenheit: converts a temperature from Celsius to Fahrenheit.
- get_nasa_picture_of_the_day: fetches the details of NASA's Picture of the Day.
- get_stock_price: returns the low and high stock prices for a given ticker and date, using Alpha Vantage data.

import os
import re
import json
import requests
from datetime import datetime

import nasapy

WEATHER_API_KEY = os.getenv("WEATHER_API_KEY")
NASA_API_KEY = os.getenv("NASA_API_KEY")
STOCK_API_KEY = os.getenv("STOCK_API_KEY")


def get_current_date() -> str:
    """
    Fetches the current date in the format YYYY-MM-DD.

    Returns:
        str: A string representing the current date.
    """
    print("Getting the current date")
    try:
        current_date = datetime.now().strftime("%Y-%m-%d")
        return current_date
    except Exception as e:
        print(f"Error fetching current date: {e}")
        return "NA"


def get_current_weather(location: str) -> dict:
    """
    Fetches the current weather for a given location.

    Args:
        location (str): The name of the city for which to retrieve the weather information.

    Returns:
        dict: A dictionary containing weather information such as temperature, weather description, and humidity.
    """
    print(f"Getting current weather for {location}")
    try:
        weather_url = f"http://api.openweathermap.org/data/2.5/weather?q={location}&appid={WEATHER_API_KEY}&units=metric"
        weather_data = requests.get(weather_url)
        data = weather_data.json()
        weather_description = data["weather"][0]["description"]
        temperature = data["main"]["temp"]
        humidity = data["main"]["humidity"]
        return {
            "description": weather_description,
            "temperature": temperature,
            "humidity": humidity
        }
    except Exception as e:
        print(f"Error fetching weather data: {e}")
        return {"weather": "NA"}


def celsius_to_fahrenheit(celsius: float) -> float:
    """
    Converts a temperature from Celsius to Fahrenheit.

    Args:
        celsius (float): Temperature in degrees Celsius.

    Returns:
        float: Temperature in degrees Fahrenheit.
    """
    print(f"Converting {celsius}°C to Fahrenheit")
    try:
        fahrenheit = (celsius * 9/5) + 32
        return fahrenheit
    except Exception as e:
        print(f"Error converting temperature: {e}")
        return None


def get_nasa_picture_of_the_day(date: str) -> dict:
    """
    Fetches NASA's Picture of the Day information for a given date.

    Args:
        date (str): The date for which to retrieve the picture in 'YYYY-MM-DD' format.

    Returns:
        dict: A dictionary containing the title, explanation, and URL of the image or video.
    """
    print(f"Getting NASA's Picture of the Day for {date}")
    try:
        nasa = nasapy.Nasa(key = NASA_API_KEY)
        apod = nasa.picture_of_the_day(date = date, hd=True)
        title = apod.get("title", "No Title")
        explanation = apod.get("explanation", "No Explanation")
        url = apod.get("url", "No URL")
        return {
            "title": title,
            "explanation": explanation,
            "url": url
        }
    except Exception as e:
        print(f"Error fetching NASA's Picture of the Day: {e}")
        return {"error": "Unable to fetch NASA Picture of the Day"}


def get_stock_price(ticker: str, date: str) -> tuple[str, str]:
    """
    Retrieves the lowest and highest stock prices for a given ticker and date.

    Args:
        ticker (str): The stock ticker symbol, e.g., "IBM".
        date (str): The date in "YYYY-MM-DD" format for which you want to get stock prices.

    Returns:
        tuple: A tuple containing the low and high stock prices on the given date, or ("none", "none") if not found.
    """
    print(f"Getting stock price for {ticker} on {date}")
    try:
        stock_url = f"https://www.alphavantage.co/query?function=TIME_SERIES_DAILY&symbol={ticker}&apikey={STOCK_API_KEY}"
        stock_data = requests.get(stock_url)
        # Parse the response once and pick the entry for the requested date
        daily = stock_data.json()["Time Series (Daily)"][date]
        stock_low = daily["3. low"]
        stock_high = daily["2. high"]
        return stock_low, stock_high
    except Exception as e:
        print(f"Error fetching stock data: {e}")
        return "none", "none"


# Registry that maps function names produced by the model to Python callables
available_function_calls = {
    "get_current_date": get_current_date,
    "get_current_weather": get_current_weather,
    "celsius_to_fahrenheit": celsius_to_fahrenheit,
    "get_nasa_picture_of_the_day": get_nasa_picture_of_the_day,
    "get_stock_price": get_stock_price,
}

Next, we create a list of the available functions and their definitions. The code below defines metadata for each function: its name, description, and required parameters. This metadata is what the model sees in a chat-style interface, and it is what lets the model decide which functions to call for a given user query.

functions = [
    {
        "name": "get_current_date",
        "description": "Fetches the current date in the format YYYY-MM-DD.",
        "parameters": {
            "type": "object",
            "properties": {},
            "required": [],
        },
    },
    {
        "name": "get_current_weather",
        "description": "Get the current weather",
        "parameters": {
            "type": "object",
            "properties": {
                "location": {
                    "type": "string",
                    "description": "The city and country code, e.g. San Francisco, US",
                }
            },
            "required": ["location"],
        },
    },
    {
        "name": "celsius_to_fahrenheit",
        "description": "Converts a temperature from Celsius to Fahrenheit.",
        "parameters": {
            "type": "object",
            "properties": {
                "celsius": {
                    "type": "number",
                    "description": "Temperature in degrees Celsius.",
                }
            },
            "required": ["celsius"],
        }
    },
    {
        "name": "get_nasa_picture_of_the_day",
        "description": "Fetches NASA's Picture of the Day information for a given date.",
        "parameters": {
            "type": "object",
            "properties": {
                "date": {
                    "type": "string",
                    "description": "Date in YYYY-MM-DD format for which to retrieve the picture.",
                }
            },
            "required": ["date"],
        },
    },
    {
        "name": "get_stock_price",
        "description": "Retrieves the lowest and highest stock price for a given ticker symbol and date. The ticker symbol must be a valid symbol for a publicly traded company on a major US stock exchange like NYSE or NASDAQ. The tool returns the low and high prices in USD.",
        "parameters": {
            "type": "object",
            "properties": {
                "ticker": {
                    "type": "string",
                    "description": "The stock ticker symbol, e.g. AAPL for Apple Inc.",
                },
                "date": {
                    "type": "string",
                    "description": "Date in YYYY-MM-DD format",
                }
            },
            "required": ["ticker", "date"],
        },
    }
]

# Serialize each definition to a JSON string for inclusion in the system prompt
available_tools_list = {
    "functions_str": [json.dumps(x) for x in functions],
}
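The same metadata can also be used to check a model-produced call before executing it. The sketch below is one possible check, under the assumption that calls arrive as dictionaries with "name" and "arguments" keys; the helper `missing_required_args` and the one-entry `example_schemas` list are illustrative, not part of the original workflow.

```python
def missing_required_args(call: dict, schemas: list[dict]) -> list[str]:
    """Return the required parameters that the call does not supply."""
    schema = next((s for s in schemas if s["name"] == call.get("name")), None)
    if schema is None:
        raise KeyError(f"Unknown function: {call.get('name')!r}")
    provided = call.get("arguments") or {}
    return [p for p in schema["parameters"]["required"] if p not in provided]


# Trimmed, hypothetical copy of one definition in the same shape as above
example_schemas = [
    {
        "name": "get_stock_price",
        "parameters": {
            "type": "object",
            "properties": {"ticker": {"type": "string"}, "date": {"type": "string"}},
            "required": ["ticker", "date"],
        },
    }
]

call = {"name": "get_stock_price", "arguments": {"ticker": "IBM"}}
print(missing_required_args(call, example_schemas))  # ['date']
```

Rejecting or repairing a call with missing arguments before dispatch gives a clearer error than letting the Python function fail on a missing parameter.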

In this code we specify the user question and build a structured list of chat messages. Together with the JSON list of available functions, these messages are passed through the tokenizer's chat template for the model to process.

query = "What is the current weather at the headquarters of IBM? Also, can you provide the stock prices for the company on October 29, 2024?"

chat = [
    {"role": "system", "content": f"You are a helpful assistant with access to the following function calls. Your task is to produce a sequence of function calls necessary to generate response to the user utterance. Use the following function calls as required.\n{available_tools_list}"},
    {"role": "user", "content": query}
]

The model then determines the appropriate function calls from the intent of the user query, as the function names in the generated response show.

inputs = tokenizer.apply_chat_template(
    chat,
    tokenize = True,
    add_generation_prompt = True,  # Must add for generation
    return_tensors = "pt",
).to("cuda")

outputs = model.generate(input_ids = inputs, max_new_tokens = 1024, use_cache = True)
response = tokenizer.batch_decode(outputs)[0]  # Decoded text includes the prompt and special tokens
print(response)

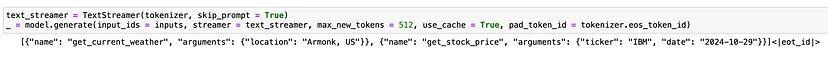

We can also use the TextStreamer class to stream the generated text, so the response appears token by token as it is produced.

text_streamer = TextStreamer(tokenizer, skip_prompt = True)
_ = model.generate(input_ids = inputs, streamer = text_streamer, max_new_tokens = 512, use_cache = True, pad_token_id = tokenizer.eos_token_id)

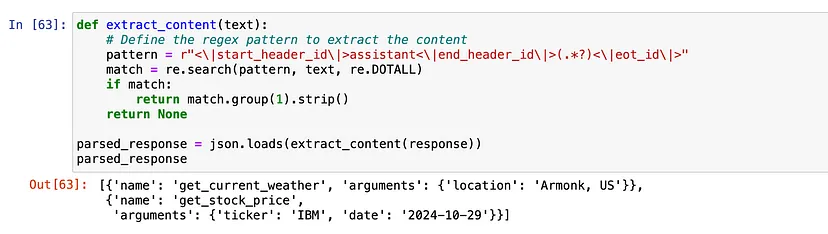

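Now that the model has told us which functions to call and with which arguments, we can execute those functions and pass their outputs back to the LLM so it can produce the final answer for the user.

To execute the calls, we first extract the assistant turn from the model output and parse it as JSON, which gives us the function names and arguments. This lets us invoke the selected functions dynamically, based on the user input.

def extract_content(text):
    # Capture the text between the assistant header and the end-of-turn token
    pattern = r"<\|start_header_id\|>assistant<\|end_header_id\|>(.*?)<\|eot_id\|>"
    match = re.search(pattern, text, re.DOTALL)
    if match:
        return match.group(1).strip()
    return None


content = extract_content(response)
# json.loads(None) would raise a TypeError, so fall back to an empty list
parsed_response = json.loads(content) if content else []
print(parsed_response)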

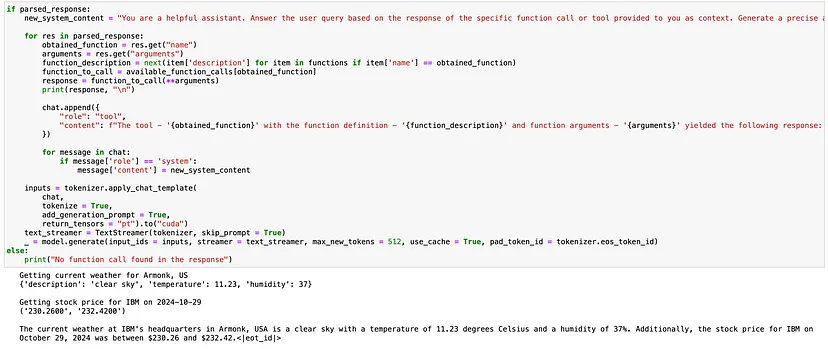
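Model output is not guaranteed to be valid JSON, so a more defensive parser can be useful. The sketch below is one possible variant, not the article's method: the function name `parse_tool_calls` is an assumption, and it returns an empty list instead of raising when the assistant turn is missing or malformed.

```python
import json
import re

# Assistant turn: from the assistant header up to <|eot_id|> or the end of the text
ASSISTANT_TURN = re.compile(
    r"<\|start_header_id\|>assistant<\|end_header_id\|>(.*?)(?:<\|eot_id\|>|$)",
    re.DOTALL,
)


def parse_tool_calls(decoded: str) -> list:
    """Return the list of tool calls in the decoded text, or [] if none parse."""
    match = ASSISTANT_TURN.search(decoded)
    if match is None:
        return []
    try:
        parsed = json.loads(match.group(1).strip())
    except json.JSONDecodeError:
        return []
    # Accept a single call object as well as a list of calls
    return parsed if isinstance(parsed, list) else [parsed]


sample = (
    "<|start_header_id|>assistant<|end_header_id|>\n\n"
    '[{"name": "get_current_date", "arguments": {}}]<|eot_id|>'
)
print(parse_tool_calls(sample))
print(parse_tool_calls("plain text without an assistant turn"))
```

Returning an empty list keeps the calling code simple: the same truthiness check covers "no call requested" and "output could not be parsed".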

The code below processes the parsed response by executing each requested function call to gather the information the user asked for. The result of each call is appended to the chat history as a tool message, and the system message is replaced with instructions for answering from those results. The model is then prompted again to generate the final response.

if parsed_response:
    new_system_content = "You are a helpful assistant. Answer the user query based on the response of the specific function call or tool provided to you as context. Generate a precise answer for given user query, synthesizing the provided information."

    for res in parsed_response:
        obtained_function = res.get("name")
        arguments = res.get("arguments")
        function_description = next(item['description'] for item in functions if item['name'] == obtained_function)
        function_to_call = available_function_calls[obtained_function]
        # Execute the requested function with the arguments chosen by the model
        tool_response = function_to_call(**arguments)
        print(tool_response)
        chat.append({
            "role": "tool",
            "content": f"The tool - '{obtained_function}' with the function definition - '{function_description}' and function arguments -'{arguments}' yielded the following response: {tool_response}\n."
        })

    # Swap the tool-selection system prompt for the answer-synthesis prompt
    for message in chat:
        if message['role'] == 'system':
            message['content'] = new_system_content

    inputs = tokenizer.apply_chat_template(
        chat,
        tokenize = True,
        add_generation_prompt = True,
        return_tensors = "pt").to("cuda")

    text_streamer = TextStreamer(tokenizer, skip_prompt = True)
    _ = model.generate(input_ids = inputs, streamer = text_streamer, max_new_tokens = 512, use_cache = True, pad_token_id = tokenizer.eos_token_id)
else:
    print("No function call found in the response")

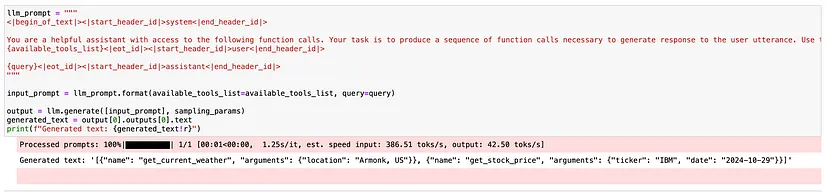
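The dispatch step can be tried without a model or API keys. The sketch below runs a list of parsed calls against a registry of stub functions and records errors instead of raising; `run_tool_calls` and `stub_registry` are assumptions made for illustration, standing in for the real functions defined earlier.

```python
def run_tool_calls(calls: list[dict], registry: dict) -> list[dict]:
    """Execute each call against the registry, collecting results and errors."""
    results = []
    for call in calls:
        name = call.get("name")
        func = registry.get(name)
        if func is None:
            results.append({"name": name, "error": "unknown function"})
            continue
        try:
            results.append({"name": name, "result": func(**(call.get("arguments") or {}))})
        except TypeError as exc:  # wrong or missing arguments
            results.append({"name": name, "error": str(exc)})
    return results


# Stub registry: a local function that needs no network access
stub_registry = {"celsius_to_fahrenheit": lambda celsius: celsius * 9 / 5 + 32}

calls = [
    {"name": "celsius_to_fahrenheit", "arguments": {"celsius": 100}},
    {"name": "get_moon_phase", "arguments": {}},  # hypothetical name, not registered
]
for entry in run_tool_calls(calls, stub_registry):
    print(entry)
```

Collecting errors as data rather than raising means a single hallucinated function name does not abort the other calls, and the error text can be fed back to the model as a tool message.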

Setting Up vLLM for Fast Inference

This code configures vLLM for high-throughput, memory-efficient inference by loading our saved merged model and initializing the engine with the parameters shown.

from vllm import LLM
from vllm.sampling_params import SamplingParams

model_name = "<hf_username/model_name>"

sampling_params = SamplingParams(max_tokens=768)

llm = LLM(
    model=model_name,
    max_model_len=2048,
    tokenizer_mode="auto",
    tensor_parallel_size=1,
    enforce_eager=True,
    gpu_memory_utilization=0.95
)

# Llama 3 prompt layout: each header is followed by a blank line
llm_prompt = """<|begin_of_text|><|start_header_id|>system<|end_header_id|>

You are a helpful assistant with access to the following function calls. Your task is to produce a sequence of function calls necessary to generate response to the user utterance. Use the following function calls as required.
{available_tools_list}<|eot_id|><|start_header_id|>user<|end_header_id|>

{query}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

"""

input_prompt = llm_prompt.format(available_tools_list=available_tools_list, query=query)

output = llm.generate([input_prompt], sampling_params)
generated_text = output[0].outputs[0].text
print(f"Generated text: {generated_text!r}")

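Conclusion

Fine-tuning the Llama-3.1-8B model with Unsloth and LoRA adapts it effectively to custom domains and specific tasks while keeping resource use low. LoRA makes fine-tuning more efficient and reduces memory consumption, which makes the approach a good fit for a wide range of applications.

In addition, integrating Weights & Biases (W&B) for experiment tracking streamlines the workflow and gives insight into the fine-tuning process, and vLLM provides high-throughput, memory-efficient model serving for robust performance in real-world scenarios.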