Model:

shi-labs/oneformer_ade20k_swin_large


OneFormer

OneFormer model trained on the ADE20k dataset (large-sized version, Swin backbone). It was introduced in the paper "OneFormer: One Transformer to Rule Universal Image Segmentation" by Jain et al. and first released in this repository.

Model description

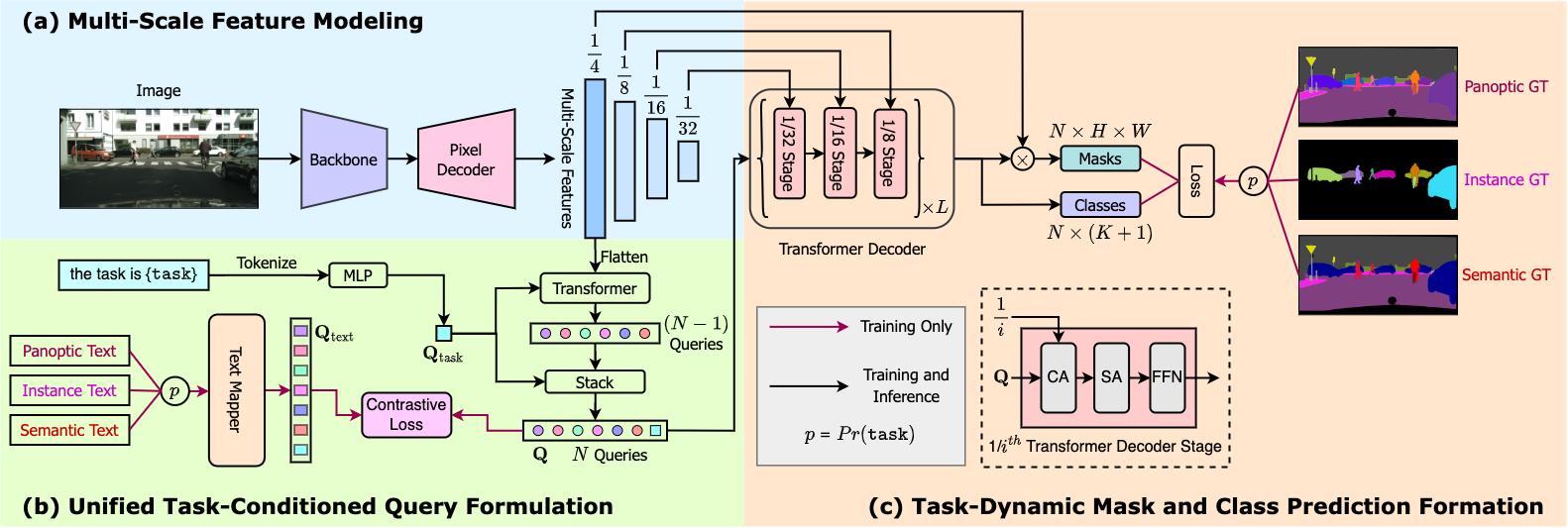

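OneFormer is the first multi-task universal image segmentation framework. It needs to be trained only once, with a single universal architecture, a single model, and a single dataset, to outperform existing specialized models across semantic, instance, and panoptic segmentation tasks. OneFormer uses a task token to condition the model on the task in focus, making the architecture task-guided during training and task-dynamic during inference, all with a single model.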
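To make the idea of a task token concrete, the sketch below shows how a single set of weights can be steered by a short text prompt naming the task. It assumes a prompt template of the form `"the task is {task}"`; the exact template and tokenization used internally by `OneFormerProcessor` may differ, and `build_task_prompt` and `SUPPORTED_TASKS` are hypothetical names used only for illustration.

```python
# Hypothetical sketch: build the text prompt that conditions one model on a task.
# Assumption: the template "the task is {task}" approximates what the processor
# tokenizes; the real preprocessing may differ.
SUPPORTED_TASKS = ("semantic", "instance", "panoptic")


def build_task_prompt(task: str) -> str:
    """Return the task prompt for one of the three supported tasks."""
    if task not in SUPPORTED_TASKS:
        raise ValueError(f"task must be one of {SUPPORTED_TASKS}, got {task!r}")
    return f"the task is {task}"


def build_task_prompts(tasks: list[str]) -> list[str]:
    """Build one prompt per image in a batch; the same weights serve every task."""
    return [build_task_prompt(task) for task in tasks]


if __name__ == "__main__":
    print(build_task_prompts(["semantic", "panoptic"]))
    # ['the task is semantic', 'the task is panoptic']
```

The design point is that switching tasks changes only this input, not the model: the same checkpoint is called three times with three different prompts.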

Intended uses & limitations

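You can use this particular checkpoint for semantic, instance, and panoptic segmentation. See the model hub to look for other fine-tuned versions on different datasets.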
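Because one checkpoint serves all three tasks, it can help to see how their outputs relate. A panoptic result assigns every pixel a segment id, and each segment carries a class label; collapsing segment ids into class ids yields a semantic map. The sketch below assumes the panoptic output has already been converted to plain Python: a 2D list of segment ids plus a list of `{"id": ..., "label_id": ...}` dicts, similar in shape to the `segments_info` returned by the Transformers panoptic post-processing. `panoptic_to_semantic` and the `ignore_label` default are hypothetical.

```python
from typing import Dict, List


def panoptic_to_semantic(
    segment_map: List[List[int]],
    segments_info: List[Dict[str, int]],
    ignore_label: int = -1,
) -> List[List[int]]:
    """Replace each pixel's segment id with that segment's class id.

    Pixels whose segment id has no entry in segments_info (for example,
    unassigned pixels) get ignore_label, a hypothetical placeholder value.
    """
    # Lookup table from segment id to class id.
    id_to_label = {seg["id"]: seg["label_id"] for seg in segments_info}
    return [[id_to_label.get(seg_id, ignore_label) for seg_id in row] for row in segment_map]


if __name__ == "__main__":
    # Segments 1 and 2 are two instances of the same class 12, segment 3 is class 0,
    # and segment id 0 marks a pixel that no segment claimed.
    segment_map = [[1, 1, 2], [3, 3, 0]]
    segments_info = [
        {"id": 1, "label_id": 12},
        {"id": 2, "label_id": 12},
        {"id": 3, "label_id": 0},
    ]
    print(panoptic_to_semantic(segment_map, segments_info))
    # [[12, 12, 12], [0, 0, -1]]
```

After the conversion, the two instances of class 12 are no longer distinguishable; keeping them apart is exactly what instance and panoptic segmentation add over semantic segmentation.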

How to use

Here is how to use this model:

from transformers import OneFormerProcessor, OneFormerForUniversalSegmentation
from PIL import Image
import requests
import torch

# Use the "resolve" URL so the raw image file (not an HTML page) is downloaded
url = "https://huggingface.co/datasets/shi-labs/oneformer_demo/resolve/main/ade20k.jpeg"
image = Image.open(requests.get(url, stream=True).raw)

# Loading a single model for all three tasks
processor = OneFormerProcessor.from_pretrained("shi-labs/oneformer_ade20k_swin_large")
model = OneFormerForUniversalSegmentation.from_pretrained("shi-labs/oneformer_ade20k_swin_large")

# Semantic Segmentation
semantic_inputs = processor(images=image, task_inputs=["semantic"], return_tensors="pt")
with torch.no_grad():
    semantic_outputs = model(**semantic_inputs)
# post-process with the processor
predicted_semantic_map = processor.post_process_semantic_segmentation(semantic_outputs, target_sizes=[image.size[::-1]])[0]

# Instance Segmentation
instance_inputs = processor(images=image, task_inputs=["instance"], return_tensors="pt")
with torch.no_grad():
    instance_outputs = model(**instance_inputs)
# post-process with the processor
predicted_instance_map = processor.post_process_instance_segmentation(instance_outputs, target_sizes=[image.size[::-1]])[0]["segmentation"]

# Panoptic Segmentation
panoptic_inputs = processor(images=image, task_inputs=["panoptic"], return_tensors="pt")
with torch.no_grad():
    panoptic_outputs = model(**panoptic_inputs)
# post-process with the processor
predicted_panoptic_map = processor.post_process_panoptic_segmentation(panoptic_outputs, target_sizes=[image.size[::-1]])[0]["segmentation"]
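The argument `target_sizes=[image.size[::-1]]` reverses the size because PIL reports `image.size` as `(width, height)`, while the post-processing expects `(height, width)`. Once a semantic map is available (a `torch` tensor of class ids; `.tolist()` turns it into nested lists), one simple way to inspect it is to count pixels per class and translate ids into names with a mapping such as `model.config.id2label`. The helpers below are hypothetical and operate on plain nested lists so they run without downloading the model; the label names in the demo are toy values, not read from the real config.

```python
from collections import Counter
from typing import Dict, List, Tuple


def pil_size_to_target_size(pil_size: Tuple[int, int]) -> Tuple[int, int]:
    """PIL gives (width, height); post-processing expects (height, width)."""
    width, height = pil_size
    return (height, width)


def count_pixels_per_label(
    semantic_map: List[List[int]], id2label: Dict[int, str]
) -> Dict[str, int]:
    """Count how many pixels were assigned to each class name.

    Class ids missing from id2label are reported as "unknown:<id>".
    """
    counts = Counter(class_id for row in semantic_map for class_id in row)
    return {id2label.get(class_id, f"unknown:{class_id}"): n for class_id, n in counts.items()}


if __name__ == "__main__":
    print(pil_size_to_target_size((640, 480)))  # (480, 640)
    toy_map = [[0, 0, 1], [2, 1, 1]]
    # Toy labels for illustration; use model.config.id2label for the real names.
    toy_labels = {0: "wall", 1: "building"}
    print(count_pixels_per_label(toy_map, toy_labels))
    # {'wall': 2, 'building': 3, 'unknown:2': 1}
```

With the real model, `count_pixels_per_label(predicted_semantic_map.tolist(), model.config.id2label)` would give a quick per-class breakdown of the prediction.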

For more examples, please refer to the documentation.

Citation

@article{jain2022oneformer,
  title={{OneFormer: One Transformer to Rule Universal Image Segmentation}},
  author={Jitesh Jain and Jiachen Li and MangTik Chiu and Ali Hassani and Nikita Orlov and Humphrey Shi},
  journal={arXiv},
  year={2022}
}