Model:

vinvino02/glpn-kitti

GLPN fine-tuned on KITTI

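This is the GLPN (Global-Local Path Networks) model fine-tuned on the KITTI dataset for monocular depth estimation. It was introduced by Kim et al. in the paper Global-Local Path Networks for Monocular Depth Estimation with Vertical CutDepth and first released in this repository.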

Disclaimer: The team releasing GLPN did not write a model card for this model, so this model card has been written by the Hugging Face team.

Model description

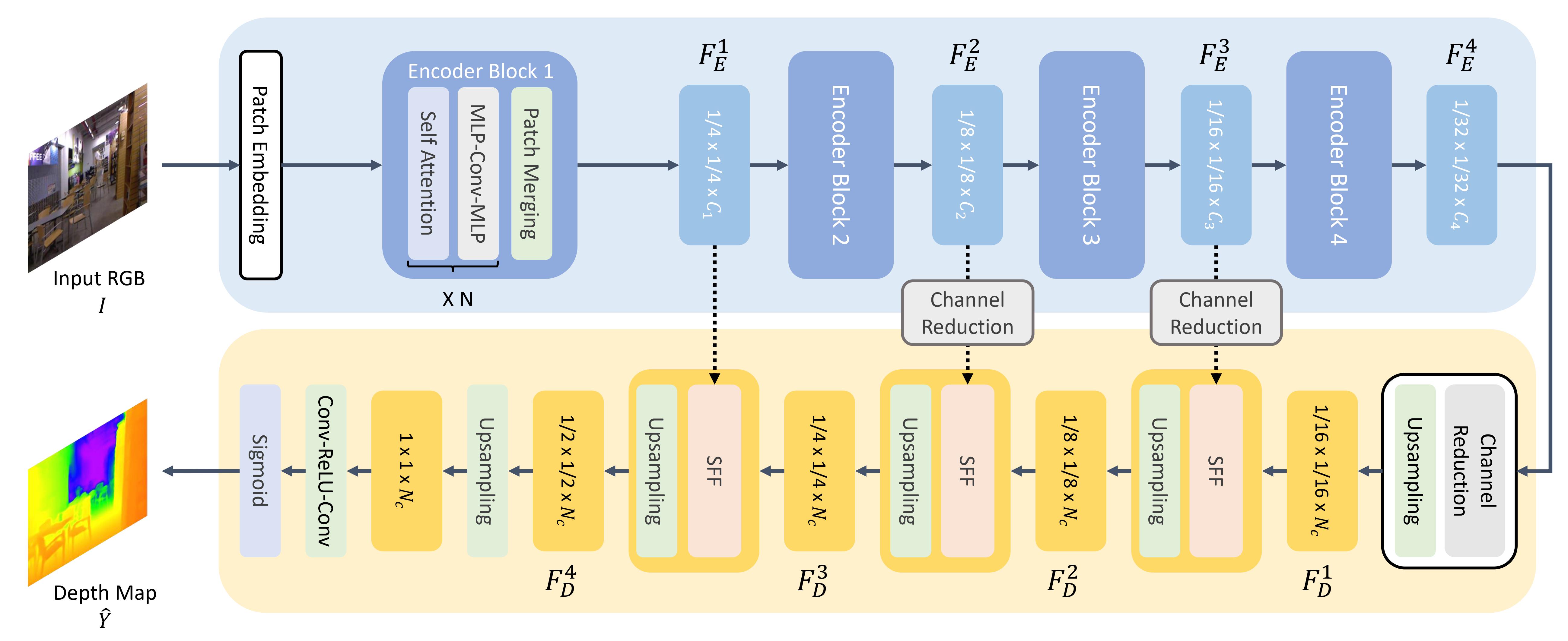

GLPN uses SegFormer as its backbone and adds a lightweight head on top for depth estimation.

Intended uses & limitations

You can use the raw model for monocular depth estimation. See the model hub to look for fine-tuned versions on a task that interests you.

How to use

Here is how to use this model:

    from transformers import GLPNImageProcessor, GLPNForDepthEstimation
    import torch
    import numpy as np
    from PIL import Image
    import requests

    url = "http://images.cocodataset.org/val2017/000000039769.jpg"
    image = Image.open(requests.get(url, stream=True).raw)

    # GLPNImageProcessor replaces GLPNFeatureExtractor, which is deprecated
    # in recent transformers releases
    image_processor = GLPNImageProcessor.from_pretrained("vinvino02/glpn-kitti")
    model = GLPNForDepthEstimation.from_pretrained("vinvino02/glpn-kitti")

    # prepare image for the model
    inputs = image_processor(images=image, return_tensors="pt")

    # run inference without tracking gradients
    with torch.no_grad():
        outputs = model(**inputs)
        predicted_depth = outputs.predicted_depth

    # interpolate to original size; PIL reports (width, height),
    # so the tuple is reversed to (height, width)
    prediction = torch.nn.functional.interpolate(
        predicted_depth.unsqueeze(1),
        size=image.size[::-1],
        mode="bicubic",
        align_corners=False,
    )

    # visualize the prediction as an 8-bit grayscale image
    output = prediction.squeeze().cpu().numpy()
    formatted = (output * 255 / np.max(output)).astype("uint8")
    depth = Image.fromarray(formatted)
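The two post-processing steps in the example above (reversing the PIL size and rescaling to 8 bits) can be isolated into small helpers. The following is a minimal sketch, not part of the original model card: the helper names `target_size_hw` and `depth_to_uint8` are hypothetical, the toy array stands in for real model output, and the zero-maximum guard is an added assumption about how a degenerate map should be handled.

```python
import numpy as np


def target_size_hw(pil_size):
    """Convert PIL's (width, height) into the (height, width) order used by tensors."""
    width, height = pil_size
    return (height, width)


def depth_to_uint8(depth):
    """Scale a non-negative depth map to 0..255, guarding against an all-zero map."""
    depth = np.asarray(depth, dtype=np.float32)
    max_value = float(depth.max())
    if max_value <= 0.0:
        # Nothing to scale; return a black image instead of dividing by zero.
        return np.zeros(depth.shape, dtype=np.uint8)
    scaled = depth * (255.0 / max_value)
    # Clip before casting so stray negative values cannot wrap around.
    return np.clip(scaled, 0, 255).astype(np.uint8)


# Illustrative values only, not real model output.
toy_depth = np.array([[0.0, 2.0], [4.0, 8.0]])
print(target_size_hw((640, 480)))
print(depth_to_uint8(toy_depth))
```

Because the map is divided by its own maximum, the resulting image shows relative depth within one picture only; gray levels from two different images are not directly comparable.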

For more code examples, see the documentation.
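As a shorter alternative to the manual steps above, the transformers `pipeline` API offers a `"depth-estimation"` task that wraps preprocessing, inference, and resizing. The sketch below is an illustration rather than part of the original model card: the wrapper name `estimate_depth` is hypothetical, and it assumes that transformers, torch, and Pillow are installed and that the checkpoint can be downloaded.

```python
def estimate_depth(image, model_name="vinvino02/glpn-kitti"):
    """Run the depth-estimation pipeline on a PIL image (or an image path/URL)."""
    # Imported lazily so that defining this helper does not require transformers.
    from transformers import pipeline

    depth_estimator = pipeline("depth-estimation", model=model_name)
    result = depth_estimator(image)
    # result["predicted_depth"] is a torch tensor with the raw prediction;
    # result["depth"] is a PIL image of the depth map.
    return result["predicted_depth"], result["depth"]


# Example call (downloads the checkpoint on first use):
# predicted_depth, depth_image = estimate_depth(
#     "http://images.cocodataset.org/val2017/000000039769.jpg"
# )
```

The explicit version shown earlier remains the better choice when the interpolation mode or the normalization needs to be controlled directly.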

BibTeX entry and citation info

    @article{DBLP:journals/corr/abs-2201-07436,
      author     = {Doyeon Kim and
                    Woonghyun Ga and
                    Pyunghwan Ahn and
                    Donggyu Joo and
                    Sehwan Chun and
                    Junmo Kim},
      title      = {Global-Local Path Networks for Monocular Depth Estimation with Vertical CutDepth},
      journal    = {CoRR},
      volume     = {abs/2201.07436},
      year       = {2022},
      url        = {https://arxiv.org/abs/2201.07436},
      eprinttype = {arXiv},
      eprint     = {2201.07436},
      timestamp  = {Fri, 21 Jan 2022 13:57:15 +0100},
      biburl     = {https://dblp.org/rec/journals/corr/abs-2201-07436.bib},
      bibsource  = {dblp computer science bibliography, https://dblp.org}
    }